您好,登錄后才能下訂單哦!

您好,登錄后才能下訂單哦!

環境描述

根據需求,部署hadoop-3.0.0基礎功能架構,以三節點為安裝環境,操作系統CentOS 7 x64;

openstack創建三臺虛擬機,開始部署;

IP地址 主機名

10.10.204.31 master

10.10.204.32 node1

10.10.204.33 node2

功能節點規劃

master node1 node2

NameNode

DataNode DataNode DataNode

HQuorumPeer NodeManager NodeManager

ResourceManager SecondaryNameNode

HMaster

三節點執行初始化操作;

1.更新系統環境;

yum clean all && yum makecache fast && yum update -y && yum install -y wget vim net-tools git ftp zip unzip

2.根據規劃修改主機名;

hostnamectl set-hostname master

hostnamectl set-hostname node1

hostnamectl set-hostname node2

3.添加hosts解析;

vim /etc/hosts

10.10.204.31 master

10.10.204.32 node1

10.10.204.33 node2

4.ping測試三臺主機之間主機名互相解析正常;

ping master

ping node1

ping node2

5.下載安裝JDK環境;

#hadoop 3.0版本需要JDK 8.0支持;

cd /opt/

#通常情況下,需要登錄oracle官網,注冊賬戶,同意其協議后,才能下載,在此根據鏈接直接wget方式下載;

wget --no-cookies --no-check-certificate --header "Cookie: gpw_e24=http%3A%2F%2Fwww.oracle.com%2F; oraclelicense=accept-securebackup-cookie" "https://download.oracle.com/otn-pub/java/jdk/8u202-b08/1961070e4c9b4e26a04e7f5a083f551e/jdk-8u202-linux-x64.tar.gz"

#創建JDK和hadoop安裝路徑

mkdir /opt/modules

cp /opt/jdk-8u202-linux-x64.tar.gz /opt/modules

cd /opt/modules

tar zxvf jdk-8u202-linux-x64.tar.gz

#配置環境變量

export JAVA_HOME="/opt/modules/jdk1.8.0_202"

export PATH=$JAVA_HOME/bin/:$PATH

source /etc/profile

#永久生效配置方式

vim /etc/bashrc

#add lines

export JAVA_HOME="/opt/modules/jdk1.8.0_202"

export PATH=$JAVA_HOME/bin/:$PATH

6.下載解壓hadoop-3.0.0安裝包;

cd /opt/

wget http://archive.apache.org/dist/hadoop/core/hadoop-3.0.0/hadoop-3.0.0.tar.gz

cp /opt/hadoop-3.0.0.tar.gz /modules/

cd /opt/modules

tar zxvf hadoop-3.0.0.tar.gz

7.關閉selinux/firewalld防火墻;

systemctl disable firewalld

vim /etc/sysconfig/selinux

SELINUX=disabled

8.重啟服務器;

reboot

master節點操作;

說明:

測試環境,全部使用root賬戶進行安裝運行hadoop;

1.添加ssh 免密碼登陸;

cd

ssh-keygen

##三次回車即可

#拷貝密鑰文件到node1/node2

ssh-copy-id master

ssh-copy-id node1

ssh-copy-id node2

2.測試免密碼登陸正常;

ssh master

ssh node1

ssh node2

3.修改hadoop配置文件;

對于hadoop配置,需修改配置文件:

hadoop-env.sh

yarn-env.sh

core-site.xml

hdfs-site.xml

mapred-site.xml

yarn-site.xml

workers

cd /opt/modules/hadoop-3.0.0/etc/hadoop

vim hadoop-env.sh

export JAVA_HOME=/opt/modules/jdk1.8.0_202

vim yarn-env.sh

export JAVA_HOME=/opt/modules/jdk1.8.0_202

配置文件解析:

https://blog.csdn.net/m290345792/article/details/79141336

vim core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://master:9000</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>131072</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/data/tmp</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.hosts</name>

<value></value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.groups</name>

<value></value>

</property>

</configuration>

#io.file.buffer.size 隊列文件中的讀/寫緩沖區大小

vim hdfs-site.xml

<configuration>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>slave2:50090</value>

</property>

<property>

<name>dfs.replication</name>

<value>3</value>

<description>副本個數,配置默認是3,應小于datanode機器數量</description>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/data/tmp</value>

</property>

</configuration>

###namenode配置

#dfs.namenode.name.dir NameNode持久存儲名稱空間和事務日志的本地文件系統上路徑,如果這是一個逗號分隔的目錄列表,那么將在所有目錄中復制名稱的表,以進行冗余。

#dfs.hosts / dfs.hosts.exclude 包含/摒棄的數據存儲節點清單,如果有必要,使用這些文件來控制允許的數據存儲節點列表

#dfs.blocksize HDFS 塊大小為128MB(默認)的大文件系統

#dfs.namenode.handler.count 多個NameNode服務器線程處理來自大量數據節點的rpc

###datanode配置

#dfs.datanode.data.dir DataNode的本地文件系統上存儲塊的逗號分隔的路徑列表,如果這是一個逗號分隔的目錄列表,那么數據將存儲在所有命名的目錄中,通常在不同的設備上。

vim mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.application.classpath</name>

<value>

/opt/modules/hadoop-3.0.0/etc/hadoop,

/opt/modules/hadoop-3.0.0/share/hadoop/common/,

/opt/modules/hadoop-3.0.0/share/hadoop/common/lib/,

/opt/modules/hadoop-3.0.0/share/hadoop/hdfs/,

/opt/modules/hadoop-3.0.0/share/hadoop/hdfs/lib/,

/opt/modules/hadoop-3.0.0/share/hadoop/mapreduce/,

/opt/modules/hadoop-3.0.0/share/hadoop/mapreduce/lib/,

/opt/modules/hadoop-3.0.0/share/hadoop/yarn/,

/opt/modules/hadoop-3.0.0/share/hadoop/yarn/lib/

</value>

</property>

</configuration>

vim yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandle</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>master:8025</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>master:8030</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>master:8040</value>

</property>

</configuration>

###resourcemanager和nodemanager配置

#yarn.acl.enable 允許ACLs,默認是false

#yarn.admin.acl 在集群上設置adminis。 ACLs are of for comma-separated-usersspacecomma-separated-groups.默認是指定值為表示任何人。特別的是空格表示皆無權限。

#yarn.log-aggregation-enable Configuration to enable or disable log aggregation 配置是否允許日志聚合。

###resourcemanager配置

#yarn.resourcemanager.address 值:ResourceManager host:port 用于客戶端任務提交.說明:如果設置host:port ,將覆蓋yarn.resourcemanager.hostname.host:port主機名。

#yarn.resourcemanager.scheduler.address 值:ResourceManager host:port 用于應用管理者向調度程序獲取資源。說明:如果設置host:port ,將覆蓋yarn.resourcemanager.hostname主機名

#yarn.resourcemanager.resource-tracker.address 值:ResourceManager host:port 用于NodeManagers.說明:如果設置host:port ,將覆蓋yarn.resourcemanager.hostname的主機名設置。

#yarn.resourcemanager.admin.address 值:ResourceManager host:port 用于管理命令。說明:如果設置host:port ,將覆蓋yarn.resourcemanager.hostname主機名的設置

#yarn.resourcemanager.webapp.address 值:ResourceManager web-ui host:port.說明:如果設置host:port ,將覆蓋yarn.resourcemanager.hostname主機名的設置

#yarn.resourcemanager.hostname 值:ResourceManager host. 說明:可設置為代替所有yarn.resourcemanager address 資源的主機單一主機名。其結果默認端口為ResourceManager組件。

#yarn.resourcemanager.scheduler.class 值:ResourceManager 調度類. 說明:Capacity調度 (推薦), Fair調度 (也推薦),或Fifo調度.使用完全限定類名,如 org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler.

#yarn.scheduler.minimum-allocation-mb 值:在 Resource Manager上為每個請求的容器分配的最小內存.

#yarn.scheduler.maximum-allocation-mb 值:在Resource Manager上為每個請求的容器分配的最大內存

#yarn.resourcemanager.nodes.include-path / yarn.resourcemanager.nodes.exclude-path 值:允許/摒棄的nodeManagers列表 說明:如果必要,可使用這些文件來控制允許的NodeManagers列表

vim workers

master

slave1

slave2

4.修改啟動文件

#因為測試環境以root賬戶啟動hadoop服務,所以需對啟動文件添加權限;

cd /opt/modules/hadoop-3.0.0/sbin

vim start-dfs.sh

#add lines

HDFS_DATANODE_USER=root

HDFS_DATANODE_SECURE_USER=root

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

HDFS_ZKFC_USER=root

HDFS_JOURNALNODE_USER=root

vim stop-dfs.sh

#add lines

HDFS_DATANODE_USER=root

HDFS_DATANODE_SECURE_USER=root

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

HDFS_ZKFC_USER=root

HDFS_JOURNALNODE_USER=root

vim start-yarn.sh

#add lines

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

vim stop-yarn.sh

#add lines

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

5.推送hadoop配置文件;

cd /opt/modules/hadoop-3.0.0/etc/hadoop

scp ./ root@node1:/opt/modules/hadoop-3.0.0/etc/hadoop/

scp ./ root@node2:/opt/modules/hadoop-3.0.0/etc/hadoop/

6.格式化hdfs;

#配置文件中指定hdfs存儲路徑為/data/tmp/

/opt/modules/hadoop-3.0.0/bin/hdfs namenode -format

7.啟動hadoop服務;

#namenode 三節點

cd /opt/modules/zookeeper-3.4.13

./bin/zkServer.sh start

cd /opt/modules/kafka_2.12-2.1.1

./bin/kafka-server-start.sh ./config/server.properties &

/opt/modules/hadoop-3.0.0/bin/hdfs journalnode &

#master節點

/opt/modules/hadoop-3.0.0/bin/hdfs namenode -format

/opt/modules/hadoop-3.0.0/bin/hdfs zkfc -formatZK

/opt/modules/hadoop-3.0.0/bin/hdfs namenode &

#slave1節點

/opt/modules/hadoop-3.0.0/bin/hdfs namenode -bootstrapStandby

/opt/modules/hadoop-3.0.0/bin/hdfs namenode &

/opt/modules/hadoop-3.0.0/bin/yarn resourcemanager &

/opt/modules/hadoop-3.0.0/bin/yarn nodemanager &

#slave2節點

/opt/modules/hadoop-3.0.0/bin/hdfs namenode -bootstrapStandby

/opt/modules/hadoop-3.0.0/bin/hdfs namenode &

/opt/modules/hadoop-3.0.0/bin/yarn resourcemanager &

/opt/modules/hadoop-3.0.0/bin/yarn nodemanager &

#namenode 三節點

/opt/modules/hadoop-3.0.0/bin/hdfs zkfc &

#master節點

cd /opt/modules/hadoop-3.0.0/

./sbin/start-all.sh

cd /opt/modules/hadoop-3.0.0/hbase-2.0.4

./bin/start-hbase.sh

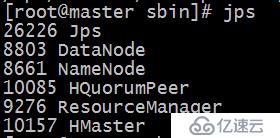

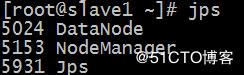

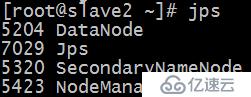

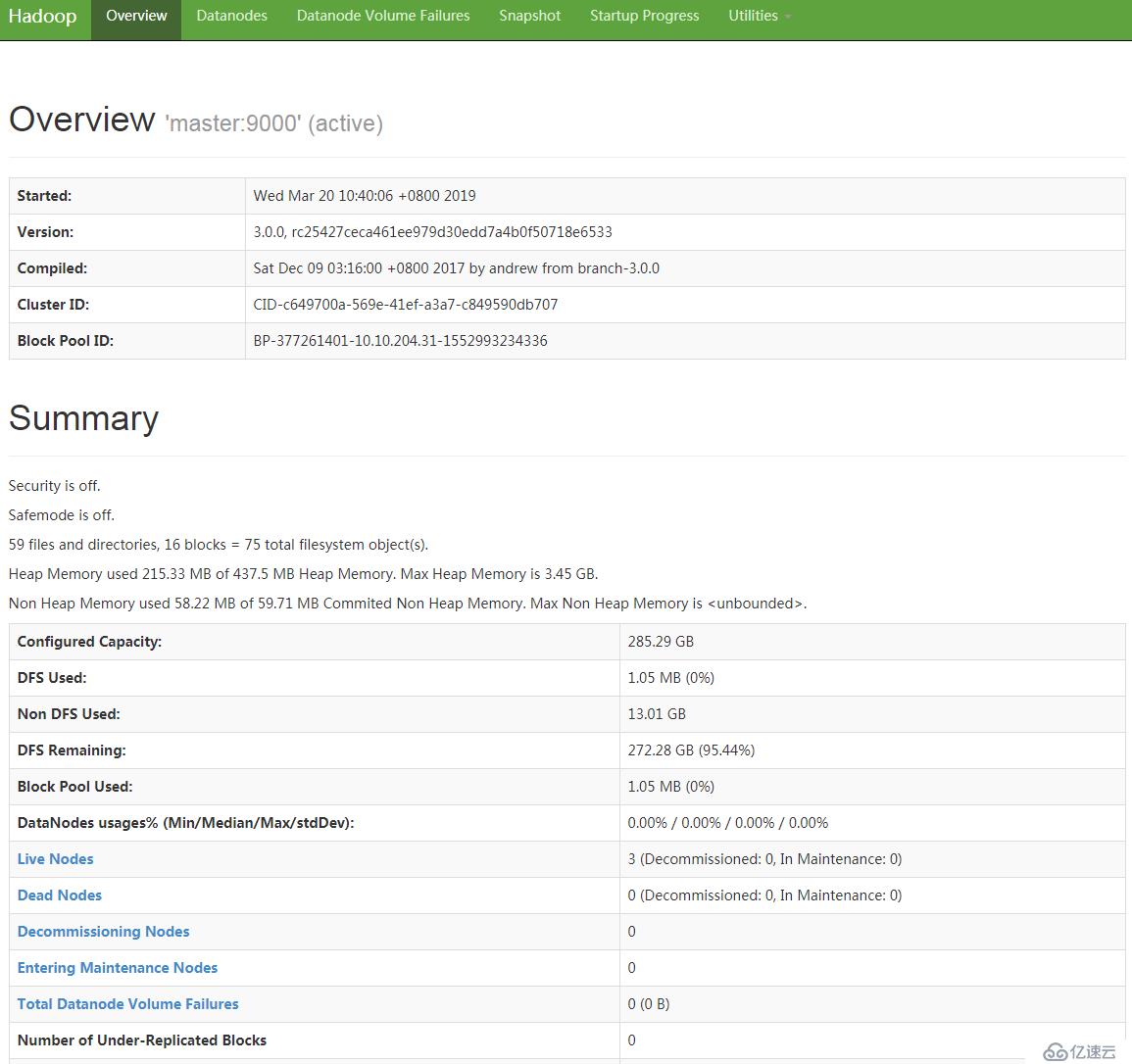

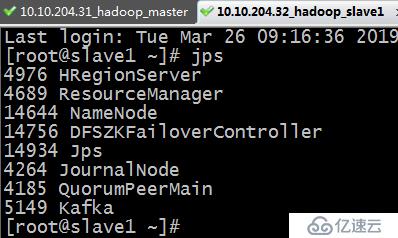

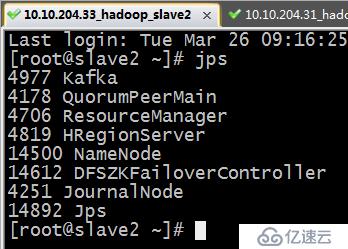

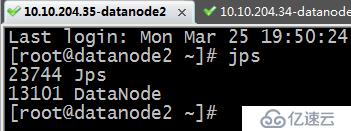

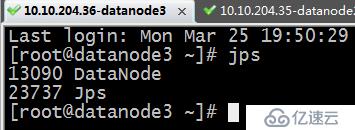

8.查看各個節點hadoop服務正常啟動;

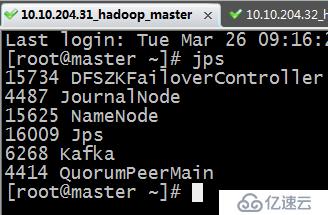

jps

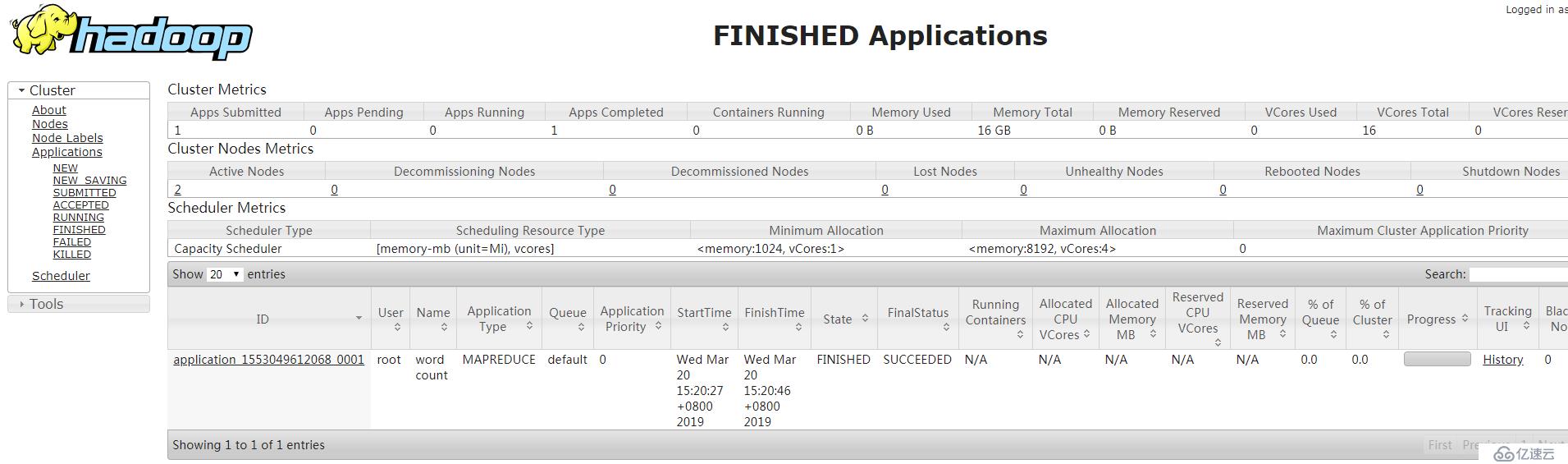

9.運行測試;

cd /opt/modules/hadoop-3.0.0

#hdfs上創建測試路徑

./bin/hdfs dfs -mkdir /testdir1

#創建測試文件

cd /opt

touch wc.input

vim wc.input

hadoop mapreduce hive

hbase spark storm

sqoop hadoop hive

spark hadoop

#將wc.input上傳到HDFS

bin/hdfs dfs -put /opt/wc.input /testdir1/wc.input

#運行hadoop自帶的mapreduce Demo

./bin/yarn jar /opt/modules/hadoop-3.0.0/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.0.0.jar wordcount /testdir1/wc.input /output

#查看輸出文件

bin/hdfs dfs -ls /output

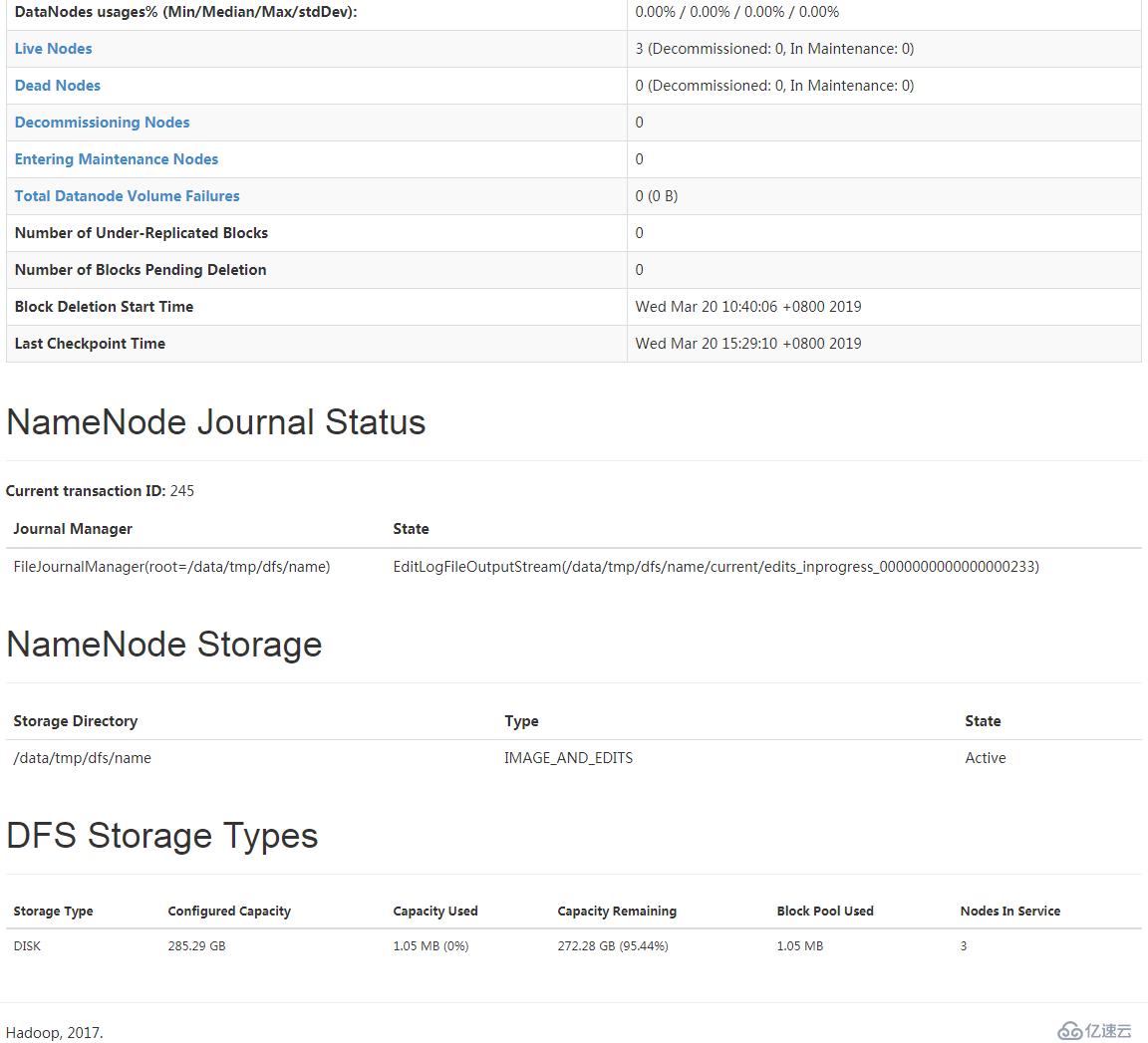

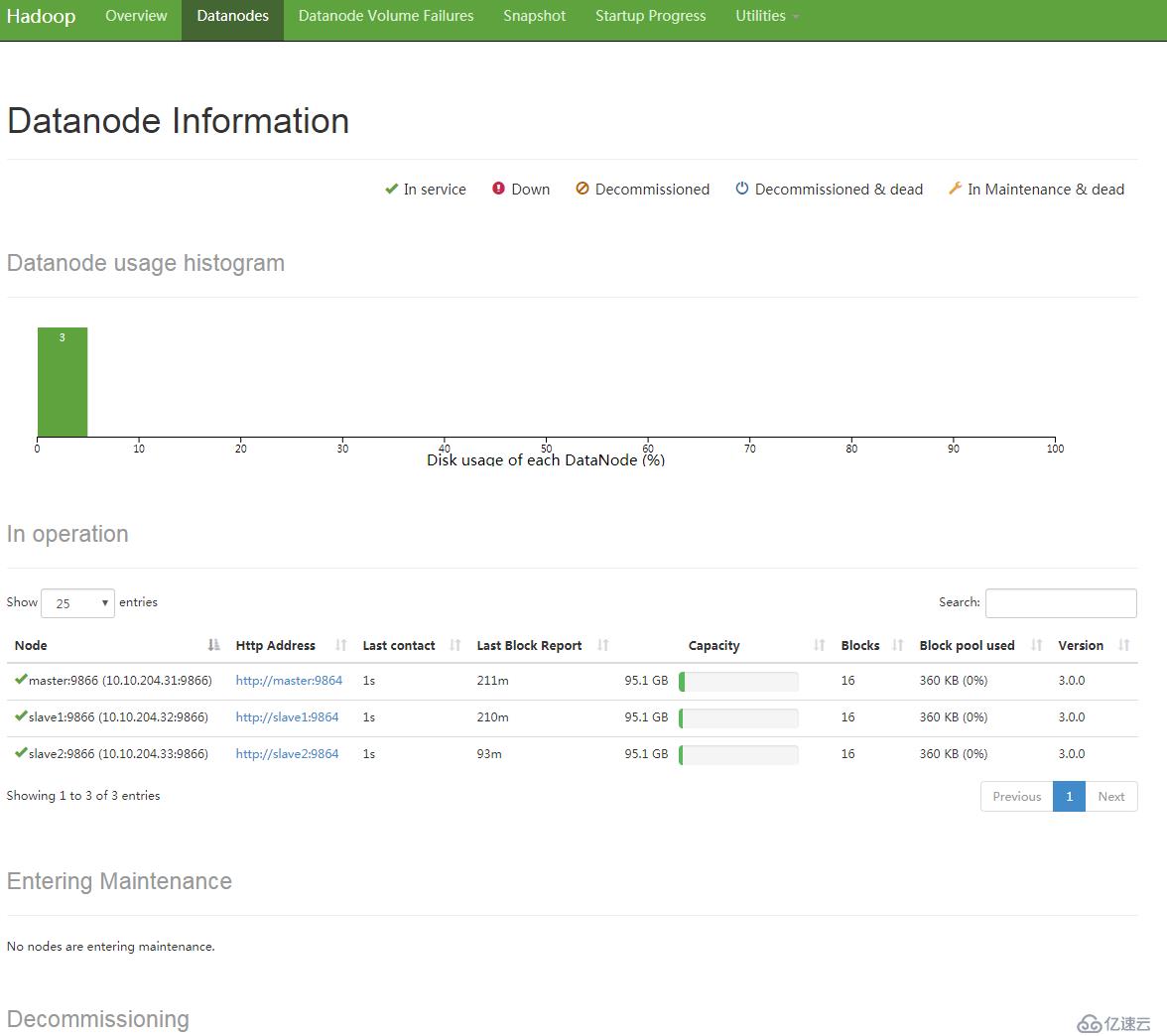

10.狀態截圖

所有服務正常啟動后截圖:

zookeeper+kafka+namenode+journalnode+hbase

路過點一贊,技術升一線,加油↖(^ω^)↗!

免責聲明:本站發布的內容(圖片、視頻和文字)以原創、轉載和分享為主,文章觀點不代表本網站立場,如果涉及侵權請聯系站長郵箱:is@yisu.com進行舉報,并提供相關證據,一經查實,將立刻刪除涉嫌侵權內容。