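This article explains in detail how to build a face-detection app on Android with OpenCV. It is a practical exercise, shared here as a reference; hopefully you will get something out of it.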

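Seeing is believing, so let's start with the result of running the app.

In the finished app, you tap "選擇圖片" (Select Image), choose a picture from the phone, and then tap "處理" (Process). The app draws a rectangle around each detected face and also marks the nose. Because this is only a demonstration, the app is deliberately simple: it detects faces and noses but no other facial features, and it only works on simple pictures. If the background is too cluttered, it will fail to find the face.

The steps below walk through building this app from scratch.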

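So that you can download the same version of Android Studio that I used, I uploaded the installer to Weiyun: https://share.weiyun.com/bHVOWGC9

After downloading, click Next through the installer to finish. The installation needs network access and may fail partway through. If it does, retry a few times; if it still fails, you may need to turn on a VPN.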

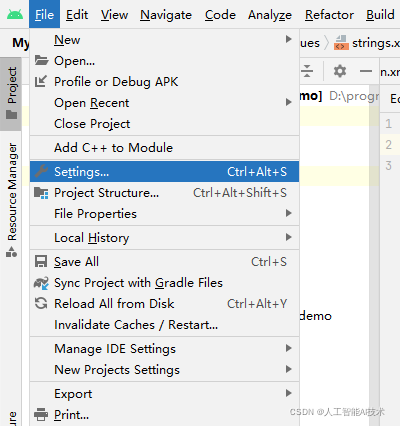

Open Android Studio and click "File" -> "Settings".

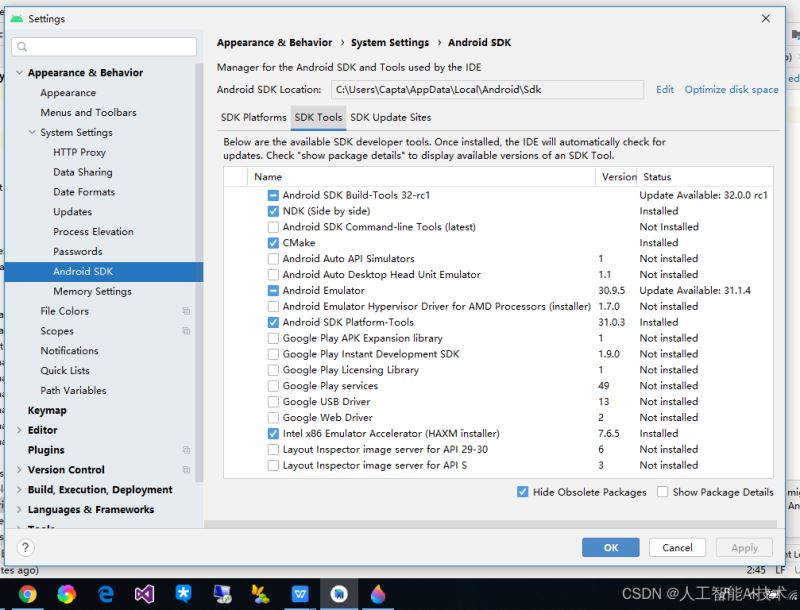

Click "Appearance & Behavior" -> "System Settings" -> "Android SDK" -> "SDK Tools".

Then check "NDK" and "CMake" and click "OK". Downloading these two tools may take a while; if it fails, retry or turn on a VPN.

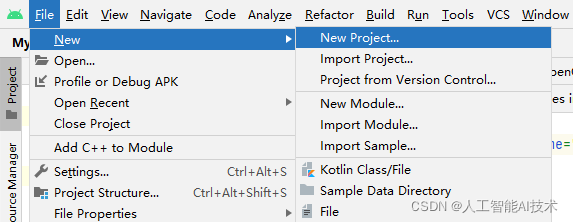

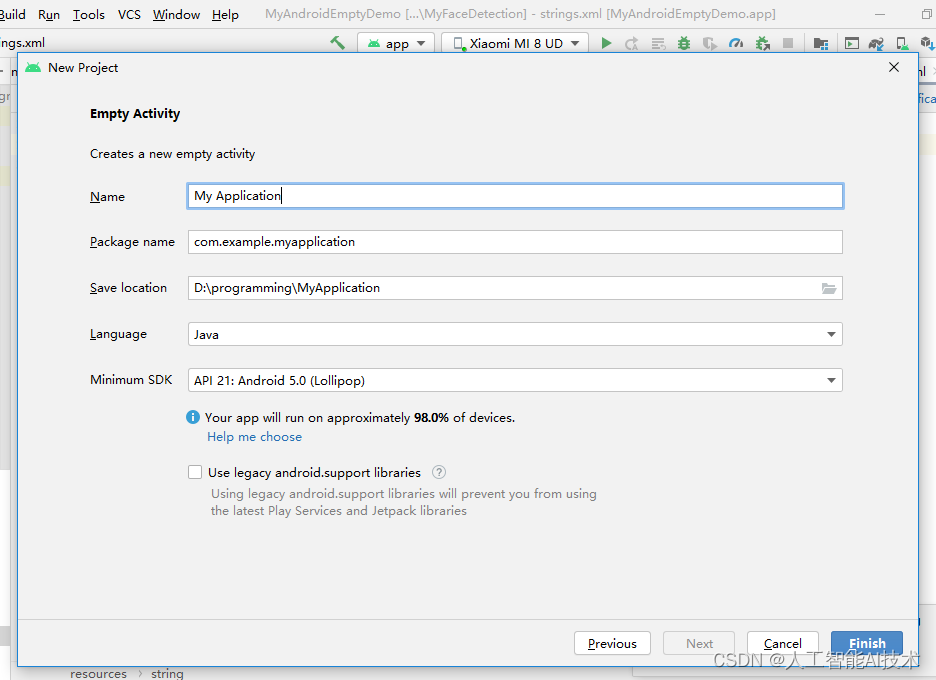

Click "File" -> "New" -> "New Project".

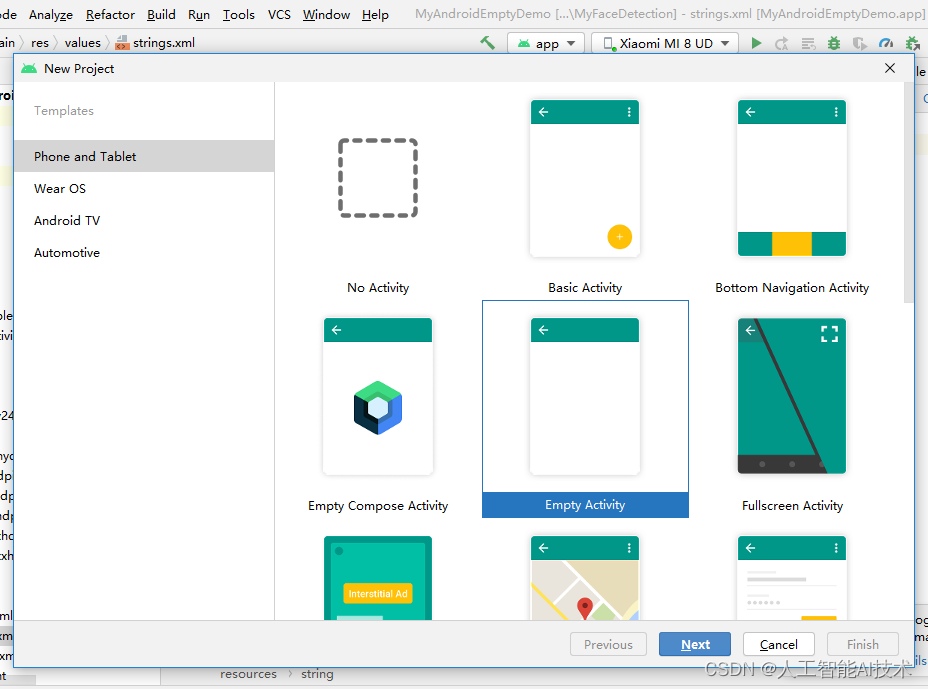

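Select "Empty Activity" and click "Next".

Set "Language" to "Java" and "Minimum SDK" to "API 21", then click "Finish".

Download the OpenCV Android SDK (opencv-4.5.4-android-sdk is used here), then unzip it.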

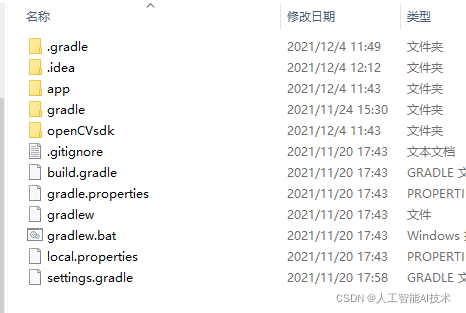

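Copy the sdk folder under opencv-4.5.4-android-sdk\OpenCV-android-sdk into the Android project you created in step 3, that is, into D:\programming\MyApplication as shown in the step 3 screenshot. Then rename the copied sdk folder to openCVsdk.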

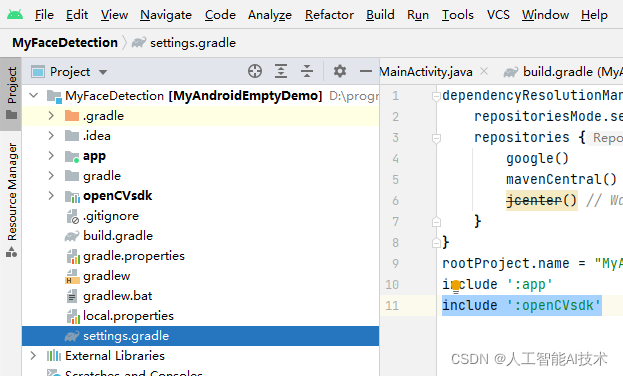

Open "Project" -> "settings.gradle" and add the line include ':openCVsdk' to the file.

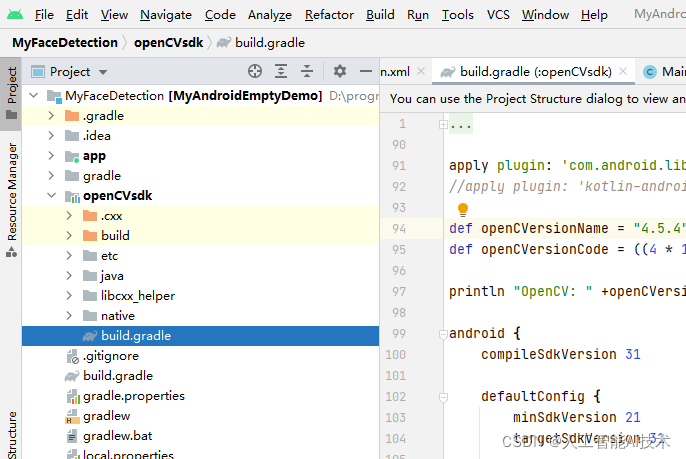
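For reference, the end of settings.gradle might look like the fragment below. This is only a sketch: the rootProject.name value and the ':app' line are assumptions based on a default Android Studio project, and only the last line comes from this step.

```groovy
// settings.gradle (fragment); everything except the last line is an assumed default
rootProject.name = "My Application"   // assumption: whatever name Android Studio generated
include ':app'
include ':openCVsdk'                  // the line added in this step
```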

Open "Project" -> "openCVsdk" -> "build.gradle".

Change apply plugin: 'kotlin-android' to //apply plugin: 'kotlin-android' (that is, comment the line out).

Change compileSdkVersion, minSdkVersion, and targetSdkVersion to 31, 21, and 31 respectively.

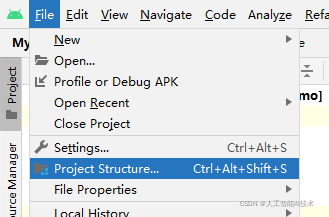
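After these edits, the relevant parts of openCVsdk/build.gradle would look roughly like this. It is a sketch of only the lines touched in this step; the surrounding layout is assumed from the OpenCV 4.5.4 SDK's build file, and everything else in that file stays unchanged.

```groovy
// openCVsdk/build.gradle (fragment); unrelated lines omitted
apply plugin: 'com.android.library'
//apply plugin: 'kotlin-android'      // commented out in this step

android {
    compileSdkVersion 31

    defaultConfig {
        minSdkVersion 21
        targetSdkVersion 31
    }
}
```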

Click "File" -> "Project Structure".

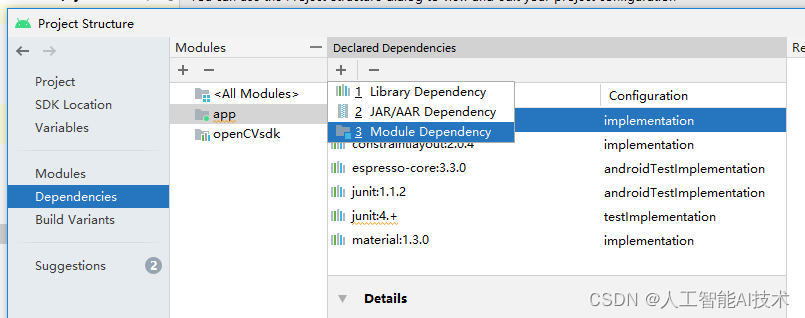

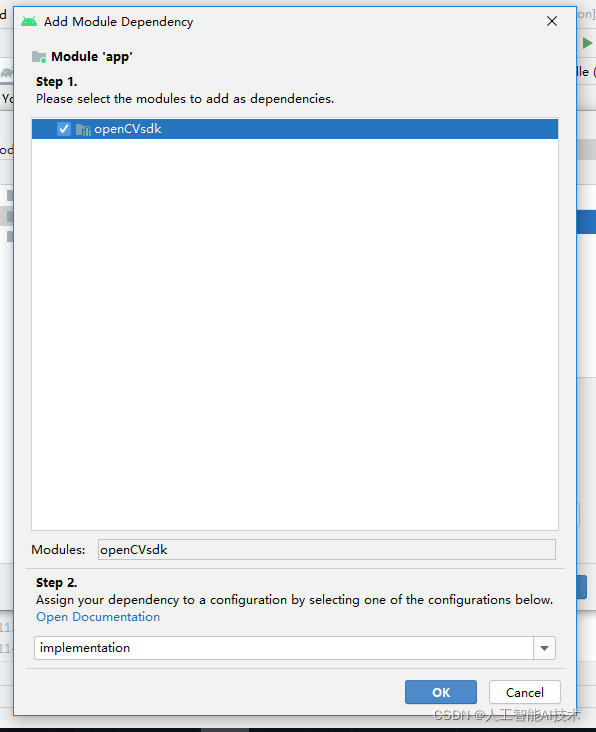

Click "Dependencies" -> "app" -> "+" -> "Module Dependency".

Select "openCVsdk", click "OK", and then click "OK" in the parent window.

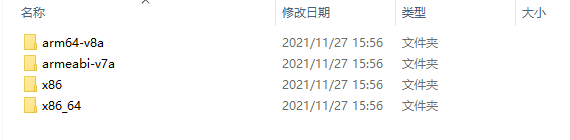
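The Project Structure dialog normally records this choice in app/build.gradle. If you want to check it, or add it by hand, the entry is expected to look like the fragment below; the exact form Android Studio writes may differ slightly between versions, so treat this as a sketch.

```groovy
// app/build.gradle (fragment)
dependencies {
    implementation project(path: ':openCVsdk')   // module dependency on the OpenCV SDK
    // ...other dependencies generated by Android Studio stay unchanged
}
```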

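In the Android project folder, create a new folder named jniLibs under app\src, then copy everything from opencv-4.5.4-android-sdk\OpenCV-android-sdk\sdk\native\staticlibs in the OpenCV folder into jniLibs. If the OpenCV library fails to load at runtime, note that Gradle's default location for native libraries is app\src\main\jniLibs, and that the SDK's shared .so libraries are under sdk\native\libs.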

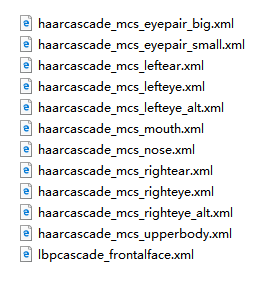

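Download the classifiers. After unzipping, copy the files shown in the screenshot below into app\src\main\res\raw in the project folder. The code later in this article loads two of them: lbpcascade_frontalface.xml and haarcascade_mcs_nose.xml.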

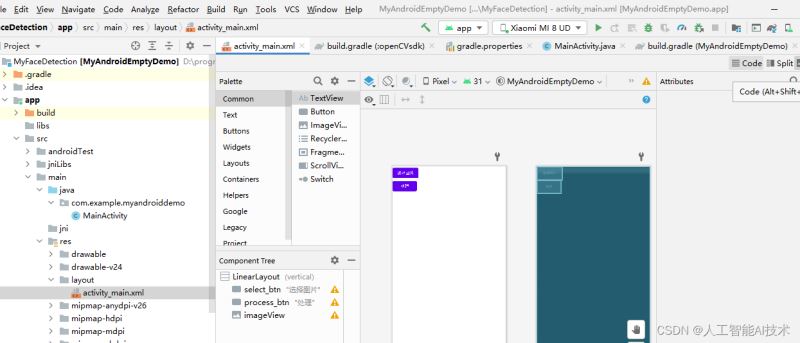
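The classifiers are packaged as raw resources, but OpenCV's CascadeClassifier is constructed from a file path, so the activity code later copies each resource to a private file before loading it. The copy step on its own can be sketched in plain Java as follows. The class name CascadeCopySketch and the method copyToFile are hypothetical names used only for this illustration, and a byte array stands in for the Android resource stream.

```java
import java.io.ByteArrayInputStream;
import java.io.File;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;

// Hypothetical helper, not part of the original app: shows only the stream-to-file copy.
class CascadeCopySketch {

    /** Copies every byte of the input stream into the target file and returns that file. */
    static File copyToFile(InputStream in, File target) throws IOException {
        // try-with-resources closes both streams even if the copy fails midway
        try (InputStream is = in;
             OutputStream os = Files.newOutputStream(target.toPath())) {
            byte[] buffer = new byte[4096];
            int bytesRead;
            while ((bytesRead = is.read(buffer)) != -1) {
                os.write(buffer, 0, bytesRead);
            }
        }
        return target;
    }

    public static void main(String[] args) throws IOException {
        // Stand-in for getResources().openRawResource(...) on Android
        byte[] fakeCascade = "<opencv_storage/>".getBytes(StandardCharsets.UTF_8);
        File target = File.createTempFile("cascade", ".xml");
        copyToFile(new ByteArrayInputStream(fakeCascade), target);
        System.out.println("copied bytes: " + target.length());
        target.delete();
    }
}
```

On Android, the resulting file's absolute path is what gets passed to the CascadeClassifier constructor.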

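In the "Project" panel, double-click "activity_main.xml" under "app" -> "main" -> "res", then click "Code" in the upper-right corner.

Replace the entire contents of the file with the following: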

<?xml version="1.0" encoding="utf-8"?>
<LinearLayout xmlns:android="http://schemas.android.com/apk/res/android"
    xmlns:app="http://schemas.android.com/apk/res-auto"
    xmlns:tools="http://schemas.android.com/tools"
    android:layout_width="match_parent"
    android:layout_height="match_parent"
    tools:context=".MainActivity"
    android:orientation="vertical">
    <Button
        android:id="@+id/select_btn"
        android:layout_width="wrap_content"
        android:layout_height="wrap_content"
        android:text="選擇圖片" />
    <Button
        android:id="@+id/process_btn"
        android:layout_width="wrap_content"
        android:layout_height="wrap_content"
        android:text="處理" />
    <ImageView
        android:id="@+id/imageView"
        android:layout_width="wrap_content"
        android:layout_height="wrap_content" />
</LinearLayout>

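In the "Project" panel, double-click "MainActivity" under "app" -> "main" -> "java" -> "com.example…". Replace its contents with the code below, but keep the first line of the original file (the package declaration).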

import androidx.appcompat.app.AppCompatActivity;

import android.content.Context;
import android.content.Intent;
import android.graphics.Bitmap;
import android.graphics.BitmapFactory;
import android.net.Uri;
import android.os.Bundle;
import android.util.Log;
import android.view.View;
import android.widget.Button;
import android.widget.ImageView;

import org.opencv.android.OpenCVLoader;
import org.opencv.android.Utils;
import org.opencv.core.CvType;
import org.opencv.core.Mat;
import org.opencv.core.MatOfRect;
import org.opencv.core.Point;
import org.opencv.core.Rect;
import org.opencv.core.Scalar;
import org.opencv.core.Size;
import org.opencv.imgproc.Imgproc;
import org.opencv.objdetect.CascadeClassifier;

import java.io.File;
import java.io.FileOutputStream;
import java.io.IOException;
import java.io.InputStream;

public class MainActivity extends AppCompatActivity {
    private static final String TAG = "OCVSample::Activity";
    // Color used for the face and nose rectangles (green, fully opaque).
    private static final Scalar FACE_RECT_COLOR = new Scalar(0, 255, 0, 255);
    public static final int JAVA_DETECTOR = 0;
    public static final int NATIVE_DETECTOR = 1;

    // Longest side, in pixels, allowed for the decoded bitmap.
    private double max_size = 1024;
    private int PICK_IMAGE_REQUEST = 1;
    private ImageView myImageView;
    private Bitmap selectbp;
    private Mat mGray;
    private File mCascadeFile;
    private CascadeClassifier mJavaDetector, mNoseDetector;
    private int mDetectorType = JAVA_DETECTOR;
    // Minimum face size as a fraction of the image height.
    private float mRelativeFaceSize = 0.2f;
    private int mAbsoluteFaceSize = 0;

    @Override
    protected void onCreate(Bundle savedInstanceState) {
        super.onCreate(savedInstanceState);
        setContentView(R.layout.activity_main);
        // Load the OpenCV native library before any OpenCV class is used.
        staticLoadCVLibraries();

        myImageView = (ImageView) findViewById(R.id.imageView);
        myImageView.setScaleType(ImageView.ScaleType.FIT_CENTER);

        // "選擇圖片" button: open the system picker to choose an image.
        Button selectImageBtn = (Button) findViewById(R.id.select_btn);
        selectImageBtn.setOnClickListener(new View.OnClickListener() {
            @Override
            public void onClick(View v) {
                selectImage();
            }

            private void selectImage() {
                Intent intent = new Intent();
                intent.setType("image/*");
                intent.setAction(Intent.ACTION_GET_CONTENT);
                startActivityForResult(Intent.createChooser(intent, "選擇圖像..."), PICK_IMAGE_REQUEST);
            }
        });

        // "處理" button: run face and nose detection on the selected image.
        Button processBtn = (Button) findViewById(R.id.process_btn);
        processBtn.setOnClickListener(new View.OnClickListener() {
            @Override
            public void onClick(View v) {
                convertGray();
            }
        });
    }

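    private void staticLoadCVLibraries() {
        boolean load = OpenCVLoader.initDebug();
        if (load) {
            Log.i("CV", "Open CV Libraries loaded...");
        } else {
            // Without the native library every later OpenCV call will fail.
            Log.e("CV", "Failed to load Open CV Libraries");
        }
    }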

    private void convertGray() {
        // Nothing to process until the user has picked an image.
        if (selectbp == null) {
            Log.w(TAG, "No image selected yet");
            return;
        }

        Mat src = new Mat();
        Mat temp = new Mat();
        Mat dst = new Mat();
        // bitmapToMat produces an RGBA Mat, so convert with the RGB(A) constants.
        Utils.bitmapToMat(selectbp, src);
        Imgproc.cvtColor(src, temp, Imgproc.COLOR_RGBA2RGB);
        Log.i("CV", "image type:" + (temp.type() == CvType.CV_8UC3));
        Imgproc.cvtColor(temp, dst, Imgproc.COLOR_RGB2GRAY);
        mGray = dst;

        // Load each cascade only once and reuse it on later clicks.
        if (mJavaDetector == null) {
            mJavaDetector = loadDetector(R.raw.lbpcascade_frontalface, "lbpcascade_frontalface.xml");
        }
        if (mNoseDetector == null) {
            mNoseDetector = loadDetector(R.raw.haarcascade_mcs_nose, "haarcascade_mcs_nose.xml");
        }

        // Recompute the minimum face size for every image, since image heights differ.
        int minFace = Math.round(mGray.rows() * mRelativeFaceSize);
        if (minFace > 0) {
            mAbsoluteFaceSize = minFace;
        }

        MatOfRect faces = new MatOfRect();
        if (mJavaDetector != null) {
            mJavaDetector.detectMultiScale(mGray, faces, 1.1, 2, 2, // TODO: objdetect.CV_HAAR_SCALE_IMAGE
                    new Size(mAbsoluteFaceSize, mAbsoluteFaceSize), new Size());
        }

        Rect[] facesArray = faces.toArray();
        for (int i = 0; i < facesArray.length; i++) {
            Rect face = facesArray[i];
            Imgproc.rectangle(src, face.tl(), face.br(), FACE_RECT_COLOR, 3);

            if (mNoseDetector == null) {
                continue;
            }
            Log.d(TAG, "start to detect nose!");
            // Search for the nose only inside the detected face region.
            Mat faceROI = mGray.submat(face);
            MatOfRect noses = new MatOfRect();
            mNoseDetector.detectMultiScale(faceROI, noses, 1.1, 2, 2,
                    new Size(30, 30));
            Rect[] nosesArray = noses.toArray();
            // Skip drawing when no nose was found; indexing an empty array would crash.
            if (nosesArray.length > 0) {
                Rect nose = nosesArray[0];
                // Nose coordinates are relative to the face ROI, so offset them by the face origin.
                Imgproc.rectangle(src,
                        new Point(face.x + nose.x, face.y + nose.y),
                        new Point(face.x + nose.x + nose.width, face.y + nose.y + nose.height),
                        FACE_RECT_COLOR, 3);
            }
        }

        Utils.matToBitmap(src, selectbp);
        myImageView.setImageBitmap(selectbp);
    }

    private CascadeClassifier loadDetector(int rawID, String fileName) {
        File cascadeDir = getDir("cascade", Context.MODE_PRIVATE);
        mCascadeFile = new File(cascadeDir, fileName);

        // CascadeClassifier is loaded from a file path, so copy the raw resource to private storage first.
        try (InputStream is = getResources().openRawResource(rawID);
             FileOutputStream os = new FileOutputStream(mCascadeFile)) {
            byte[] buffer = new byte[4096];
            int bytesRead;
            while ((bytesRead = is.read(buffer)) != -1) {
                os.write(buffer, 0, bytesRead);
            }
        } catch (IOException e) {
            Log.e(TAG, "Failed to load cascade. Exception thrown: " + e, e);
            return null;
        }

        Log.d(TAG, "start to load file: " + mCascadeFile.getAbsolutePath());
        CascadeClassifier classifier = new CascadeClassifier(mCascadeFile.getAbsolutePath());
        if (classifier.empty()) {
            Log.e(TAG, "Failed to load cascade classifier");
            classifier = null;
        } else {
            Log.i(TAG, "Loaded cascade classifier from " + mCascadeFile.getAbsolutePath());
        }
        return classifier;
    }

    @Override
    protected void onActivityResult(int requestCode, int resultCode, Intent data) {
        super.onActivityResult(requestCode, resultCode, data);
        if (requestCode != PICK_IMAGE_REQUEST || resultCode != RESULT_OK
                || data == null || data.getData() == null) {
            return;
        }

        Uri uri = data.getData();
        try {
            Log.d("image-tag", "start to decode selected image now...");

            // First pass: read only the image dimensions, without allocating pixels.
            BitmapFactory.Options options = new BitmapFactory.Options();
            options.inJustDecodeBounds = true;
            try (InputStream input = getContentResolver().openInputStream(uri)) {
                BitmapFactory.decodeStream(input, null, options);
            }

            // Double the sample size until both sides fit within max_size.
            int inSampleSize = 1;
            while ((options.outWidth / inSampleSize) > max_size
                    || (options.outHeight / inSampleSize) > max_size) {
                inSampleSize *= 2;
            }

            // Second pass: decode the down-sampled pixels.
            options.inSampleSize = inSampleSize;
            options.inJustDecodeBounds = false;
            options.inPreferredConfig = Bitmap.Config.ARGB_8888;
            // The result is written back into this bitmap later, so it must be mutable.
            options.inMutable = true;
            try (InputStream input = getContentResolver().openInputStream(uri)) {
                selectbp = BitmapFactory.decodeStream(input, null, options);
            }
            myImageView.setImageBitmap(selectbp);
        } catch (Exception e) {
            Log.e(TAG, "Failed to decode selected image", e);
        }
    }
}

First, enable developer mode on the Android phone. The procedure differs from brand to brand, so search online for your model, for example "how to enable developer mode on a Xiaomi phone".

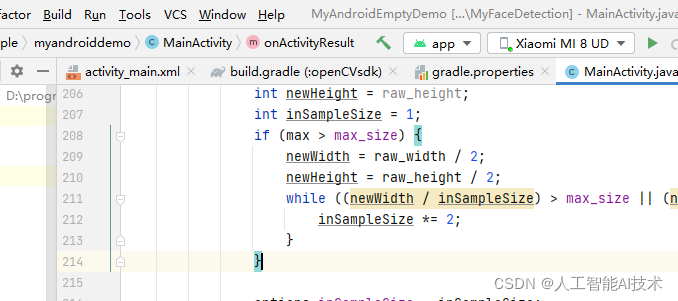

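Then connect the phone to the computer with a USB cable. Once the connection succeeds, Android Studio shows the phone model. In the screenshot it is "Xiaomi MI 8 UD", because the development phone in this example is a Xiaomi.

Click "Run" -> "Run 'app'" in the screenshot above to install and launch the app on the phone. Make sure the phone screen is on. Detection may fail on your own selfies; if it does, try the author's test image instead.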
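One possible contributor to failed detections, offered here as an assumption rather than something the author states, is the minimum face size. The activity code sets mRelativeFaceSize to 0.2f, so faces shorter than about 20% of the image height are never considered. The small sketch below shows that arithmetic in isolation; the class name MinFaceSizeSketch and the method absoluteFaceSize are hypothetical names for this illustration.

```java
// Hypothetical illustration of the minimum-face-size calculation used before detectMultiScale.
class MinFaceSizeSketch {

    /** Returns the smallest face side, in pixels, for a given image height and relative size. */
    static int absoluteFaceSize(int imageHeight, float relativeFaceSize) {
        return Math.round(imageHeight * relativeFaceSize);
    }

    public static void main(String[] args) {
        // A 1000-pixel-high image with the 0.2f ratio from the activity code
        System.out.println(absoluteFaceSize(1000, 0.2f));
        // Lowering the ratio lets smaller faces through, at the cost of more work per image
        System.out.println(absoluteFaceSize(1000, 0.1f));
    }
}
```

If faces in your photos are small relative to the frame, lowering mRelativeFaceSize is one thing to try; cluttered backgrounds and non-frontal poses can still defeat the cascade.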

