您好,登錄后才能下訂單哦!

您好,登錄后才能下訂單哦!

1.在終端輸入scrapy命令可以查看可用命令

Usage:

scrapy <command> [options] [args]

Available commands:

bench Run quick benchmark test

fetch Fetch a URL using the Scrapy downloader

genspider Generate new spider using pre-defined templates

runspider Run a self-contained spider (without creating a project)

settings Get settings values

shell Interactive scraping console

startproject Create new project

version Print Scrapy version

view Open URL in browser, as seen by Scrapyscrapy架構

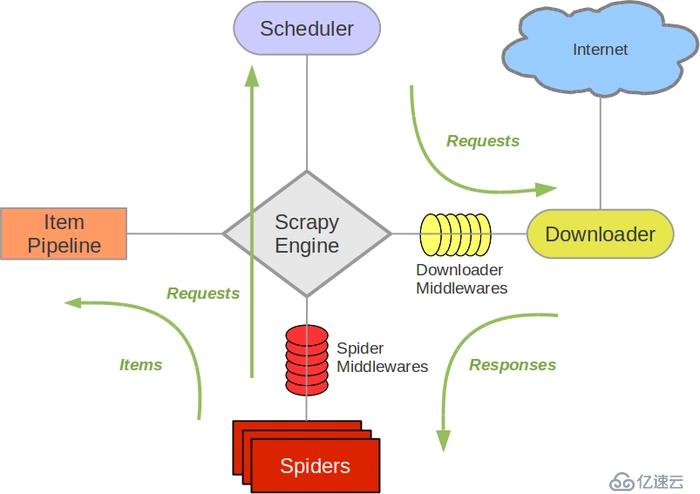

爬蟲概念流程

概念:亦可以稱為網絡蜘蛛或網絡機器人,是一個模擬瀏覽器請求網站行為的程序,可以自動請求網頁,把數據抓取下來,然后使用一定規則提取有價值的數據。

基本流程:

?發起請求-->獲取響應內容-->解析內容-->保存處理

import scrapy

class DoubanItem(scrapy.Item):

#序號

serial_number=scrapy.Field()

#名稱

movie_name=scrapy.Field()

#介紹

introduce=scrapy.Field()

#星級

star=scrapy.Field()

#評論

evalute=scrapy.Field()

#描述

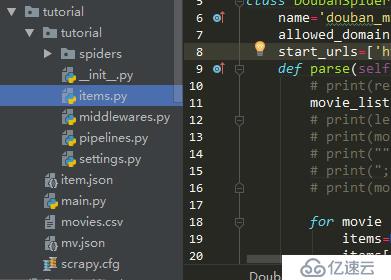

desc=scrapy.Field()3.編寫Spider文件,爬蟲主要是該文件實現

?進到根目錄,執行命令:scrapy genspider spider_name "domains" : spider_name為爬蟲名稱唯一,"domains",指定爬取域范圍,即可創建spider文件,當然這個文件可以自己手動創建。

?進程scrapy.Spider類,里面的方法可以覆蓋。

import scrapy

from ..items import DoubanItem

class DoubanSpider(scrapy.Spider):

name='douban_mv'

allowed_domains=['movie.douban.com']

start_urls=['https://movie.douban.com/top250']

def parse(self, response):

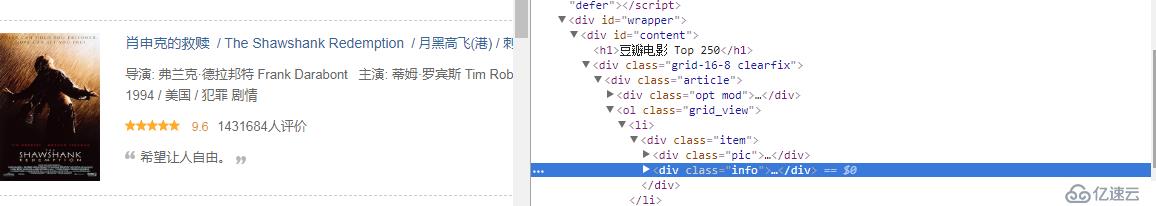

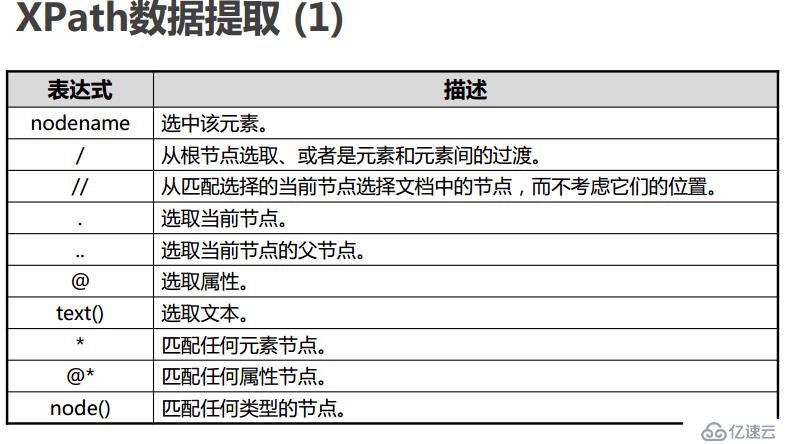

movie_list=response.xpath("http://div[@class='article']//ol[@class='grid_view']//li//div[@class='item']")

for movie in movie_list:

items=DoubanItem()

items['serial_number']=movie.xpath('.//div[@class="pic"]/em/text()').extract_first()

items['movie_name']=movie.xpath('.//div[@class="hd"]/a/span/text()').extract_first()

introduce=movie.xpath('.//div[@class="bd"]/p/text()').extract()

items['introduce']=";".join(introduce).replace(' ','').replace('\n','').strip(';')

items['star']=movie.xpath('.//div[@class="star"]/span[@class="rating_num"]/text()').extract_first()

items['evalute']=movie.xpath('.//div[@class="star"]/span[4]/text()').extract_first()

items['desc']=movie.xpath('.//p[@class="quote"]/span[@class="inq"]/text()').extract_first()

yield items

"next-page"實現翻頁操作

link=response.xpath('//span[@class="next"]/link/@href').extract_first()

if link:

yield response.follow(link,callback=self.parse) 4.編寫pipelines.py文件

?清理html數據、驗證爬蟲數據,去重并丟棄,文件保存csv,json,db.,每個item pipeline組件生效需要在setting中開啟才生效,且要調用process_item(self,item,spider)方法。

?open_spider(self,spider) :當spider被開啟時,這個方法被調用

?close_spider(self, spider) :當spider被關閉時,這個方法被調用

# -*- coding: utf-8 -*-

# Define your item pipelines here

# Don't forget to add your pipeline to the ITEM_PIPELINES setting

# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html

import json

import csv

class TutorialPipeline(object):

def open_spider(self,spider):

pass

def __init__(self):

self.file=open('item.json','w',encoding='utf-8')

def process_item(self, item, spider):

line=json.dumps(dict(item),ensure_ascii=False)+"\n"

self.file.write(line)

return item

class DoubanPipeline(object):

def open_spider(self,spider):

self.csv_file=open('movies.csv','w',encoding='utf8',newline='')

self.writer=csv.writer(self.csv_file)

self.writer.writerow(["serial_number","movie_name","introduce","star","evalute","desc"])

def process_item(self,item,spider):

self.writer.writerow([v for v in item.values()])

def close_spider(self,spider):

self.csv_file.close()5.開始運行爬蟲

?scrapy crawl "spider_name" 即可爬取在terminal中顯示信息,注spider_name為spider文件中name名稱。

?在命令行也可以直接輸出文件并保存,步驟4可以不開啟:

scrapy crawl demoz -o items.json

scrapy crawl itcast -o teachers.csv

scrapy crawl itcast -o teachers.xmlscrapy常用設置

修改配置文件:setting.py

每個pipeline后面有一個數值,這個數組的范圍是0-1000,這個數值確定了他們的運行順序,數字越小越優先

DEPTH_LIMIT :爬取網站最大允許的深度(depth)值。如果為0,則沒有限制

FEED_EXPORT_ENCODING = 'utf-8' 設置編碼

DOWNLOAD_DELAY=1:防止過于頻繁,誤認為爬蟲

USAGE_AGENT:設置代理

LOG_LEVEL = 'INFO':設置日志級別,資源

COOKIES_ENABLED = False:禁止cookie

CONCURRENT_REQUESTS = 32:并發數量

設置請求頭:DEFAULT_REQUEST_HEADERS={...}

免責聲明:本站發布的內容(圖片、視頻和文字)以原創、轉載和分享為主,文章觀點不代表本網站立場,如果涉及侵權請聯系站長郵箱:is@yisu.com進行舉報,并提供相關證據,一經查實,將立刻刪除涉嫌侵權內容。