This article looks at how to handle common problems with the Batch Normalization (BN) layer in PyTorch, in particular how its running statistics behave and how to freeze them correctly.

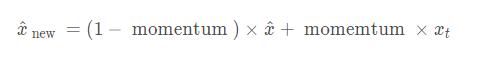

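The momentum smoothing in PyTorch's BN layer works the opposite way from the usual momentum convention: momentum is the weight given to the new batch statistic, not to the accumulated value, so the running estimate is updated as running = (1 - momentum) × running + momentum × batch_stat. The default is momentum=0.1.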

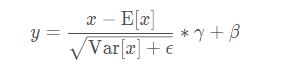
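The two conventions can be compared side by side. The following is a minimal sketch in plain Python; the function names `pytorch_style_update` and `conventional_update` are hypothetical and exist only for this illustration.

```python
def pytorch_style_update(running, observed, momentum=0.1):
    """PyTorch BN convention: momentum weights the NEW observation."""
    return (1.0 - momentum) * running + momentum * observed


def conventional_update(running, observed, momentum=0.9):
    """Common moving-average convention: momentum weights the OLD value."""
    return momentum * running + (1.0 - momentum) * observed


running_a = running_b = 0.0
for batch_mean in [1.0, 1.0, 1.0]:
    running_a = pytorch_style_update(running_a, batch_mean, momentum=0.1)
    running_b = conventional_update(running_b, batch_mean, momentum=0.9)

# Up to floating-point rounding, both values agree: PyTorch's momentum=0.1
# plays the role of a conventional momentum of 0.9.
print(running_a, running_b)
```

In other words, a small momentum in PyTorch means slow-moving statistics, whereas in the conventional form a small momentum means fast-moving ones.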

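The expression computed by the BN layer is:

$$y = \frac{x - \mathrm{E}[x]}{\sqrt{\mathrm{Var}[x] + \epsilon}} \cdot \gamma + \beta$$

where γ and β are learnable parameters. In PyTorch, the BN layer class takes these parameters: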

CLASS torch.nn.BatchNorm2d(num_features, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

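See the documentation for the meaning of each parameter. Note that affine determines whether the BN layer's γ and β are learnable. With affine=False they are not learned and act as the constants 1 and 0.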
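As a quick check of the standard BN expression y = (x - E[x]) / sqrt(Var[x] + eps) · γ + β, the sketch below recomputes the output of `nn.BatchNorm2d` by hand in training mode and then inspects a layer built with affine=False. The tensor shape and seed are arbitrary choices for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(4, 3, 5, 5)  # (N, C, H, W)

bn = nn.BatchNorm2d(3)  # affine=True: gamma starts at 1, beta at 0
bn.train()
out = bn(x)

# Per-channel statistics over the N, H and W dimensions. BN normalizes
# with the biased variance, hence unbiased=False.
mean = x.mean(dim=(0, 2, 3), keepdim=True)
var = x.var(dim=(0, 2, 3), unbiased=False, keepdim=True)
gamma = bn.weight.view(1, -1, 1, 1)
beta = bn.bias.view(1, -1, 1, 1)
manual = (x - mean) / torch.sqrt(var + bn.eps) * gamma + beta

print(torch.allclose(out, manual, atol=1e-5))

# With affine=False the module holds no gamma/beta parameters at all.
bn_plain = nn.BatchNorm2d(3, affine=False)
print(bn_plain.weight, bn_plain.bias)
```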

track_running_stats – a boolean value that when set to True, this module tracks the running mean and variance, and when set to False, this module does not track such statistics and always uses batch statistics in both training and eval modes. Default: True

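During training, after model.train(), the BN statistics (mean and variance) are estimated from the current batch.

At test time, after model.eval(), if track_running_stats=True the model uses the running statistics, that is, the values accumulated so far by exponential decay. Otherwise it still uses estimates computed from the current batch.

The running statistics are updated automatically inside forward() whenever the module is in training mode. They are not updated during gradient computation and backpropagation, nor in the optimizer's step().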
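The difference in eval mode can be seen with two otherwise identical layers. This is a small sketch with made-up data; the variable names `tracked` and `untracked` are illustrative only.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(16, 4) * 3.0 + 5.0  # batch with mean near 5 and std near 3

tracked = nn.BatchNorm1d(4, track_running_stats=True)
untracked = nn.BatchNorm1d(4, track_running_stats=False)

tracked.eval()
untracked.eval()
with torch.no_grad():
    # Uses the running stats, which are still at their initial values
    # (mean 0, variance 1), so the output is almost the raw input.
    y_tracked = tracked(x)
    # Has no running stats, so it normalizes with this batch's statistics.
    y_untracked = untracked(x)

print(y_tracked.mean().item())    # stays near the raw data mean
print(y_untracked.mean().item())  # close to zero
print(untracked.running_mean)     # no running buffer exists
```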
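That the update happens in forward() can be checked with no optimizer and no gradients at all. The following sketch assumes the default momentum=0.1 and a freshly initialized layer whose running mean starts at 0.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
bn = nn.BatchNorm1d(2)  # momentum=0.1 by default
x = torch.randn(8, 2) + 3.0

bn.train()
with torch.no_grad():  # no gradients, no optimizer: only a forward pass
    bn(x)

# running_mean started at 0, so one step gives 0.9 * 0 + 0.1 * batch_mean.
expected = 0.1 * x.mean(dim=0)
print(torch.allclose(bn.running_mean, expected, atol=1e-6))

bn.eval()
before = bn.running_mean.clone()
with torch.no_grad():
    bn(x + 100.0)  # an eval-mode forward leaves the running stats untouched
print(torch.equal(bn.running_mean, before))
```

This is why setting requires_grad=False on the BN weights, or leaving them out of the optimizer, is not enough to freeze the running mean and variance.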

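From the analysis above, the correct way to freeze BN is to pick out the BN layers during training and set them back to eval mode, overriding the training state after model.train() has been called.

Solution:

Use apply() rather than iterating over named_children(), because named_children() yields only direct children and does not recurse into submodules.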
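To see why the distinction matters, here is a small sketch with a hypothetical toy model in which the BN layer sits inside a nested block.

```python
import torch.nn as nn

# Hypothetical toy model: the BN layer is nested one level down.
model = nn.Sequential(
    nn.Sequential(nn.Conv2d(3, 8, 3), nn.BatchNorm2d(8), nn.ReLU()),
    nn.Conv2d(8, 8, 3),
)

# named_children() yields only the two top-level entries, so BN is missed.
direct = [type(m).__name__ for _, m in model.named_children()]

# apply() visits every submodule (and the model itself), so BN is found.
visited = []
model.apply(lambda m: visited.append(type(m).__name__))

print(direct)
print(visited)
```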

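    def set_bn_eval(m):
        # Match every BatchNorm variant by class name and switch it to eval mode.
        classname = m.__class__.__name__
        if classname.find('BatchNorm') != -1:
            m.eval()

    # Call this after model.train(), which would otherwise reset the BN layers.
    model.apply(set_bn_eval)

Alternatively, override the train() method of the module: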

    def train(self, mode=True):
        """
        Override the default train() to freeze the BN parameters
        """
        super(MyNet, self).train(mode)
        if self.freeze_bn:
            print("Freezing Mean/Var of BatchNorm2D.")
            if self.freeze_bn_affine:
                print("Freezing Weight/Bias of BatchNorm2D.")
        if self.freeze_bn:
            for m in self.backbone.modules():
                if isinstance(m, nn.BatchNorm2d):
                    m.eval()
                    if self.freeze_bn_affine:
                        m.weight.requires_grad = False
                        m.bias.requires_grad = False
        return self  # nn.Module.train() returns self, so keep call chaining working
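For a version of the train() override that can be run end to end, the sketch below wraps it in a hypothetical minimal `MyNet`. The layer sizes, the `head` module, and the constructor arguments are assumptions made for illustration; only the override itself mirrors the method above.

```python
import torch
import torch.nn as nn


class MyNet(nn.Module):
    """Hypothetical minimal network used only to exercise the override."""

    def __init__(self, freeze_bn=True, freeze_bn_affine=True):
        super().__init__()
        self.freeze_bn = freeze_bn
        self.freeze_bn_affine = freeze_bn_affine
        self.backbone = nn.Sequential(
            nn.Conv2d(3, 4, kernel_size=3, padding=1),
            nn.BatchNorm2d(4),
            nn.ReLU(),
        )
        self.head = nn.Conv2d(4, 1, kernel_size=1)

    def forward(self, x):
        return self.head(self.backbone(x))

    def train(self, mode=True):
        super().train(mode)
        if self.freeze_bn:
            for m in self.backbone.modules():
                if isinstance(m, nn.BatchNorm2d):
                    m.eval()  # keep using, and stop updating, running stats
                    if self.freeze_bn_affine:
                        m.weight.requires_grad = False
                        m.bias.requires_grad = False
        return self


torch.manual_seed(0)
net = MyNet()
net.train()
bn = net.backbone[1]
stats_before = bn.running_mean.clone()

out = net(torch.randn(2, 3, 8, 8) + 5.0)
out.mean().backward()

print(net.training, bn.training)  # the model trains while BN stays in eval
print(torch.equal(bn.running_mean, stats_before))
print(bn.weight.grad is None, net.head.weight.grad is not None)
```

Because the freeze lives inside train(), it is reapplied every time the training loop switches back with net.train(), so there is no separate apply() call to forget.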

The same freezing approach needs one adjustment under half-precision training with apex's network_to_half. Consider this setup:

    import torch
    from torchvision import models
    from apex.fp16_utils import network_to_half

    def fix_bn(m):
        classname = m.__class__.__name__
        if classname.find('BatchNorm') != -1:
            m.eval()

    model = models.resnet50(pretrained=True)
    model.cuda()
    model = network_to_half(model)
    model.train()
    model.apply(fix_bn)  # fix batchnorm

    # Random fp16 input on the GPU (replaces the deprecated Variable wrapper).
    inputs = torch.randn(8, 3, 224, 224).cuda().half()
    output = model(inputs)
    output_mean = torch.mean(output)
    output_mean.backward()

In this case, the BN layers should also be converted to half:

    def fix_bn(m):
        classname = m.__class__.__name__
        if classname.find('BatchNorm') != -1:
            m.eval().half()

The reason is that for regular training it is better, performance-wise, to use the cuDNN batch norm, which requires its weights to be in fp32, so network_to_half does not convert batch norm modules to half. However, cuDNN does not support batch norm backward in eval mode, which is what this code does, and to use the PyTorch implementation instead, the weights have to be of the same type as the inputs.
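The dtype side of this fix can be inspected without apex or a GPU. In the sketch below, `to_half_except_bn` is a hypothetical stand-in that only mimics the behavior described above (everything in fp16 except the BN modules); it is not apex's implementation, and the sketch does not reproduce the cuDNN backward issue itself.

```python
import torch
import torch.nn as nn


def to_half_except_bn(model):
    """Hypothetical stand-in: cast the model to fp16 but keep BN in fp32."""
    model.half()
    for m in model.modules():
        if isinstance(m, nn.modules.batchnorm._BatchNorm):
            m.float()
    return model


def fix_bn(m):
    if m.__class__.__name__.find('BatchNorm') != -1:
        m.eval().half()  # freeze BN and match the fp16 inputs


model = to_half_except_bn(nn.Sequential(nn.Conv2d(3, 4, 3), nn.BatchNorm2d(4)))
conv, bn = model[0], model[1]
print(conv.weight.dtype, bn.weight.dtype)  # BN is still fp32 at this point

model.train()
model.apply(fix_bn)
print(bn.training, bn.weight.dtype, bn.running_mean.dtype)
```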

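Supplement: things to watch out for when using dropout and Batch Normalization in PyTorch and in TensorFlow

Dropout in PyTorch:

Dropout is applied during training and is not applied during testing.

In PyTorch, net.eval() puts the whole network into evaluation mode: statistics that are normally updated in the forward pass stop being updated, dropout is disabled, and the BN statistics are fixed. In principle, net.eval() should be used for every pass over a validation set.

net.train() switches the network back to training mode, re-enabling dropout and the BN statistics updates. Neither call turns gradient computation on or off; that is controlled separately by torch.no_grad() and requires_grad.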

    net_dropped = torch.nn.Sequential(
        torch.nn.Linear(1, N_HIDDEN),
        torch.nn.Dropout(0.5),  # drop 50% of the neuron
        torch.nn.ReLU(),
        torch.nn.Linear(N_HIDDEN, N_HIDDEN),
        torch.nn.Dropout(0.5),  # drop 50% of the neuron
        torch.nn.ReLU(),
        torch.nn.Linear(N_HIDDEN, 1),
    )

    for t in range(500):
        pred_drop = net_dropped(x)
        loss_drop = loss_func(pred_drop, y)
        optimizer_drop.zero_grad()
        loss_drop.backward()
        optimizer_drop.step()

        if t % 10 == 0:
            # change to eval mode in order to fix drop out effect
            net_dropped.eval()  # parameters for dropout differ from train mode
            test_pred_drop = net_dropped(test_x)
            # change back to train mode
            net_dropped.train()
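To make the train/eval difference concrete, the following self-contained sketch applies a single `nn.Dropout` layer to a vector of ones in both modes, and then shows that eval() by itself leaves autograd enabled. The input size and seed are arbitrary.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
drop = nn.Dropout(0.5)
x = torch.ones(1000)

drop.train()
y_train = drop(x)  # roughly half the entries zeroed, survivors scaled by 1 / (1 - p)

drop.eval()
y_eval = drop(x)   # dropout is the identity in eval mode

print(sorted(y_train.unique().tolist()))
print(torch.equal(y_eval, x))

# eval() does not disable autograd; torch.no_grad() does.
w = torch.ones(3, requires_grad=True)
tracked = (drop(w) * 2).requires_grad
with torch.no_grad():
    untracked = (drop(w) * 2).requires_grad
print(tracked, untracked)
```

For validation it is therefore common to combine both: net.eval() for the layer behavior and torch.no_grad() to avoid building the autograd graph.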

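net.eval() also fixes the BN running statistics, moving_mean and moving_var (running_mean and running_var in PyTorch). The excerpts below show where the BN layers are created and where the mode is switched around evaluation:

    # Excerpt from the network constructor
    if self.do_bn:
        bn = nn.BatchNorm1d(10, momentum=0.5)
        setattr(self, 'bn%i' % i, bn)  # IMPORTANT set layer to the Module
        self.bns.append(bn)

    # Excerpt from the training loop
    for epoch in range(EPOCH):
        print('Epoch: ', epoch)
        for net, l in zip(nets, losses):
            net.eval()  # set eval mode to fix moving_mean and moving_var
            pred, layer_input, pre_act = net(test_x)
            net.train()  # free moving_mean and moving_var
        plot_histogram(*layer_inputs, *pre_acts)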

In TensorFlow, both dropout and BN take a training argument that says whether the graph is being run for training or for testing. In test mode, dropout drops nothing and BN keeps its statistics fixed.

    tf_is_training = tf.placeholder(tf.bool, None)  # to control dropout when training and testing

    # dropout net
    d1 = tf.layers.dense(tf_x, N_HIDDEN, tf.nn.relu)
    d1 = tf.layers.dropout(d1, rate=0.5, training=tf_is_training)  # drop out 50% of inputs
    d2 = tf.layers.dense(d1, N_HIDDEN, tf.nn.relu)
    d2 = tf.layers.dropout(d2, rate=0.5, training=tf_is_training)  # drop out 50% of inputs
    d_out = tf.layers.dense(d2, 1)

    for t in range(500):
        sess.run([o_train, d_train], {tf_x: x, tf_y: y, tf_is_training: True})  # train, set is_training=True

        if t % 10 == 0:
            # plotting
            plt.cla()
            o_loss_, d_loss_, o_out_, d_out_ = sess.run(
                [o_loss, d_loss, o_out, d_out],
                {tf_x: test_x, tf_y: test_y, tf_is_training: False}  # test, set is_training=False
            )

The corresponding BN layer in TensorFlow:

    def add_layer(self, x, out_size, ac=None):
        x = tf.layers.dense(x, out_size, kernel_initializer=self.w_init, bias_initializer=B_INIT)
        self.pre_activation.append(x)
        # the momentum plays an important role. the default 0.99 is too high in this case!
        if self.is_bn:
            x = tf.layers.batch_normalization(x, momentum=0.4, training=tf_is_train)  # when have BN
        out = x if ac is None else ac(x)
        return out

Setting BN's training argument to True only means that the BN statistics are allowed to change. It does not mean that BN updates moving_mean and moving_var on its own, because that update is a separate op that runs in the forward pass. Before each training step you must make sure moving_mean and moving_var have been updated; the ops that update them are collected in tf.GraphKeys.UPDATE_OPS.

    # !! IMPORTANT !! the moving_mean and moving_variance need to be updated,
    # pass the update_ops with control_dependencies to the train_op
    update_ops = tf.get_collection(tf.GraphKeys.UPDATE_OPS)
    with tf.control_dependencies(update_ops):
        self.train = tf.train.AdamOptimizer(LR).minimize(self.loss)
