Linear Regression in Practice

Defining a linear regression model with PyTorch generally takes the following steps:

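1. Design the network architecture.

2. Build the loss function (loss) and the optimizer (optimizer).

3. Train: forward pass (forward), backpropagation (backward), and parameter update (update).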
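The three steps above can be packaged into a small reusable function. This is a minimal sketch: the helper name `train` and its parameters are hypothetical and not part of PyTorch. Here a bare `torch.nn.Linear` stands in for the model class.

```python
import torch


def train(model, criterion, optimizer, x, y, epochs):
    """Hypothetical helper: run the forward / backward / update loop.

    Returns the list of loss values, one per epoch.
    """
    losses = []
    for _ in range(epochs):
        y_pred = model(x)              # forward pass
        loss = criterion(y_pred, y)    # compute the loss
        optimizer.zero_grad()          # clear gradients left by the previous step
        loss.backward()                # backward pass
        optimizer.step()               # update the parameters
        losses.append(loss.item())
    return losses


# Usage on the y = 2x data used in this article
torch.manual_seed(0)
x = torch.tensor([[1.0], [2.0], [3.0]])
y = torch.tensor([[2.0], [4.0], [6.0]])
model = torch.nn.Linear(1, 1)                              # step 1: architecture
criterion = torch.nn.MSELoss(reduction='sum')              # step 2: loss ...
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)   # ... and optimizer
losses = train(model, criterion, optimizer, x, y, epochs=50)  # step 3: training
print(losses[0], losses[-1])
```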

```python
# author: yuquanle
# date: 2018.2.5
# Study of LinearRegression using PyTorch

import torch

# Training data: y = 2x
x_data = torch.tensor([[1.0], [2.0], [3.0]])
y_data = torch.tensor([[2.0], [4.0], [6.0]])


class Model(torch.nn.Module):
    def __init__(self):
        super(Model, self).__init__()
        self.linear = torch.nn.Linear(1, 1)  # one input, one output

    def forward(self, x):
        y_pred = self.linear(x)
        return y_pred


# Our model
model = Model()

# Loss function: sum of squared errors over the batch
criterion = torch.nn.MSELoss(reduction='sum')
# Optimizer: stochastic gradient descent
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

# Training loop: forward, loss, backward, step
for epoch in range(50):
    # Forward pass
    y_pred = model(x_data)

    # Compute loss and print it as a Python float
    loss = criterion(y_pred, y_data)
    print(epoch, loss.item())

    # Zero the gradients accumulated in the previous iteration
    optimizer.zero_grad()
    # Backward pass: compute gradients of the loss w.r.t. the parameters
    loss.backward()
    # Update weights
    optimizer.step()

# After training
hour_var = torch.tensor([[4.0]])
print("predict (after training)", 4, model(hour_var).item())
```

Output with the number of iterations set to 10:

```
0 123.87958526611328
1 55.19491195678711
2 24.61777114868164
3 11.005026817321777
4 4.944361686706543
5 2.2456750869750977
6 1.0436556339263916
7 0.5079189538955688
8 0.2688019871711731
9 0.16174012422561646
predict (after training) 4 7.487752914428711
```

The loss is still decreasing, so the prediction for an input of 4 is not yet very accurate (the exact answer for y = 2x is 8).
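For reference, this toy problem has an exact answer. The sketch below uses NumPy's least-squares solver, which is not part of the original article. It recovers w = 2 and b = 0, so the ideal prediction for an input of 4 is 8:

```python
import numpy as np

# Same training data as above: y = 2x
x = np.array([1.0, 2.0, 3.0])
y = np.array([2.0, 4.0, 6.0])

# Design matrix with a column of ones for the bias term b
A = np.stack([x, np.ones_like(x)], axis=1)
(w, b), *_ = np.linalg.lstsq(A, y, rcond=None)

print(w, b)       # close to 2.0 and 0.0
print(w * 4 + b)  # close to 8.0
```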

When the number of iterations is set to 50:

```
0 35.38422393798828
5 0.6207122802734375
10 0.012768605723977089
15 0.0020055510103702545
20 0.0016929294215515256
25 0.0015717096393927932
30 0.0014619173016399145
35 0.0013598509831354022
40 0.0012649153359234333
45 0.00117658288218081
50 0.001094428705982864
predict (after training) 4 8.038028717041016
```

At this point the function is fitted quite well.

Running it again:

```
0 159.48605346679688
5 2.827991485595703
10 0.08624256402254105
15 0.03573693335056305
20 0.032463930547237396
25 0.030183646827936172
30 0.02807590737938881
35 0.026115568354725838
40 0.02429218217730522
45 0.022596003487706184
50 0.0210183784365654
predict (after training) 4 7.833342552185059
```

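Both runs used 50 iterations, yet they give different predictions for an input of 4. The cause is how the model is defined in PyTorch.

`torch.nn.Linear(1, 1)` only needs the input and output dimensions, and its weight and bias are initialized randomly. According to the PyTorch documentation, both are drawn from a uniform distribution U(-sqrt(k), sqrt(k)) with k = 1/in_features.

Each run therefore starts from a different point. After a fixed 50 iterations the loss has fallen to a different value and the parameters have been updated to different values, so the results differ.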
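If you want repeated runs to give the same result, fix the random seed before building the model. The sketch below assumes you only need reproducibility on the CPU. It also checks that the initial parameters fall inside the documented U(-sqrt(k), sqrt(k)) range; the helper `make_linear` is hypothetical.

```python
import math
import torch


def make_linear(seed):
    # Seeding the global RNG makes nn.Linear's random initialization repeatable
    torch.manual_seed(seed)
    return torch.nn.Linear(1, 1)


a = make_linear(42)
b = make_linear(42)
c = make_linear(7)

# Same seed -> identical initial parameters
same = torch.equal(a.weight, b.weight) and torch.equal(a.bias, b.bias)
# Different seed -> different initial weight
different = not torch.equal(a.weight, c.weight)

# Initial values lie in [-sqrt(k), sqrt(k)] with k = 1 / in_features
bound = math.sqrt(1.0 / a.in_features)
in_range = bool((a.weight.abs() <= bound).all()) and bool((a.bias.abs() <= bound).all())

print(same, different, in_range)
```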

Logistic Regression in Practice

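Linear regression solves regression problems. Logistic regression is very similar, but it solves classification problems, usually binary classification (0 or 1). It can also handle multi-class problems, which can be implemented with softmax.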

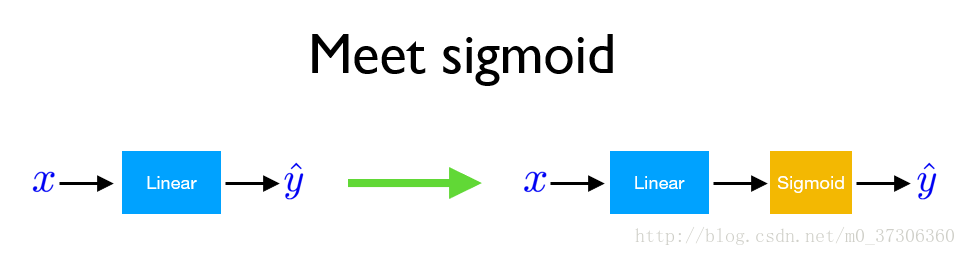

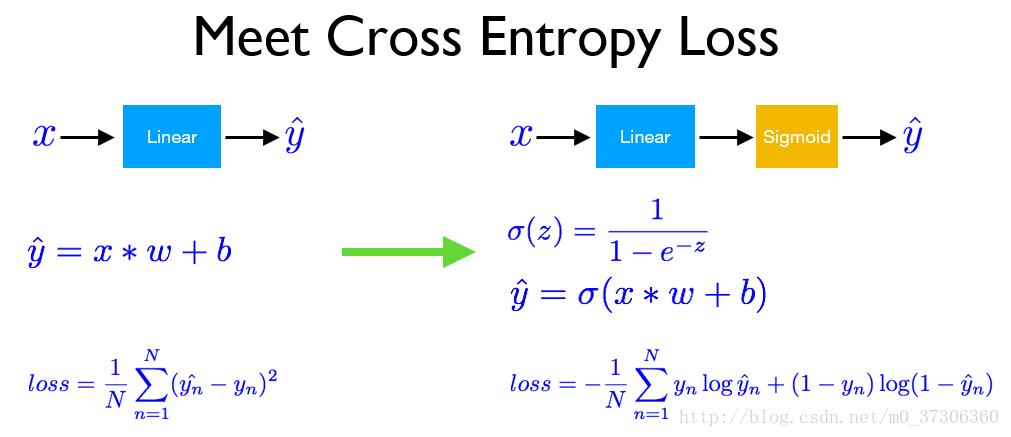

Implementing logistic regression in PyTorch follows essentially the same process as linear regression, with the following differences:

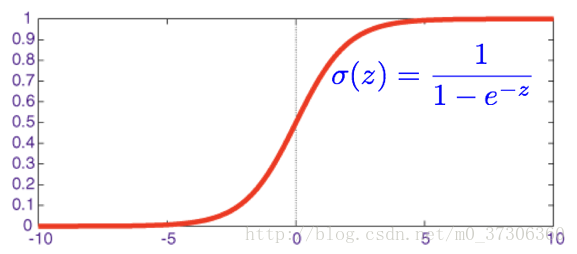

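The sigmoid function is σ(z) = 1 / (1 + e^(-z)). It squashes any real number into the interval (0, 1), so the model's output can be read as the probability that y = 1.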

In logistic regression, we predict y = 1 when the output is greater than 0.5, and y = 0 otherwise.
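Here is a small sketch of the sigmoid and the 0.5 decision rule. It compares `torch.sigmoid` with the formula written out by hand; the input values are arbitrary.

```python
import torch

z = torch.tensor([-2.0, 0.0, 3.0])

manual = 1.0 / (1.0 + torch.exp(-z))  # sigma(z) = 1 / (1 + e^(-z))
builtin = torch.sigmoid(z)

# Predict class 1 when the probability is above 0.5, otherwise class 0
labels = (builtin > 0.5).long()

print(builtin)  # sigma(0) is exactly 0.5
print(labels)   # tensor([0, 0, 1])
```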

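The loss function is usually the binary cross-entropy loss, averaged over the N samples: loss = -(1/N) Σ [y·log(ŷ) + (1 - y)·log(1 - ŷ)]. Here ŷ is the predicted probability and y is the 0/1 label.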
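To make the cross-entropy formula concrete, here is a minimal check that `torch.nn.BCELoss` with `reduction='mean'` matches the formula computed by hand. The probabilities are made-up values. Note the argument order: predictions first, labels second.

```python
import torch

y_pred = torch.tensor([[0.1], [0.3], [0.8], [0.9]])  # predicted P(y = 1)
y_true = torch.tensor([[0.], [0.], [1.], [1.]])      # 0/1 labels

# loss = -(1/N) * sum(y * log(y_hat) + (1 - y) * log(1 - y_hat))
manual = -(y_true * torch.log(y_pred)
           + (1 - y_true) * torch.log(1 - y_pred)).mean()

builtin = torch.nn.BCELoss(reduction='mean')(y_pred, y_true)

print(manual.item(), builtin.item())
```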

```python
# date: 2018.2.6
# LogisticRegression

import torch

x_data = torch.tensor([[0.6], [1.0], [3.5], [4.0]])
y_data = torch.tensor([[0.], [0.], [1.], [1.]])


class Model(torch.nn.Module):
    def __init__(self):
        super(Model, self).__init__()
        self.linear = torch.nn.Linear(1, 1)  # one input, one output
        self.sigmoid = torch.nn.Sigmoid()

    def forward(self, x):
        # Squash the linear output into (0, 1) so it reads as P(y = 1)
        y_pred = self.sigmoid(self.linear(x))
        return y_pred


# Our model
model = Model()

# Construct loss function (mean binary cross-entropy) and optimizer
criterion = torch.nn.BCELoss(reduction='mean')
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

# Training loop
for epoch in range(500):
    # Forward pass
    y_pred = model(x_data)

    # Compute loss
    loss = criterion(y_pred, y_data)
    if epoch % 20 == 0:
        print(epoch, loss.item())

    # Zero gradients
    optimizer.zero_grad()
    # Backward pass
    loss.backward()
    # Update weights
    optimizer.step()

# After training
hour_var = torch.tensor([[0.5]])
print("predict (after training)", 0.5, model(hour_var).item())
hour_var = torch.tensor([[7.0]])
print("predict (after training)", 7.0, model(hour_var).item())
```

Output:

```
0 0.9983477592468262
20 0.850886881351471
40 0.7772406339645386
60 0.7362991571426392
80 0.7096697092056274
100 0.6896909475326538
120 0.6730546355247498
140 0.658246636390686
160 0.644534170627594
180 0.6315458416938782
200 0.6190851330757141
220 0.607043981552124
240 0.5953611731529236
260 0.5840001106262207
280 0.5729377269744873
300 0.5621585845947266
320 0.5516515970230103
340 0.5414079427719116
360 0.5314203500747681
380 0.5216821432113647
400 0.512187123298645
420 0.5029295086860657
440 0.49390339851379395
460 0.4851033389568329
480 0.47652381658554077
predict (after training) 0.5 0.49599987268447876
predict (after training) 7.0 0.9687209129333496
```

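After training, the new input 0.5 gives an output below 0.5, so it is assigned to class 0. The input 7 gives an output above 0.5, so it is assigned to class 1. When softmax is used for multi-class classification, the sample is assigned to the class whose output dimension has the largest value.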
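For the multi-class case, here is a sketch of the "largest value wins" rule. `torch.softmax` turns raw scores into probabilities that sum to 1, and `argmax` picks the index of the largest one. The scores below are arbitrary example values for two samples and three classes.

```python
import torch

# Raw scores (logits): 2 samples, 3 classes
logits = torch.tensor([[2.0, 0.5, -1.0],
                       [0.1, 0.2, 3.0]])

probs = torch.softmax(logits, dim=1)   # each row sums to 1
pred = probs.argmax(dim=1)             # index of the largest probability

print(probs)
print(pred)  # tensor([0, 2])
```

Because softmax preserves the ordering of the scores, taking `argmax` directly on `logits` would give the same classes. Softmax is needed mainly when you want the probabilities themselves.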

Deeper and Wider Networks

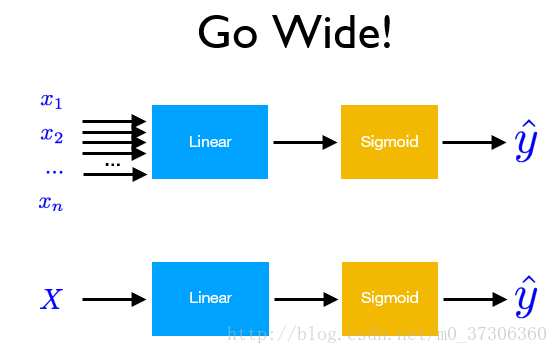

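The previous examples were all shallow networks with one-dimensional input. PyTorch also handles deeper and wider networks well. Taking logistic regression as an example: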

When the input x has many dimensions, a wider network is needed.
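A wider network here means the first `Linear` layer accepts more input features. In this minimal sketch, the class name `WideModel`, the input dimension of 100, and the batch size of 5 are hypothetical values chosen for illustration.

```python
import torch


class WideModel(torch.nn.Module):
    """Hypothetical logistic-regression model for a high-dimensional input."""

    def __init__(self, in_features):
        super().__init__()
        self.linear = torch.nn.Linear(in_features, 1)  # many inputs, one output
        self.sigmoid = torch.nn.Sigmoid()

    def forward(self, x):
        return self.sigmoid(self.linear(x))


model = WideModel(in_features=100)
x = torch.randn(5, 100)   # 5 samples, 100 features each
y_pred = model(x)
print(y_pred.shape)       # torch.Size([5, 1])
```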

A deeper network stacks more layers.
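A deeper network stacks several `Linear` layers with a non-linearity between them. The same 8 → 6 → 4 → 1 layout used in the diabetes example can also be written compactly with `torch.nn.Sequential`. This is an alternative formulation, not the original author's code.

```python
import torch

# 8 input features -> 6 -> 4 -> 1 output probability
model = torch.nn.Sequential(
    torch.nn.Linear(8, 6),
    torch.nn.Sigmoid(),
    torch.nn.Linear(6, 4),
    torch.nn.Sigmoid(),
    torch.nn.Linear(4, 1),
    torch.nn.Sigmoid(),
)

x = torch.randn(3, 8)   # 3 hypothetical samples with 8 features each
y_pred = model(x)
print(y_pred.shape)     # torch.Size([3, 1])

# Trainable parameters: (8*6 + 6) + (6*4 + 4) + (4*1 + 1) = 87
n_params = sum(p.numel() for p in model.parameters())
print(n_params)
```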

The following dataset is used (download: https://github.com/hunkim/PyTorchZeroToAll/tree/master/data).

The input dimension is eight.

```python
# author: yuquanle
# date: 2018.2.7
# Deep and Wide

import numpy as np
import torch

xy = np.loadtxt('./data/diabetes.csv', delimiter=',', dtype=np.float32)
x_data = torch.from_numpy(xy[:, 0:-1])   # first 8 columns: features
y_data = torch.from_numpy(xy[:, [-1]])   # last column, kept as an (N, 1) label column

# print(x_data.shape)
# print(y_data.shape)


class Model(torch.nn.Module):
    def __init__(self):
        super(Model, self).__init__()
        self.l1 = torch.nn.Linear(8, 6)
        self.l2 = torch.nn.Linear(6, 4)
        self.l3 = torch.nn.Linear(4, 1)
        self.sigmoid = torch.nn.Sigmoid()

    def forward(self, x):
        x = self.sigmoid(self.l1(x))
        x = self.sigmoid(self.l2(x))
        y_pred = self.sigmoid(self.l3(x))
        return y_pred


# Our model
model = Model()
criterion = torch.nn.BCELoss(reduction='mean')
optimizer = torch.optim.SGD(model.parameters(), lr=0.001)

hour_var = torch.tensor([[-0.294118, 0.487437, 0.180328, -0.292929,
                          0, 0.00149028, -0.53117, -0.0333333]])
print("(Before training)", model(hour_var).item())

# Training loop
for epoch in range(1000):
    y_pred = model(x_data)
    # Do not swap y_pred and y_data: the cross-entropy loss expects the
    # predicted probabilities first and the 0/1 labels second
    loss = criterion(y_pred, y_data)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    if epoch % 50 == 0:
        print(epoch, loss.item())

# After training
print("predict (after training)", model(hour_var).item())
```
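Why use `xy[:, [-1]]` rather than `xy[:, -1]` for the labels? Indexing with a list keeps the result two-dimensional, which matches the (N, 1) shape of `y_pred`. Here is a small sketch on a made-up 3 × 9 array, with 8 features plus 1 label per row as in the diabetes data.

```python
import numpy as np

xy = np.arange(27, dtype=np.float32).reshape(3, 9)  # made-up data

features = xy[:, 0:-1]    # all columns except the last
labels_2d = xy[:, [-1]]   # last column, kept as a column vector
labels_1d = xy[:, -1]     # last column, flattened to 1-D

print(features.shape)   # (3, 8)
print(labels_2d.shape)  # (3, 1)
print(labels_1d.shape)  # (3,)
```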

Results:

```
(Before training) 0.5091859698295593
0 0.6876295208930969
50 0.6857835650444031
100 0.6840178370475769
150 0.6823290586471558
200 0.6807141900062561
250 0.6791688203811646
300 0.6776910424232483
350 0.6762782335281372
400 0.6749269366264343
450 0.6736343502998352
500 0.6723981499671936
550 0.6712161302566528
600 0.6700847744941711
650 0.6690039038658142
700 0.667969822883606
750 0.666980504989624
800 0.6660353541374207
850 0.6651310324668884
900 0.664265513420105
950 0.6634389758110046
predict (after training) 0.5618339776992798
```

References:

1.https://github.com/hunkim/PyTorchZeroToAll

2.http://pytorch.org/tutorials/beginner/deep_learning_60min_blitz.html
