This article explains how CoordConv adds coordinate information to convolution and walks through a simple implementation.

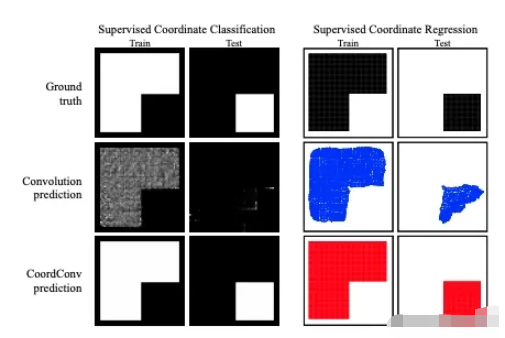

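This is an "archaeology" paper-reproduction project. When the Uber team proposed the CoordConv module in 2018, many articles criticized it as not being worth a paper. Looking at the idea again today, and comparing it with the position encoding used in Transformers, you may feel that history moves in circles: adding two coordinate channels to a convolution in CoordConv follows the same reasoning as position encoding in a Transformer.

As is well known, convolution in deep learning is translation equivariant, which lets the same kernel parameters be shared across every position in an image. The cost is that the convolution cannot perceive where the current feature sits in the image; the experiment from the paper is shown in the figure below. With it, the authors show that a conventional convolution, whose kernel operates locally, perceives only local information and no position information. CoordConv adds channels to the convolution's input feature map that encode the coordinates of each pixel, so that the convolution can perceive position to some extent while learning, which improves detection accuracy.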

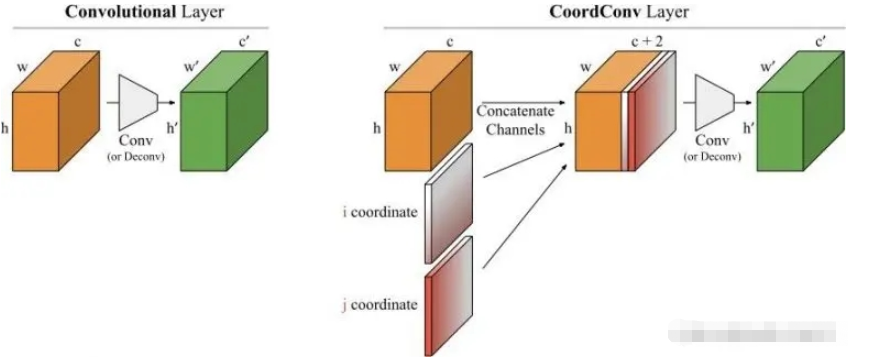
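The equivariance argument above can be checked on a toy example. The following is a minimal sketch in plain Python rather than Paddle, and it is not taken from the paper: the signal, the kernel, and the helper names `correlate1d` and `shift_right` are all made up for illustration. It shows that shifting the input only shifts the filter response, so the response values themselves carry no information about where the pattern is.

```python
def correlate1d(signal, kernel):
    """Valid-mode 1D cross-correlation, the operation a conv layer computes."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def shift_right(signal, n):
    """Shift a list n steps to the right, padding with zeros on the left."""
    return [0] * n + signal[: len(signal) - n]


# Hypothetical data: a small bump and an edge-like filter.
kernel = [1, 0, -1]
signal = [0, 0, 3, 5, 3, 0, 0, 0, 0, 0, 0, 0]
shifted = shift_right(signal, 4)  # the same bump, moved 4 steps to the right

out = correlate1d(signal, kernel)
out_shifted = correlate1d(shifted, kernel)

# Equivariance: shifting the input shifts the response by the same amount.
assert out_shifted == shift_right(out, 4)

# The multiset of response values is identical, so nothing in the values
# tells the filter (or later layers) where the bump was.
assert sorted(out) == sorted(out_shifted)
```

This is the gap CoordConv targets: once coordinate channels are part of the input, the same filter can produce position-dependent responses.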

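A conventional convolution cannot convert a spatial representation between coordinates in Cartesian space and coordinates in one-hot pixel space. Convolution is equivariant: when a filter is applied to the input, it does not know where it is. We can help the convolution by telling it where each filter is located. This is done by adding two channels to the input, one holding the i coordinate and the other holding the j coordinate. Adding coordinates in this way gives a new convolution structure, CoordConv, whose structure is shown in the figure below: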

This section reproduces CoordConv based on the CoordConv paper, with reference to the official PaddlePaddle implementation.

    import paddle
    import paddle.nn as nn
    import paddle.nn.functional as F
    from paddle import ParamAttr
    from paddle.regularizer import L2Decay
    from paddle.nn import AvgPool2D, Conv2D

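First, inherit from the nn.Layer base class. Next, use paddle.arange to define the two coordinates gx and gy, and stop gradients from propagating back through them (gx.stop_gradient = True). Finally, concat them with the input and feed the result into the convolution.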

    class CoordConv(nn.Layer):
        def __init__(self, in_channels, out_channels, kernel_size, stride, padding):
            super(CoordConv, self).__init__()
            # Two extra input channels hold the x and y coordinates.
            self.conv = Conv2D(
                in_channels + 2, out_channels, kernel_size, stride, padding)

        def forward(self, x):
            b = x.shape[0]
            h = x.shape[2]
            w = x.shape[3]

            # x coordinate, normalized to [-1, 1] along the width axis.
            gx = paddle.arange(w, dtype='float32') / (w - 1.) * 2.0 - 1.
            gx = gx.reshape([1, 1, 1, w]).expand([b, 1, h, w])
            gx.stop_gradient = True

            # y coordinate, normalized to [-1, 1] along the height axis.
            gy = paddle.arange(h, dtype='float32') / (h - 1.) * 2.0 - 1.
            gy = gy.reshape([1, 1, h, 1]).expand([b, 1, h, w])
            gy.stop_gradient = True

            # Append the coordinate channels, then apply an ordinary convolution.
            y = paddle.concat([x, gx, gy], axis=1)
            y = self.conv(y)
            return y
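To make the normalization concrete, here is a small sketch of the values that end up in the two coordinate channels. It uses plain Python lists instead of Paddle tensors, and the helper names `normalized_coords` and `coord_channels` are hypothetical. The `n == 1` branch is an assumption added here: the formula `arange(n) / (n - 1) * 2 - 1` divides by zero for a one-pixel axis, and returning 0.0 is just one possible choice.

```python
def normalized_coords(n):
    """n evenly spaced values in [-1, 1], mirroring arange(n) / (n - 1) * 2 - 1."""
    if n == 1:
        # Assumption: the formula is undefined for a single pixel; use the centre.
        return [0.0]
    return [i / (n - 1) * 2.0 - 1.0 for i in range(n)]


def coord_channels(h, w):
    """Build the two h x w coordinate channels for one sample."""
    xs = normalized_coords(w)
    ys = normalized_coords(h)
    gx = [[xs[j] for j in range(w)] for _ in range(h)]  # varies along the width
    gy = [[ys[i] for _ in range(w)] for i in range(h)]  # varies along the height
    return gx, gy


gx, gy = coord_channels(3, 5)
print(gx[0])                    # [-1.0, -0.5, 0.0, 0.5, 1.0]
print([row[0] for row in gy])   # [-1.0, 0.0, 1.0]
```

Every row of `gx` is identical and every column of `gy` is identical, which is what the `reshape(...).expand(...)` calls produce in the Paddle version.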

    class dcn2(paddle.nn.Layer):
        def __init__(self, num_classes=1):
            super(dcn2, self).__init__()
            self.conv1 = paddle.nn.Conv2D(in_channels=3, out_channels=32, kernel_size=(3, 3), stride=1, padding=1)
            self.conv2 = paddle.nn.Conv2D(in_channels=32, out_channels=64, kernel_size=(3, 3), stride=2, padding=0)
            self.conv3 = paddle.nn.Conv2D(in_channels=64, out_channels=64, kernel_size=(3, 3), stride=2, padding=0)
            # Note: offsets and mask are defined but never called in forward().
            self.offsets = paddle.nn.Conv2D(64, 18, kernel_size=3, stride=2, padding=1)
            self.mask = paddle.nn.Conv2D(64, 9, kernel_size=3, stride=2, padding=1)
            # The last convolution is replaced by CoordConv.
            self.conv4 = CoordConv(64, 64, (3, 3), 2, 1)
            self.flatten = paddle.nn.Flatten()
            self.linear1 = paddle.nn.Linear(in_features=1024, out_features=64)
            self.linear2 = paddle.nn.Linear(in_features=64, out_features=num_classes)

        def forward(self, x):
            x = self.conv1(x)
            x = F.relu(x)
            x = self.conv2(x)
            x = F.relu(x)
            x = self.conv3(x)
            x = F.relu(x)
            x = self.conv4(x)
            x = F.relu(x)
            x = self.flatten(x)
            x = self.linear1(x)
            x = F.relu(x)
            x = self.linear2(x)
            return x
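The `in_features=1024` of `linear1` follows from how each convolution changes the spatial size of a 32x32 input. The sketch below is an illustration added here, not part of the original code; it uses the standard output-size formula for a convolution with dilation 1, and the helper name `conv_out_size` is hypothetical.

```python
def conv_out_size(size, kernel, stride, padding):
    """Spatial output size of a convolution (dilation 1, floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


# (name, kernel, stride, padding) for the four convolutions in the network.
layers = [
    ("conv1", 3, 1, 1),
    ("conv2", 3, 2, 0),
    ("conv3", 3, 2, 0),
    ("conv4", 3, 2, 1),
]

size = 32  # input images are 32x32
sizes = []
for name, kernel, stride, padding in layers:
    size = conv_out_size(size, kernel, stride, padding)
    sizes.append(size)
    print(name, "->", size)

# 64 output channels on a 4x4 map are flattened before linear1.
flat_features = 64 * size * size
print(flat_features)  # 1024
```

The coordinate channels change only the number of input channels of `conv4`, not the spatial size, so the flattened size is the same with or without CoordConv.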

    cnn3 = dcn2()
    model3 = paddle.Model(cnn3)
    model3.summary((64, 3, 32, 32))

Output:

    ---------------------------------------------------------------------------
     Layer (type)       Input Shape          Output Shape         Param #
    ===========================================================================
     Conv2D-26          [[64, 3, 32, 32]]    [64, 32, 32, 32]     896
     Conv2D-27          [[64, 32, 32, 32]]   [64, 64, 15, 15]     18,496
     Conv2D-28          [[64, 64, 15, 15]]   [64, 64, 7, 7]       36,928
     Conv2D-31          [[64, 66, 7, 7]]     [64, 64, 4, 4]       38,080
     CoordConv-4        [[64, 64, 7, 7]]     [64, 64, 4, 4]       0
     Flatten-1          [[64, 64, 4, 4]]     [64, 1024]           0
     Linear-1           [[64, 1024]]         [64, 64]             65,600
     Linear-2           [[64, 64]]           [64, 1]              65
    ===========================================================================
    Total params: 160,065
    Trainable params: 160,065
    Non-trainable params: 0
    ---------------------------------------------------------------------------
    Input size (MB): 0.75
    Forward/backward pass size (MB): 26.09
    Params size (MB): 0.61
    Estimated Total Size (MB): 27.45
    ---------------------------------------------------------------------------

    {'total_params': 160065, 'trainable_params': 160065}

For comparison, the baseline network MyNet is identical except that conv4 is a plain Conv2D rather than a CoordConv:

    class MyNet(paddle.nn.Layer):
        def __init__(self, num_classes=1):
            super(MyNet, self).__init__()
            self.conv1 = paddle.nn.Conv2D(in_channels=3, out_channels=32, kernel_size=(3, 3), stride=1, padding=1)
            self.conv2 = paddle.nn.Conv2D(in_channels=32, out_channels=64, kernel_size=(3, 3), stride=2, padding=0)
            self.conv3 = paddle.nn.Conv2D(in_channels=64, out_channels=64, kernel_size=(3, 3), stride=2, padding=0)
            self.conv4 = paddle.nn.Conv2D(in_channels=64, out_channels=64, kernel_size=(3, 3), stride=2, padding=1)
            self.flatten = paddle.nn.Flatten()
            self.linear1 = paddle.nn.Linear(in_features=1024, out_features=64)
            self.linear2 = paddle.nn.Linear(in_features=64, out_features=num_classes)

        def forward(self, x):
            x = self.conv1(x)
            x = F.relu(x)
            x = self.conv2(x)
            x = F.relu(x)
            x = self.conv3(x)
            x = F.relu(x)
            x = self.conv4(x)
            x = F.relu(x)
            x = self.flatten(x)
            x = self.linear1(x)
            x = F.relu(x)
            x = self.linear2(x)
            return x

    # Visualize the model
    cnn1 = MyNet()
    model1 = paddle.Model(cnn1)
    model1.summary((64, 3, 32, 32))

Output:

    ---------------------------------------------------------------------------
     Layer (type)       Input Shape          Output Shape         Param #
    ===========================================================================
     Conv2D-1           [[64, 3, 32, 32]]    [64, 32, 32, 32]     896
     Conv2D-2           [[64, 32, 32, 32]]   [64, 64, 15, 15]     18,496
     Conv2D-3           [[64, 64, 15, 15]]   [64, 64, 7, 7]       36,928
     Conv2D-4           [[64, 64, 7, 7]]     [64, 64, 4, 4]       36,928
     Flatten-1          [[64, 64, 4, 4]]     [64, 1024]           0
     Linear-1           [[64, 1024]]         [64, 64]             65,600
     Linear-2           [[64, 64]]           [64, 1]              65
    ===========================================================================
    Total params: 158,913
    Trainable params: 158,913
    Non-trainable params: 0
    ---------------------------------------------------------------------------
    Input size (MB): 0.75
    Forward/backward pass size (MB): 25.59
    Params size (MB): 0.61
    Estimated Total Size (MB): 26.95
    ---------------------------------------------------------------------------

    {'total_params': 158913, 'trainable_params': 158913}
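The two totals differ by 1,152 parameters, and that gap is explained by the two extra input channels of the CoordConv layer. The following sketch is an illustration added here, with a hypothetical helper `conv2d_params`; it applies the usual parameter count of a 2D convolution with bias to the layer sizes reported in the two summaries.

```python
def conv2d_params(in_ch, out_ch, kernel_h, kernel_w, bias=True):
    """Weights plus optional bias of a standard (non-grouped) 2D convolution."""
    return in_ch * out_ch * kernel_h * kernel_w + (out_ch if bias else 0)


plain = conv2d_params(64, 64, 3, 3)  # conv4 in MyNet
coord = conv2d_params(66, 64, 3, 3)  # conv inside CoordConv: 64 + 2 input channels
extra = coord - plain

print(plain)  # 36928
print(coord)  # 38080
print(extra)  # 1152

# The extra weights are 2 coordinate channels x 64 filters x 3 x 3 taps.
assert extra == 2 * 64 * 3 * 3

# Totals reported by the two summaries.
assert 158913 + extra == 160065
```

Under these assumptions, CoordConv adds well under 1% to the parameter count of this small network.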
