Many people are unsure how to deploy Kubernetes 1.18 on CentOS with kubeadm, so this article summarizes the process in detailed, clearly ordered steps. Let's walk through it.

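Kubernetes is an open-source platform for automating the operation of Linux containers. It removes many of the manual steps involved in deploying and scaling containerized applications. In other words, you can group multiple hosts into a cluster to run Linux containers, and Kubernetes helps you manage those clusters simply and efficiently.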

Check the system version

    [root@localhost]# cat /etc/centos-release
    CentOS Linux release 8.1.1911 (Core)

    [root@localhost ~]# cat /etc/sysconfig/network-scripts/ifcfg-enp0s3
    TYPE=Ethernet
    PROXY_METHOD=none
    BROWSER_ONLY=no
    BOOTPROTO=static
    DEFROUTE=yes
    IPV4_FAILURE_FATAL=no
    IPV6INIT=yes
    IPV6_AUTOCONF=yes
    IPV6_DEFROUTE=yes
    IPV6_FAILURE_FATAL=no
    IPV6_ADDR_GEN_MODE=stable-privacy
    NAME=enp0s3
    UUID=039303a5-c70d-4973-8c91-97eaa071c23d
    DEVICE=enp0s3
    ONBOOT=yes
    IPADDR=192.168.122.21
    NETMASK=255.255.255.0
    GATEWAY=192.168.122.1
    DNS1=223.5.5.5

Add the Aliyun repository

    [root@localhost ~]# rm -rfv /etc/yum.repos.d/*
    [root@localhost ~]# curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-8.repo

Configure the hostname

    [root@master01 ~]# cat /etc/hosts
    127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
    ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
    192.168.122.21 master01.paas.com master01
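The transcript shows the resulting /etc/hosts but not how the entry was added. A minimal sketch of one way to add it idempotently follows; the function name `add_hosts_entry` and the `HOSTS_FILE` override are hypothetical helpers for illustration, not part of the original steps.

```shell
# Append "IP FQDN SHORTNAME" to a hosts file unless the IP is already listed.
# HOSTS_FILE is a hypothetical override so the function can be tried on a copy.
add_hosts_entry() {
    local ip="$1" fqdn="$2" short="$3"
    local hosts_file="${HOSTS_FILE:-/etc/hosts}"
    # -F: fixed string, -w: whole-word match, so 192.168.122.2 does not match 192.168.122.21
    if ! grep -qwF "$ip" "$hosts_file"; then
        printf '%s %s %s\n' "$ip" "$fqdn" "$short" >> "$hosts_file"
    fi
}

# On the real host (as root), something like:
#   hostnamectl set-hostname master01.paas.com
#   add_hosts_entry 192.168.122.21 master01.paas.com master01
```

Running the function twice leaves a single entry, which makes it safe to include in a provisioning script.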

Turn off swap and comment out the swap partition

    [root@master01 ~]# swapoff -a
    [root@master01 ~]# cat /etc/fstab
    #
    # /etc/fstab
    # Created by anaconda on Tue Mar 31 22:44:34 2020
    #
    # Accessible filesystems, by reference, are maintained under '/dev/disk/'.
    # See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info.
    #
    # After editing this file, run 'systemctl daemon-reload' to update systemd
    # units generated from this file.
    #
    /dev/mapper/cl-root / xfs defaults 0 0
    UUID=5fecb240-379b-4331-ba04-f41338e81a6e /boot ext4 defaults 1 2
    /dev/mapper/cl-home /home xfs defaults 0 0
    #/dev/mapper/cl-swap swap swap defaults 0 0
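The fstab above was edited by hand. As a sketch of how the same edit could be scripted, the hypothetical function below comments out active swap entries with sed (GNU sed is assumed for `-i.bak` and `-E`) and keeps a backup copy.

```shell
# Comment out every active swap entry in an fstab-style file, keeping a .bak copy.
disable_swap_in_fstab() {
    local fstab="${1:-/etc/fstab}"
    # Match uncommented lines that contain "swap" as a whitespace-delimited field.
    sed -i.bak -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}

# On the real host (as root), something like:
#   swapoff -a
#   disable_swap_in_fstab
```

Lines that are already commented are skipped, so repeating the call does not stack extra `#` characters.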

Configure kernel parameters so that bridged IPv4 traffic is passed to the iptables chains

    [root@master01 ~]# vim /etc/sysctl.d/k8s.conf
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    [root@master01 ~]# sysctl --system
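For unattended setups, the same file can be written without an editor. This is a sketch only; the function name `write_k8s_sysctl` is hypothetical, and the `modprobe br_netfilter` step in the comments is an addition not shown in the original transcript (the `net.bridge.*` keys are only available once that kernel module is loaded).

```shell
# Write the two bridge settings to a sysctl drop-in file.
write_k8s_sysctl() {
    local conf="${1:-/etc/sysctl.d/k8s.conf}"
    cat > "$conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
}

# On the real host (as root), something like:
#   modprobe br_netfilter
#   write_k8s_sysctl
#   sysctl --system
```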

    [root@master01 ~]# yum install vim bash-completion net-tools gcc -y

Install docker-ce from the Aliyun repository

    [root@master01 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2
    [root@master01 ~]# yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
    [root@master01 ~]# yum -y install docker-ce

If installing docker-ce fails with the following error

    [root@master01 ~]# yum -y install docker-ce
    CentOS-8 - Base - mirrors.aliyun.com 14 kB/s | 3.8 kB 00:00
    CentOS-8 - Extras - mirrors.aliyun.com 6.4 kB/s | 1.5 kB 00:00
    CentOS-8 - AppStream - mirrors.aliyun.com 16 kB/s | 4.3 kB 00:00
    Docker CE Stable - x86_64 40 kB/s | 22 kB 00:00
    Error:
     Problem: package docker-ce-3:19.03.8-3.el7.x86_64 requires containerd.io >= 1.2.2-3, but none of the providers can be installed
      - cannot install the best candidate for the job
      - package containerd.io-1.2.10-3.2.el7.x86_64 is excluded
      - package containerd.io-1.2.13-3.1.el7.x86_64 is excluded
      - package containerd.io-1.2.2-3.3.el7.x86_64 is excluded
      - package containerd.io-1.2.2-3.el7.x86_64 is excluded
      - package containerd.io-1.2.4-3.1.el7.x86_64 is excluded
      - package containerd.io-1.2.5-3.1.el7.x86_64 is excluded
      - package containerd.io-1.2.6-3.3.el7.x86_64 is excluded
    (try to add '--skip-broken' to skip uninstallable packages or '--nobest' to use not only best candidate packages)

Solution

    [root@master01 ~]# wget https://download.docker.com/linux/centos/7/x86_64/edge/Packages/containerd.io-1.2.6-3.3.el7.x86_64.rpm
    [root@master01 ~]# yum install containerd.io-1.2.6-3.3.el7.x86_64.rpm

After that, installing docker-ce succeeds.
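The yum error message itself suggests another route, `--nobest`, which lets the resolver fall back to a docker-ce build whose dependencies can be satisfied. The sketch below is an untested alternative to the manual containerd.io install, not what this walkthrough did; the function name is hypothetical, and the Docker version that ends up installed may be older than 19.03.

```shell
# Let the resolver pick an installable docker-ce candidate instead of insisting on the newest one.
install_docker_nobest() {
    yum install -y docker-ce --nobest
}

# On the real host (as root):
#   install_docker_nobest
```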

Add the Aliyun Docker registry accelerator

    [root@master01 ~]# mkdir -p /etc/docker
    [root@master01 ~]# tee /etc/docker/daemon.json
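The `tee` command above is cut off in the source, so the actual file content is unknown. As a sketch under that caveat, a daemon.json that configures a mirror usually sets the `registry-mirrors` key; the function name and the mirror URL below are placeholders, and the real accelerator address comes from your own Aliyun account.

```shell
# Write a Docker daemon.json that points at a registry mirror.
# The mirror URL passed in is an assumption; the original article's value is not available.
write_docker_mirror_config() {
    local mirror="$1"
    local conf="${2:-/etc/docker/daemon.json}"
    mkdir -p "$(dirname "$conf")"
    cat > "$conf" <<EOF
{
  "registry-mirrors": ["${mirror}"]
}
EOF
}

# On the real host (as root), with a placeholder mirror ID:
#   write_docker_mirror_config "https://<your-id>.mirror.aliyuncs.com"
#   systemctl daemon-reload && systemctl restart docker
```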

Add the Aliyun Kubernetes repository

    [root@master01 ~]# cat
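The `cat` command above is also truncated in the source. A plausible repo definition is sketched below; the function name is hypothetical, and the `baseurl` and `gpgkey` values are assumptions based on the commonly used Aliyun mirror layout, so verify them against the mirror before relying on them.

```shell
# Write a yum repo file for the Kubernetes packages on the Aliyun mirror.
# URLs are assumed, not taken from the original article.
write_k8s_repo() {
    local repo_file="${1:-/etc/yum.repos.d/kubernetes.repo}"
    cat > "$repo_file" <<'EOF'
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
}

# On the real host (as root):
#   write_k8s_repo
```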

Install

    [root@master01 ~]# yum install kubectl kubelet kubeadm
    [root@master01 ~]# systemctl enable kubelet

    [root@master01 ~]# kubeadm init --kubernetes-version=1.18.0 \
    --apiserver-advertise-address=192.168.122.21 \
    --image-repository registry.aliyuncs.com/google_containers \
    --service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16

The pod network is 10.122.0.0/16, and the API server address is the master's own IP.

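This step is critical. By default kubeadm pulls the required images from k8s.gcr.io, which is not reachable from mainland China, so --image-repository is used to point at the Aliyun image registry.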
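Because image pulls are the step most likely to fail, it can help to list and pre-pull the images from the mirror before running `kubeadm init`. The sketch below assumes the same version and repository as the init command; the function and variable names are hypothetical.

```shell
K8S_VERSION="v1.18.0"
IMAGE_REPO="registry.aliyuncs.com/google_containers"

# List, then pull, the control-plane images from the mirror.
prepull_images() {
    kubeadm config images list --kubernetes-version "$K8S_VERSION" --image-repository "$IMAGE_REPO" &&
        kubeadm config images pull --kubernetes-version "$K8S_VERSION" --image-repository "$IMAGE_REPO"
}

# On the real host (as root, with Docker running):
#   prepull_images
```

If the pull fails here, the mirror or the network is the problem, which is easier to diagnose than a failure halfway through `kubeadm init`.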

After the cluster initializes successfully, the following is returned:

    W0408 09:36:36.121603 14098 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
    [init] Using Kubernetes version: v1.18.0
    [preflight] Running pre-flight checks
        [WARNING FileExisting-tc]: tc not found in system path
    [preflight] Pulling images required for setting up a Kubernetes cluster
    [preflight] This might take a minute or two, depending on the speed of your internet connection
    [preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Starting the kubelet
    [certs] Using certificateDir folder "/etc/kubernetes/pki"
    [certs] Generating "ca" certificate and key
    [certs] Generating "apiserver" certificate and key
    [certs] apiserver serving cert is signed for DNS names [master01.paas.com kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.10.0.1 192.168.122.21]
    [certs] Generating "apiserver-kubelet-client" certificate and key
    [certs] Generating "front-proxy-ca" certificate and key
    [certs] Generating "front-proxy-client" certificate and key
    [certs] Generating "etcd/ca" certificate and key
    [certs] Generating "etcd/server" certificate and key
    [certs] etcd/server serving cert is signed for DNS names [master01.paas.com localhost] and IPs [192.168.122.21 127.0.0.1 ::1]
    [certs] Generating "etcd/peer" certificate and key
    [certs] etcd/peer serving cert is signed for DNS names [master01.paas.com localhost] and IPs [192.168.122.21 127.0.0.1 ::1]
    [certs] Generating "etcd/healthcheck-client" certificate and key
    [certs] Generating "apiserver-etcd-client" certificate and key
    [certs] Generating "sa" key and public key
    [kubeconfig] Using kubeconfig folder "/etc/kubernetes"
    [kubeconfig] Writing "admin.conf" kubeconfig file
    [kubeconfig] Writing "kubelet.conf" kubeconfig file
    [kubeconfig] Writing "controller-manager.conf" kubeconfig file
    [kubeconfig] Writing "scheduler.conf" kubeconfig file
    [control-plane] Using manifest folder "/etc/kubernetes/manifests"
    [control-plane] Creating static Pod manifest for "kube-apiserver"
    [control-plane] Creating static Pod manifest for "kube-controller-manager"
    W0408 09:36:43.343191 14098 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
    [control-plane] Creating static Pod manifest for "kube-scheduler"
    W0408 09:36:43.344303 14098 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
    [etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
    [wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
    [apiclient] All control plane components are healthy after 23.002541 seconds
    [upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
    [kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster
    [upload-certs] Skipping phase. Please see --upload-certs
    [mark-control-plane] Marking the node master01.paas.com as control-plane by adding the label "node-role.kubernetes.io/master=''"
    [mark-control-plane] Marking the node master01.paas.com as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
    [bootstrap-token] Using token: v2r5a4.veazy2xhzetpktfz
    [bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
    [bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
    [bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
    [bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
    [kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
    [addons] Applied essential addon: CoreDNS
    [addons] Applied essential addon: kube-proxy
    Your Kubernetes control-plane has initialized successfully!
    To start using your cluster, you need to run the following as a regular user:
      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config
    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/
    Then you can join any number of worker nodes by running the following on each as root:
    kubeadm join 192.168.122.21:6443 --token v2r5a4.veazy2xhzetpktfz \
        --discovery-token-ca-cert-hash sha256:daded8514c8350f7c238204979039ff9884d5b595ca950ba8bbce80724fd65d4
    [root@master01 ~]#

Record the last part of the output (the kubeadm join command); it must be run on every other node that joins the Kubernetes cluster.
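If the join command is lost, or the bootstrap token has expired (tokens are valid for 24 hours by default), it can be regenerated on the master. The sketch below uses hypothetical function names; the openssl pipeline recomputes the value for `--discovery-token-ca-cert-hash` from the cluster CA and assumes the default certificate path.

```shell
# Print a fresh, complete join command with a new token.
print_join_command() {
    kubeadm token create --print-join-command
}

# Recompute the SHA-256 hash of the CA public key used by --discovery-token-ca-cert-hash.
ca_cert_hash() {
    local ca="${1:-/etc/kubernetes/pki/ca.crt}"
    openssl x509 -pubkey -in "$ca" -noout \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //'
}

# On the master (as root):
#   print_join_command
#   echo "sha256:$(ca_cert_hash)"
```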

Set up the kubectl configuration as prompted

    [root@master01 ~]# mkdir -p $HOME/.kube
    [root@master01 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
    [root@master01 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

Run the following command to enable kubectl auto-completion

    [root@master01 ~]# source
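The `source` command above is truncated in the source. The usual way to load completion in bash is `source <(kubectl completion bash)`, which relies on the bash-completion package installed earlier. The sketch below persists that line in a startup file; the function name is hypothetical.

```shell
# Add kubectl completion to a bash startup file, once.
enable_kubectl_completion() {
    local rc="${1:-$HOME/.bashrc}"
    local line='source <(kubectl completion bash)'
    # -x: whole-line match, -F: fixed string; append only if the line is absent
    grep -qxF "$line" "$rc" 2>/dev/null || printf '%s\n' "$line" >> "$rc"
}

# For the current user:
#   enable_kubectl_completion
#   source ~/.bashrc
```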

View the nodes and pods

    [root@master01 ~]# kubectl get node
    NAME                STATUS     ROLES    AGE     VERSION
    master01.paas.com   NotReady   master   2m29s   v1.18.0
    [root@master01 ~]# kubectl get pod --all-namespaces
    NAMESPACE     NAME                                        READY   STATUS    RESTARTS   AGE
    kube-system   coredns-7ff77c879f-fsj9l                    0/1     Pending   0          2m12s
    kube-system   coredns-7ff77c879f-q5ll2                    0/1     Pending   0          2m12s
    kube-system   etcd-master01.paas.com                      1/1     Running   0          2m22s
    kube-system   kube-apiserver-master01.paas.com            1/1     Running   0          2m22s
    kube-system   kube-controller-manager-master01.paas.com   1/1     Running   0          2m22s
    kube-system   kube-proxy-th572                            1/1     Running   0          2m12s
    kube-system   kube-scheduler-master01.paas.com            1/1     Running   0          2m22s
    [root@master01 ~]#

The node is NotReady and the coredns pods are Pending because no pod network add-on is installed yet, so install Calico:

    [root@master01 ~]# kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml
    configmap/calico-config created
    customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created
    clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created
    clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created
    clusterrole.rbac.authorization.k8s.io/calico-node created
    clusterrolebinding.rbac.authorization.k8s.io/calico-node created
    daemonset.apps/calico-node created
    serviceaccount/calico-node created
    deployment.apps/calico-kube-controllers created
    serviceaccount/calico-kube-controllers created

View the pods and node

    [root@master01 ~]# kubectl get pod --all-namespaces
    NAMESPACE     NAME                                        READY   STATUS    RESTARTS   AGE
    kube-system   calico-kube-controllers-555fc8cc5c-k8rbk    1/1     Running   0          36s
    kube-system   calico-node-5km27                           1/1     Running   0          36s
    kube-system   coredns-7ff77c879f-fsj9l                    1/1     Running   0          5m22s
    kube-system   coredns-7ff77c879f-q5ll2                    1/1     Running   0          5m22s
    kube-system   etcd-master01.paas.com                      1/1     Running   0          5m32s
    kube-system   kube-apiserver-master01.paas.com            1/1     Running   0          5m32s
    kube-system   kube-controller-manager-master01.paas.com   1/1     Running   0          5m32s
    kube-system   kube-proxy-th572                            1/1     Running   0          5m22s
    kube-system   kube-scheduler-master01.paas.com            1/1     Running   0          5m32s
    [root@master01 ~]# kubectl get node
    NAME                STATUS   ROLES    AGE     VERSION
    master01.paas.com   Ready    master   5m47s   v1.18.0
    [root@master01 ~]#

The cluster is now healthy.
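Instead of re-running `kubectl get` until everything turns Ready, a script can block on the same conditions. This is a sketch with a hypothetical function name; it assumes the DaemonSet is named `calico-node` in `kube-system`, as in the apply output above.

```shell
# Block until every node reports Ready and the calico-node DaemonSet has rolled out.
wait_for_cluster() {
    local timeout="${1:-300s}"
    kubectl wait --for=condition=Ready node --all --timeout="$timeout" &&
        kubectl -n kube-system rollout status daemonset/calico-node --timeout="$timeout"
}

# On the master:
#   wait_for_cluster 300s
```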

The official dashboard deployment does not expose its Service through a NodePort. Download the YAML file locally and add a NodePort to the Service.

    [root@master01 ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc7/aio/deploy/recommended.yaml
    [root@master01 ~]# vim recommended.yaml
    kind: Service
    apiVersion: v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kubernetes-dashboard
    spec:
      type: NodePort
      ports:
        - port: 443
          targetPort: 8443
          nodePort: 30000
      selector:
        k8s-app: kubernetes-dashboard
    [root@master01 ~]# kubectl create -f recommended.yaml
    namespace/kubernetes-dashboard created
    serviceaccount/kubernetes-dashboard created
    service/kubernetes-dashboard created
    secret/kubernetes-dashboard-certs created
    secret/kubernetes-dashboard-csrf created
    secret/kubernetes-dashboard-key-holder created
    configmap/kubernetes-dashboard-settings created
    role.rbac.authorization.k8s.io/kubernetes-dashboard created
    clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created
    rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created
    clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created
    deployment.apps/kubernetes-dashboard created
    service/dashboard-metrics-scraper created
    deployment.apps/dashboard-metrics-scraper created
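An alternative to editing the manifest, not used in this walkthrough, is to create the unmodified recommended.yaml and then patch the Service. The sketch below uses a hypothetical function name and assumes the Service has the single 443 to 8443 port shown above, since a merge patch replaces the whole `ports` list.

```shell
# Switch the dashboard Service to NodePort with a fixed node port.
expose_dashboard_nodeport() {
    local node_port="${1:-30000}"
    kubectl -n kubernetes-dashboard patch service kubernetes-dashboard --type merge \
        -p "{\"spec\":{\"type\":\"NodePort\",\"ports\":[{\"port\":443,\"targetPort\":8443,\"nodePort\":${node_port}}]}}"
}

# On the master:
#   expose_dashboard_nodeport 30000
```

Either way, the dashboard is then reachable over HTTPS on port 30000 of the node.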

View the pods and services in the kubernetes-dashboard namespace

    NAME                                        READY   STATUS    RESTARTS   AGE
    dashboard-metrics-scraper-dc6947fbf-869kf   1/1     Running   0          37s
    kubernetes-dashboard-5d4dc8b976-sdxxt       1/1     Running   0          37s
    [root@master01 ~]# kubectl get svc -n kubernetes-dashboard
    NAME                        TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)         AGE
    dashboard-metrics-scraper   ClusterIP   10.10.58.93    <none>        8000/TCP        44s
    kubernetes-dashboard        NodePort    10.10.132.66   <none>        443:30000/TCP   44s
    [root@master01 ~]#

Log in with a token. Run the following command to obtain it

    [root@master01 ~]# kubectl describe secrets -n kubernetes-dashboard kubernetes-dashboard-token-t4hxz | grep token | awk 'NR==3{print $2}'
    eyJhbGciOiJSUzI1NiIsImtpZCI6IlhJaDgyTWEzZ3FtWE9hTnJqUHN1akdHZU1pRHN3QWM2RUlQbUVOT0g0Qm8ifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC10b2tlbi10NGh5eiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6ImUxOWYwMGI5LTI3MWItNDY5OS1hMjI3LTAzZWEyZTllMDE4YiIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDprdWJlcm5ldGVzLWRhc2hib2FyZCJ9.Mcw9zYSbTfhaYV38vlEaI0CSomYLtb05F2AIGpyT_PjIN8xmRdnIQhWGANBDuuDjdxScSXHOytHAKdj3pzBFVw_lfU5PseBg6hmdv_EPFPh3GvRd9XCs0TE5CVX8qfHkAGKc-DltA7jPwt5VqIFjnolLLGXB-exhiU73YMG_Xy9dZE-u0KKCvSq7XZDR87P_X30JYCAZXDlxcv8iOsuI4I-wlacm6LRF6HgyJqctJNVyE7seVVIgLqetAtt9LicTo6BBozbefHeK6zqRYeITU8AHhe-PLS4xo2fey5up77v4vyPHy_SEnKOtZcBzje1XKNPolGfiXItLYF7u95m9_A

After logging in, the dashboard log shows the following:

    [root@master01 ~]# kubectl logs -f -n kubernetes-dashboard kubernetes-dashboard-5d4dc8b976-sdxxt
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: namespaces is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "namespaces" in API group "" at the cluster scope
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/cronjob/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 01:54:31 Getting list of all cron jobs in the cluster
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: cronjobs.batch is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "cronjobs" in API group "batch" in the namespace "default"
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 POST /api/v1/token/refresh request from 192.168.122.21:7788: { contents hidden }
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/daemonset/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/deployment/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: daemonsets.apps is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "daemonsets" in API group "apps" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/csrftoken/token request from 192.168.122.21:7788:
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 01:54:31 Getting list of all deployments in the cluster
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: deployments.apps is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "deployments" in API group "apps" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: replicasets.apps is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "replicasets" in API group "apps" in the namespace "default"
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/job/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/pod/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 01:54:31 Getting list of all jobs in the cluster
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: jobs.batch is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "jobs" in API group "batch" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 01:54:31 Getting list of all pods in the cluster
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"
    2020/04/08 01:54:31 Getting pod metrics
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/replicaset/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 01:54:31 Getting list of all replica sets in the cluster
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/replicationcontroller/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: replicasets.apps is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "replicasets" in API group "apps" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"
    2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 01:54:31 Getting list of all replication controllers in the cluster
    2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: replicationcontrollers is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "replicationcontrollers" in API group "" in the namespace "default"

Solution

    [root@master01 ~]# kubectl create clusterrolebinding serviceaccount-cluster-admin --clusterrole=cluster-admin --group=system:serviceaccount
    clusterrolebinding.rbac.authorization.k8s.io/serviceaccount-cluster-admin created
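The binding above targets a whole group. Note that the group Kubernetes documents for all service accounts is spelled `system:serviceaccounts`, and granting cluster-admin that widely is only reasonable on a throwaway test cluster. A narrower sketch, which is an alternative and not what this walkthrough ran, binds only the dashboard's own service account; the binding name `dashboard-cluster-admin` is hypothetical.

```shell
# Grant cluster-admin to the kubernetes-dashboard service account only.
bind_dashboard_admin() {
    kubectl create clusterrolebinding dashboard-cluster-admin \
        --clusterrole=cluster-admin \
        --serviceaccount=kubernetes-dashboard:kubernetes-dashboard
}

# On the master:
#   bind_dashboard_admin
```

The `--serviceaccount` value takes the form namespace:name, matching the user named in the "forbidden" log lines.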

Check the dashboard log again

    [root@master01 ~]# kubectl logs -f -n kubernetes-dashboard kubernetes-dashboard-5d4dc8b976-sdxxt
    2020/04/08 02:07:03 Getting list of namespaces
    2020/04/08 02:07:03 [2020-04-08T02:07:03Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:08 [2020-04-08T02:07:08Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 02:07:08 [2020-04-08T02:07:08Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:08 [2020-04-08T02:07:08Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:
    2020/04/08 02:07:08 Getting list of namespaces
    2020/04/08 02:07:08 [2020-04-08T02:07:08Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:13 [2020-04-08T02:07:13Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 02:07:13 [2020-04-08T02:07:13Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:13 [2020-04-08T02:07:13Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:
    2020/04/08 02:07:13 Getting list of namespaces
    2020/04/08 02:07:13 [2020-04-08T02:07:13Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:18 [2020-04-08T02:07:18Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 02:07:18 [2020-04-08T02:07:18Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:18 [2020-04-08T02:07:18Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:
    2020/04/08 02:07:18 Getting list of namespaces
    2020/04/08 02:07:18 [2020-04-08T02:07:18Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:23 [2020-04-08T02:07:23Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 02:07:23 [2020-04-08T02:07:23Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:23 [2020-04-08T02:07:23Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:
    2020/04/08 02:07:23 Getting list of namespaces
    2020/04/08 02:07:23 [2020-04-08T02:07:23Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:28 [2020-04-08T02:07:28Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 02:07:28 [2020-04-08T02:07:28Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:28 [2020-04-08T02:07:28Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:
    2020/04/08 02:07:28 Getting list of namespaces
    2020/04/08 02:07:28 [2020-04-08T02:07:28Z] Outcoming response to 192.168.122.21:7788 with 200 status code
    2020/04/08 02:07:33 [2020-04-08T02:07:33Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:
    2020/04/08 02:07:33 [2020-04-08T02:07:33Z] Outcoming response to 192.168.122.21:7788 with 200 status code

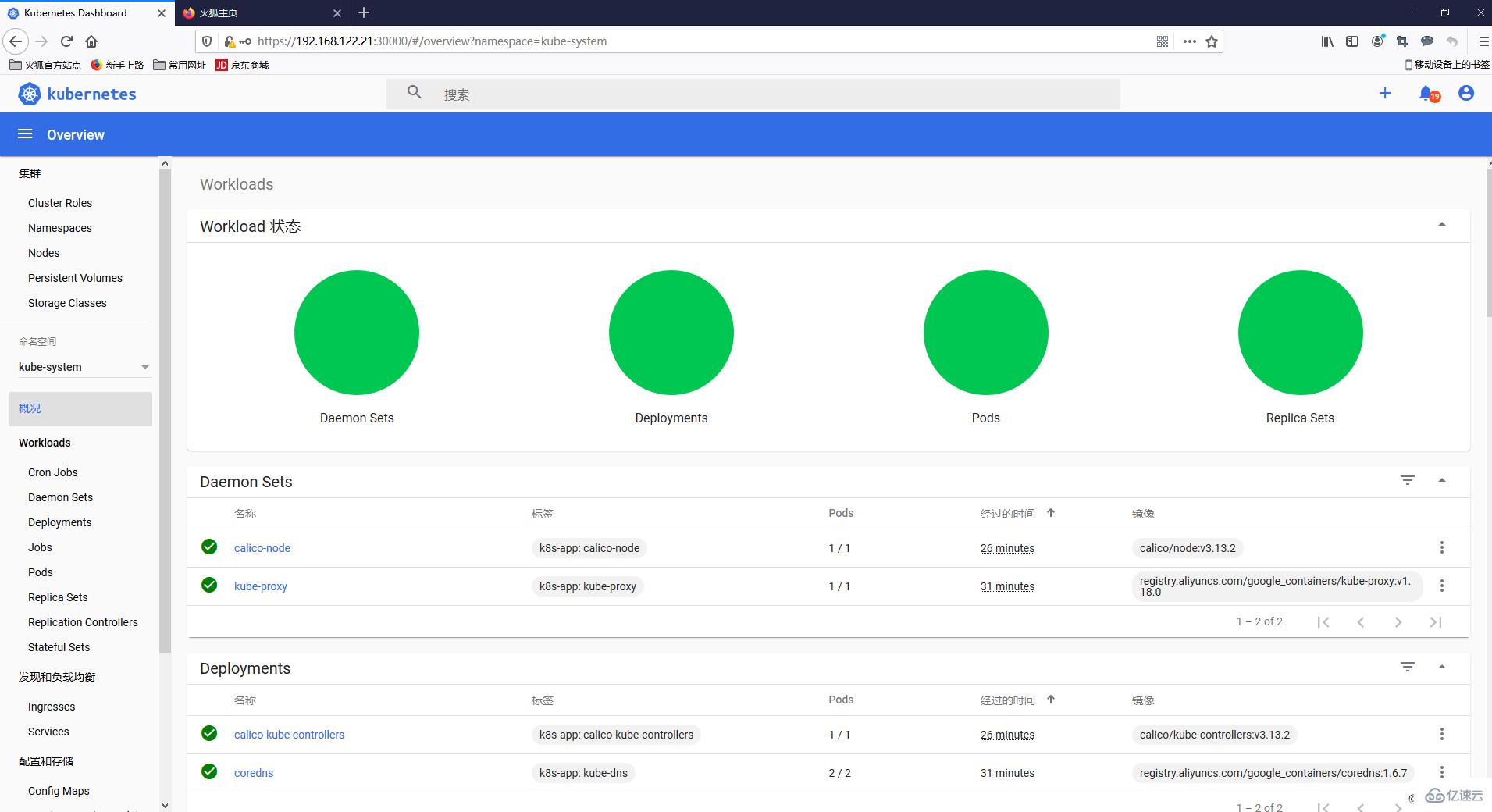

Open the dashboard again and the cluster resources are now displayed.
