
Seeing Spark crash for the first time

Symptom: Spark Shell runs out of memory (OOM)

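I wanted to experiment with graph computation in Spark, so I used Google's web graph dataset web-Google.txt (71.8 MB).

I built the graph with the command: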

import org.apache.spark.graphx.GraphLoader
val graph = GraphLoader.edgeListFile(sc, "hdfs://192.168.0.10:9000/input/graph/web-Google.txt")

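While building the graph, it churned for a long time and then dropped me straight back to the terminal: the shell process had been killed.

The terminal showed: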

scala> val graph = GraphLoader.edgeListFile(sc,"hdfs://192.168.0.10:9000/input/graph/web-Google.txt")

[Stage 0:> (0 + 2) / 2]./bin/spark-shell: line 44: 3592 Killed "${SPARK_HOME}"/bin/spark-submit --class org.apache.spark.repl.Main --name "Spark shell" "$@"
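For reference, `GraphLoader.edgeListFile` expects a plain-text edge list: each line holds a source id and a destination id separated by whitespace, and lines beginning with `#` (like the comment header lines in SNAP files such as web-Google.txt) are skipped. The pure-Scala sketch below parses that format on a single machine just to make it concrete. `EdgeListSketch` and `parseEdgeList` are hypothetical names, the sample lines are made up, and this is only roughly what the loader does per line; it is not how GraphX actually stores the graph, which is partitioned across executors.

```scala
object EdgeListSketch {
  // Hypothetical helper, not a Spark API: roughly mimics the line format that
  // GraphLoader.edgeListFile documents, i.e. one "srcId dstId" pair per line,
  // with lines starting with '#' treated as comments.
  def parseEdgeList(lines: Iterator[String]): Vector[(Long, Long)] =
    lines
      .map(_.trim)
      .filter(line => line.nonEmpty && !line.startsWith("#")) // skip blanks and comment headers
      .map { line =>
        val fields = line.split("\\s+") // tabs or spaces between the two ids
        require(fields.length >= 2, s"Invalid edge line: $line")
        (fields(0).toLong, fields(1).toLong)
      }
      .toVector

  def main(args: Array[String]): Unit = {
    // Made-up sample in the same layout: comment header, then tab-separated ids.
    val sample = Seq("# Directed graph", "# FromNodeId\tToNodeId", "1\t2", "1\t3", "2\t1")
    val edges = parseEdgeList(sample.iterator)
    val vertexCount = edges.flatMap { case (s, d) => Seq(s, d) }.distinct.size
    println(s"edges = ${edges.size}, vertices = $vertexCount")
  }
}
```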

Spark crash number two!

Next I ran a PageRank test, again on Google's web-Google.txt data (71.8 MB):

val rank = graph.pageRank(0.01).vertices

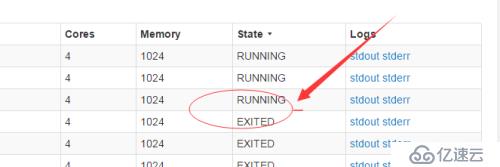
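`graph.pageRank(0.01)` is GraphX's dynamic PageRank: the argument is a convergence tolerance, iteration stops once no vertex's rank changes by more than it, and the reset probability defaults to 0.15. The sketch below is a single-machine, pure-Scala illustration of the update rule given in GraphX's PageRank scaladoc, PR(v) = resetProb + (1 - resetProb) * Σ PR(u) / outDeg(u) over the in-neighbours u of v, with every rank starting at 1.0. It is only an illustration under those assumptions: unlike GraphX it recomputes every vertex each round instead of propagating per-vertex deltas with Pregel, and `PageRankSketch` is a hypothetical name.

```scala
object PageRankSketch {
  // Simplified dynamic PageRank on an in-memory edge list (hypothetical helper, not GraphX).
  // Un-normalized update rule, as in GraphX's scaladoc:
  //   PR(v) = resetProb + (1 - resetProb) * sum over in-neighbours u of PR(u) / outDeg(u)
  def pageRank(edges: Seq[(Long, Long)], tol: Double, resetProb: Double = 0.15): Map[Long, Double] = {
    val vertices: Seq[Long] = edges.flatMap { case (s, d) => Seq(s, d) }.distinct
    // Out-degree of every vertex that has at least one out-edge.
    val outDeg: Map[Long, Int] = edges.groupBy(_._1).map { case (s, es) => s -> es.size }
    // In-neighbours of every vertex that has at least one in-edge.
    val inNbrs: Map[Long, Seq[Long]] = edges.groupBy(_._2).map { case (d, es) => d -> es.map(_._1) }

    var ranks: Map[Long, Double] = vertices.map(v => v -> 1.0).toMap // every rank starts at 1.0
    var maxDelta = if (vertices.isEmpty) 0.0 else Double.PositiveInfinity
    while (maxDelta > tol) {
      val next: Map[Long, Double] = vertices.map { v =>
        val incoming = inNbrs.getOrElse(v, Seq.empty).map(u => ranks(u) / outDeg(u)).sum
        v -> (resetProb + (1 - resetProb) * incoming)
      }.toMap
      // Stop once no vertex moved by more than tol (what the 0.01 in pageRank(0.01) controls).
      maxDelta = vertices.map(v => math.abs(next(v) - ranks(v))).max
      ranks = next
    }
    ranks
  }

  def main(args: Array[String]): Unit = {
    val edges = Seq(1L -> 2L, 2L -> 3L, 3L -> 1L, 1L -> 3L)
    for ((v, r) <- pageRank(edges, tol = 0.01).toSeq.sortBy(_._1))
      println(f"vertex $v%d: rank $r%.4f")
  }
}
```

On the cycle 1 → 2 → 3 → 1 every vertex stays at 1.0, since 0.15 + 0.85 × 1.0 = 1.0. More generally, in this un-normalized form the ranks of a graph without dangling vertices sum to the vertex count rather than to 1.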

The Spark cluster has one master and two workers, and slave2 went down:

16/11/14 09:51:44 INFO cluster.SparkDeploySchedulerBackend: Granted executor ID app-20161114084026-0000/1333 on hostPort 192.168.0.5:42898 with 4 cores, 1024.0 MB RAM

16/11/14 09:51:44 INFO client.AppClient$ClientEndpoint: Executor updated: app-20161114084026-0000/1333 is now RUNNING

16/11/14 09:51:44 INFO client.AppClient$ClientEndpoint: Executor updated: app-20161114084026-0000/1333 is now EXITED (Command exited with code 1)

16/11/14 09:51:44 INFO cluster.SparkDeploySchedulerBackend: Executor app-20161114084026-0000/1333 removed: Command exited with code 1

16/11/14 09:51:44 INFO cluster.SparkDeploySchedulerBackend: Asked to remove non-existent executor 1333

16/11/14 09:51:44 INFO client.AppClient$ClientEndpoint: Executor added: app-20161114084026-0000/1334 on worker-20161114083607-192.168.0.5-42898 (192.168.0.5:42898) with 4 cores

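After that it just kept retrying slave2 over and over: the log shows the worker at 192.168.0.5 being granted a new executor, that executor exiting immediately with code 1, and the next one (ID 1334 after 1333) being launched in its place. Heh.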
