您好,登錄后才能下訂單哦!

您好,登錄后才能下訂單哦!

本篇內容主要講解“Linux日志對接Kibana如何進行配置與部署”,感興趣的朋友不妨來看看。本文介紹的方法操作簡單快捷,實用性強。下面就讓小編來帶大家學習“Linux日志對接Kibana如何進行配置與部署”吧!

在騰訊云帳號下,申請一臺CVM(Linux操作系統)、一個ElasticSearch集群(后面簡稱ES),使用最簡配置即可;申請的CVM和ES,必須在同一個VPC的同一個子網下。

CVM詳情信息

CVM詳情信息

ElasticSearch詳情信息

ElasticSearch詳情信息

為了將Linux日志提取到ES中,我們需要使用Filebeat工具。Filebeat是一個日志文件托運工具,在你的服務器上安裝客戶端后,Filebeat會監控日志目錄或者指定的日志文件,追蹤讀取這些文件(追蹤文件的變化,不停的讀),并且轉發這些信息到ElasticSearch或者logstarsh中存放。當你開啟Filebeat程序的時候,它會啟動一個或多個探測器(prospectors)去檢測你指定的日志目錄或文件,對于探測器找出的每一個日志文件,Filebeat啟動收割進程(harvester),每一個收割進程讀取一個日志文件的新內容,并發送這些新的日志數據到處理程序(spooler),處理程序會集合這些事件,最后Filebeat會發送集合的數據到你指定的地點。

官網簡介:https://www.elastic.co/products/beats/filebeat

首先,登錄待接管日志的CVM,在CVM上下載Filebeat工具:

[root@VM_3_7_centos ~]# cd /opt/ [root@VM_3_7_centos opt]# ll total 4 drwxr-xr-x. 2 root root 4096 Sep 7 2017 rh [root@VM_3_7_centos opt]# wget https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-6.2.2-x86_64.rpm --2018-12-10 20:24:26-- https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-6.2.2-x86_64.rpm Resolving artifacts.elastic.co (artifacts.elastic.co)... 107.21.202.15, 107.21.127.184, 54.225.214.74, ... Connecting to artifacts.elastic.co (artifacts.elastic.co)|107.21.202.15|:443... connected. HTTP request sent, awaiting response... 200 OK Length: 12697788 (12M) [binary/octet-stream] Saving to: ‘filebeat-6.2.2-x86_64.rpm’ 100%[=================================================================================================>] 12,697,788 160KB/s in 1m 41s 2018-12-10 20:26:08 (123 KB/s) - ‘filebeat-6.2.2-x86_64.rpm’ saved [12697788/12697788]

然后,進行安裝filebeat:

[root@VM_3_7_centos opt]# rpm -vi filebeat-6.2.2-x86_64.rpm warning: filebeat-6.2.2-x86_64.rpm: Header V4 RSA/SHA512 Signature, key ID d88e42b4: NOKEY Preparing packages... filebeat-6.2.2-1.x86_64 [root@VM_3_7_centos opt]#

至此,Filebeat安裝完成。

進入Filebeat配置文件目錄:/etc/filebeat/

[root@VM_3_7_centos opt]# cd /etc/filebeat/ [root@VM_3_7_centos filebeat]# ll total 108 -rw-r--r-- 1 root root 44384 Feb 17 2018 fields.yml -rw-r----- 1 root root 52193 Feb 17 2018 filebeat.reference.yml -rw------- 1 root root 7264 Feb 17 2018 filebeat.yml drwxr-xr-x 2 root root 4096 Dec 10 20:35 modules.d [root@VM_3_7_centos filebeat]#

其中,filebeat.yml就是我們需要修改的配置文件。建議修改配置前,先備份此文件。

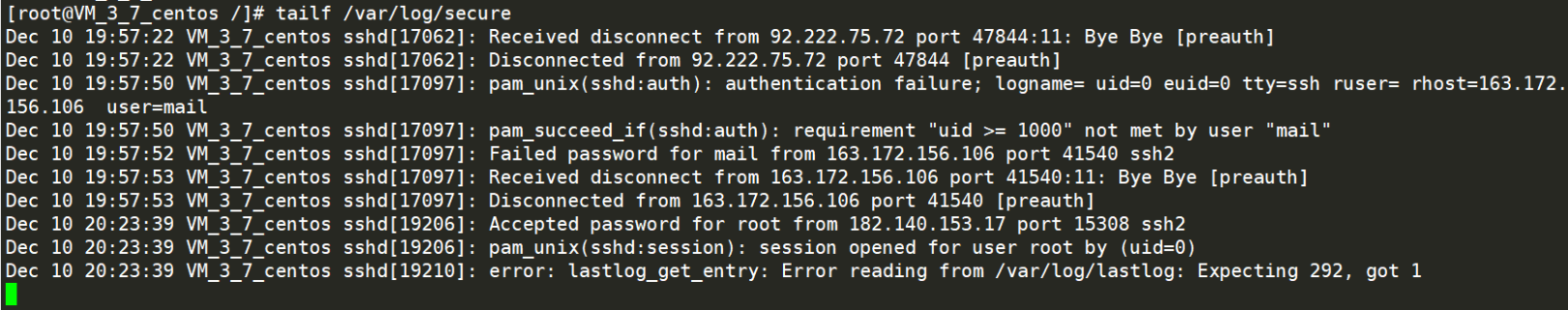

然后,確認需要對接ElasticSearch的Linux的日志目錄,我們以下圖(/var/log/secure)為例。

/var/log/secure日志文件

/var/log/secure日志文件

使用vim打開/etc/filebeat/filebeat.yml文件,修改其中的:

1)Filebeat prospectors類目中,enable默認為false,我們要改為true

2)paths,默認為/var/log/*.log,我們要改為待接管的日志路徑:/var/log/secure

3)Outputs類目中,有ElasticSearchoutput配置,其中hosts默認為"localhost:9200",需要我們手工修改為上面申請的ES子網地址和端口,即**"10.0.3.8:9200"**。

修改好上述內容后,保存退出。

修改好的配置文件全文如下:

[root@VM_3_7_centos /]# vim /etc/filebeat/filebeat.yml

[root@VM_3_7_centos /]# cat /etc/filebeat/filebeat.yml

###################### Filebeat Configuration Example #########################

# This file is an example configuration file highlighting only the most common

# options. The filebeat.reference.yml file from the same directory contains all the

# supported options with more comments. You can use it as a reference.

#

# You can find the full configuration reference here:

# https://www.elastic.co/guide/en/beats/filebeat/index.html

# For more available modules and options, please see the filebeat.reference.yml sample

# configuration file.

#=========================== Filebeat prospectors =============================

filebeat.prospectors:

# Each - is a prospector. Most options can be set at the prospector level, so

# you can use different prospectors for various configurations.

# Below are the prospector specific configurations.

- type: log

# Change to true to enable this prospector configuration.

enabled: true

# Paths that should be crawled and fetched. Glob based paths.

paths:

- /var/log/secure

#- c:\programdata\elasticsearch\logs\*

# Exclude lines. A list of regular expressions to match. It drops the lines that are

# matching any regular expression from the list.

#exclude_lines: ['^DBG']

# Include lines. A list of regular expressions to match. It exports the lines that are

# matching any regular expression from the list.

#include_lines: ['^ERR', '^WARN']

# Exclude files. A list of regular expressions to match. Filebeat drops the files that

# are matching any regular expression from the list. By default, no files are dropped.

#exclude_files: ['.gz$']

# Optional additional fields. These fields can be freely picked

# to add additional information to the crawled log files for filtering

#fields:

# level: debug

# review: 1

### Multiline options

# Mutiline can be used for log messages spanning multiple lines. This is common

# for Java Stack Traces or C-Line Continuation

# The regexp Pattern that has to be matched. The example pattern matches all lines starting with [

#multiline.pattern: ^\[

# Defines if the pattern set under pattern should be negated or not. Default is false.

#multiline.negate: false

# Match can be set to "after" or "before". It is used to define if lines should be append to a pattern

# that was (not) matched before or after or as long as a pattern is not matched based on negate.

# Note: After is the equivalent to previous and before is the equivalent to to next in Logstash

#multiline.match: after

#============================= Filebeat modules ===============================

filebeat.config.modules:

# Glob pattern for configuration loading

path: ${path.config}/modules.d/*.yml

# Set to true to enable config reloading

reload.enabled: false

# Period on which files under path should be checked for changes

#reload.period: 10s

#==================== Elasticsearch template setting ==========================

setup.template.settings:

index.number_of_shards: 3

#index.codec: best_compression

#_source.enabled: false

#================================ General =====================================

# The name of the shipper that publishes the network data. It can be used to group

# all the transactions sent by a single shipper in the web interface.

#name:

# The tags of the shipper are included in their own field with each

# transaction published.

#tags: ["service-X", "web-tier"]

# Optional fields that you can specify to add additional information to the

# output.

#fields:

# env: staging

#============================== Dashboards =====================================

# These settings control loading the sample dashboards to the Kibana index. Loading

# the dashboards is disabled by default and can be enabled either by setting the

# options here, or by using the `-setup` CLI flag or the `setup` command.

#setup.dashboards.enabled: false

# The URL from where to download the dashboards archive. By default this URL

# has a value which is computed based on the Beat name and version. For released

# versions, this URL points to the dashboard archive on the artifacts.elastic.co

# website.

#setup.dashboards.url:

#============================== Kibana =====================================

# Starting with Beats version 6.0.0, the dashboards are loaded via the Kibana API.

# This requires a Kibana endpoint configuration.

setup.kibana:

# Kibana Host

# Scheme and port can be left out and will be set to the default (http and 5601)

# In case you specify and additional path, the scheme is required: http://localhost:5601/path

# IPv6 addresses should always be defined as: https://[2001:db8::1]:5601

#host: "localhost:5601"

#============================= Elastic Cloud ==================================

# These settings simplify using filebeat with the Elastic Cloud (https://cloud.elastic.co/).

# The cloud.id setting overwrites the `output.elasticsearch.hosts` and

# `setup.kibana.host` options.

# You can find the `cloud.id` in the Elastic Cloud web UI.

#cloud.id:

# The cloud.auth setting overwrites the `output.elasticsearch.username` and

# `output.elasticsearch.password` settings. The format is `<user>:<pass>`.

#cloud.auth:

#================================ Outputs =====================================

# Configure what output to use when sending the data collected by the beat.

#-------------------------- Elasticsearch output ------------------------------

output.elasticsearch:

# Array of hosts to connect to.

hosts: ["10.0.3.8:9200"]

# Optional protocol and basic auth credentials.

#protocol: "https"

#username: "elastic"

#password: "changeme"

#----------------------------- Logstash output --------------------------------

#output.logstash:

# The Logstash hosts

#hosts: ["localhost:5044"]

# Optional SSL. By default is off.

# List of root certificates for HTTPS server verifications

#ssl.certificate_authorities: ["/etc/pki/root/ca.pem"]

# Certificate for SSL client authentication

#ssl.certificate: "/etc/pki/client/cert.pem"

# Client Certificate Key

#ssl.key: "/etc/pki/client/cert.key"

#================================ Logging =====================================

# Sets log level. The default log level is info.

# Available log levels are: error, warning, info, debug

#logging.level: debug

# At debug level, you can selectively enable logging only for some components.

# To enable all selectors use ["*"]. Examples of other selectors are "beat",

# "publish", "service".

#logging.selectors: ["*"]

#============================== Xpack Monitoring ===============================

# filebeat can export internal metrics to a central Elasticsearch monitoring

# cluster. This requires xpack monitoring to be enabled in Elasticsearch. The

# reporting is disabled by default.

# Set to true to enable the monitoring reporter.

#xpack.monitoring.enabled: false

# Uncomment to send the metrics to Elasticsearch. Most settings from the

# Elasticsearch output are accepted here as well. Any setting that is not set is

# automatically inherited from the Elasticsearch output configuration, so if you

# have the Elasticsearch output configured, you can simply uncomment the

# following line.

#xpack.monitoring.elasticsearch:

[root@VM_3_7_centos /]#執行下列命令啟動filebeat

[root@VM_3_7_centos /]# sudo /etc/init.d/filebeat start Starting filebeat (via systemctl): [ OK ] [root@VM_3_7_centos /]#

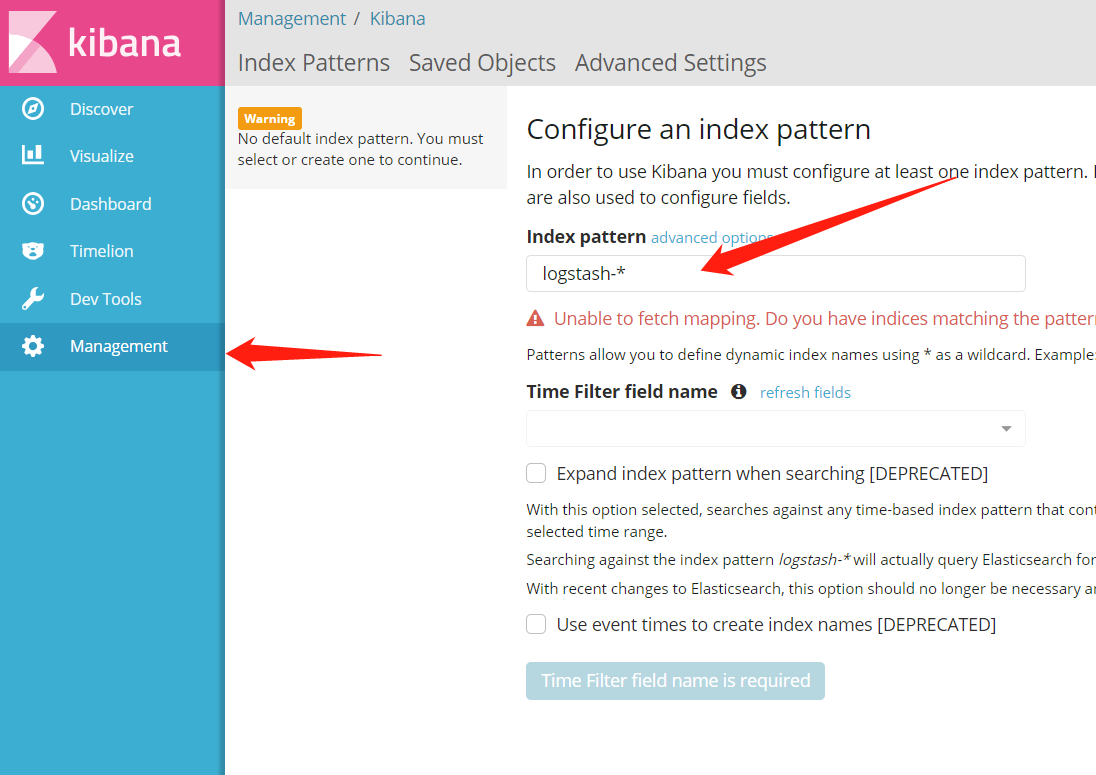

進入ElasticSearch對應的Kibana管理頁,如下圖。

首次訪問Kibana默認會顯示管理頁

首次訪問Kibana默認會顯示管理頁

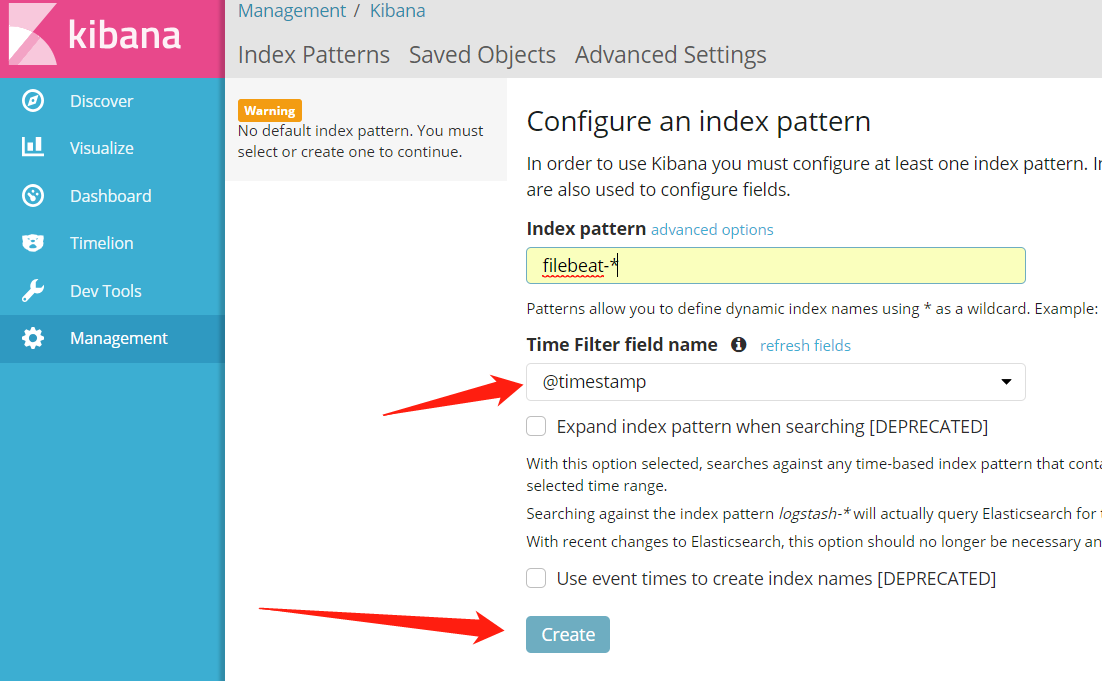

首次登陸,會默認進入Management頁面,我們需要將Index pattern內容修改為:filebeat-*,然后頁面會自動填充**Time Filter field name,**不需手動設置,直接點擊Create即可。點擊Create后,頁面需要一定時間來加載配置和數據,請稍等。如下圖:

將Index pattern內容修改為:filebeat-*,然后點擊Create

將Index pattern內容修改為:filebeat-*,然后點擊Create

至此,CVM上,/var/log/secure日志文件,已對接到ElasticSearch中,歷史日志可以通過Kibana進行查詢,最新產生的日志也會實時同步到Kibana中。

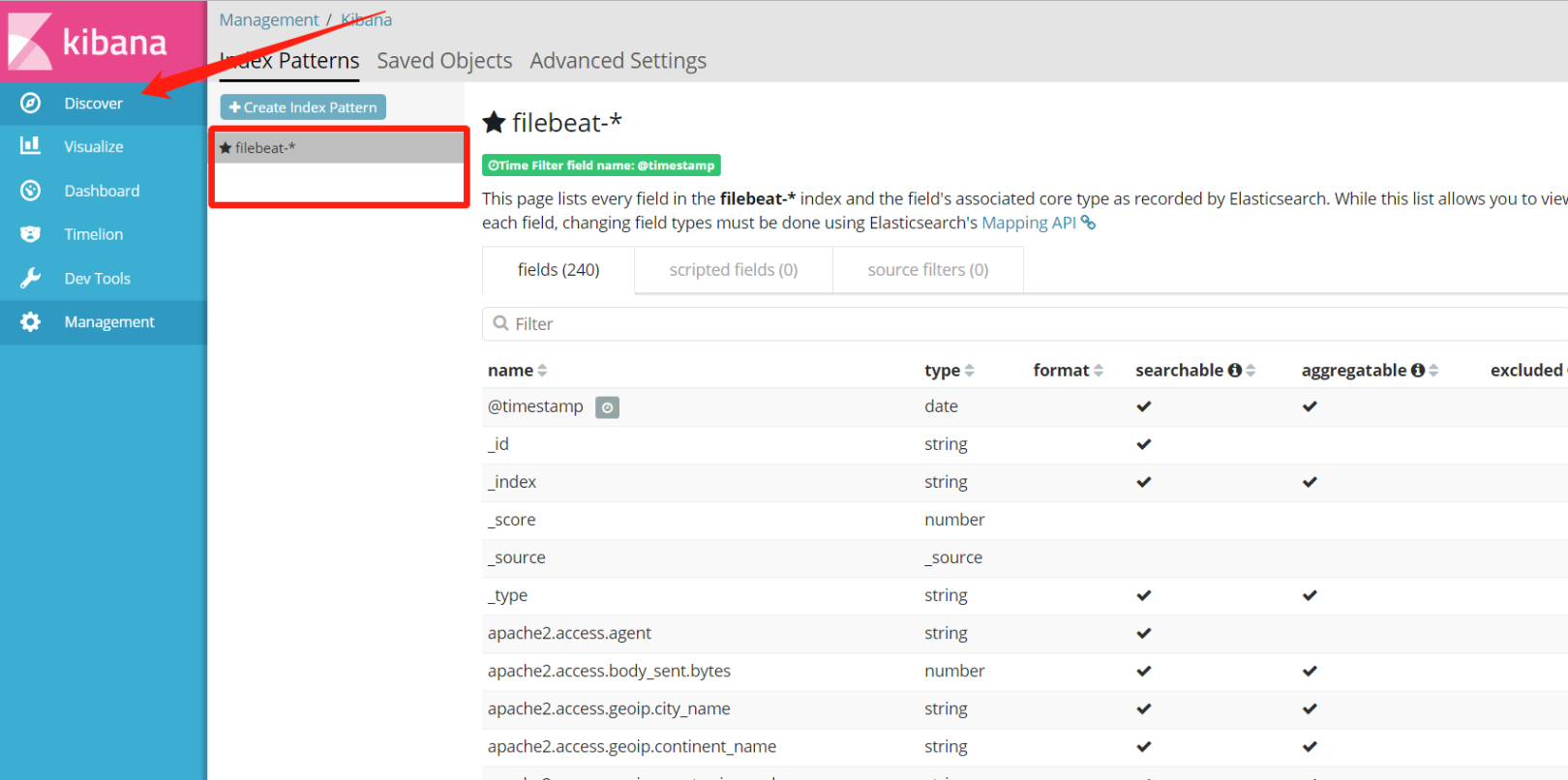

日志接管已完成配置,如何使用呢?

如下圖:

在Index Patterns中可以看到我們配置過的filebeat-*

在Index Patterns中可以看到我們配置過的filebeat-*

點擊Discover,即可看到secure中的所有日志,頁面上方的搜索框中輸入關鍵字,即可完成日志的檢索。如下圖(點擊圖片,可查看高清大圖):

使用Kibana進行日志檢索

使用Kibana進行日志檢索

實際上,檢索只是Kibana提供的諸多功能之一,還有其他功能如可視化、分詞檢索等,還有待后續研究。

到此,相信大家對“Linux日志對接Kibana如何進行配置與部署”有了更深的了解,不妨來實際操作一番吧!這里是億速云網站,更多相關內容可以進入相關頻道進行查詢,關注我們,繼續學習!

免責聲明:本站發布的內容(圖片、視頻和文字)以原創、轉載和分享為主,文章觀點不代表本網站立場,如果涉及侵權請聯系站長郵箱:is@yisu.com進行舉報,并提供相關證據,一經查實,將立刻刪除涉嫌侵權內容。