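This article walks through a real-time stream processing pipeline built on Flume, Kafka, and Spark Streaming, covering each step in detail so that interested readers can use it as a reference.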

The complete real-time stream processing flow based on Flume + Kafka + Spark Streaming

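1. Environment preparation: four test servers

Spark cluster, three nodes: spark1, spark2, spark3

Kafka cluster, three nodes: spark1, spark2, spark3

ZooKeeper cluster, three nodes: spark1, spark2, spark3

Log receiving server: spark1

Log collection server: redis (this machine was set up for Redis development and is now reused for the log collection test, so its hostname was left unchanged)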

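Log collection flow:

log collection server -> log receiving server -> Kafka cluster -> Spark cluster processing

Note: in production, the log collection server is most likely an application server, and the log receiving server is one of the big data servers. Logs travel over the network to the log receiving server and then enter the cluster for processing.

This matters because, in production, network access is often opened in one direction only, to a specific port on a specific server.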
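Because the pipeline depends on that single open port, it can help to confirm from the log collection server that the port is reachable before starting Flume. The following is a minimal sketch, not part of the original setup: the object name `PortCheck` and the helper `isReachable` are hypothetical, and `spark1:666` is simply the Avro endpoint configured later in this article.

```scala
import java.net.{InetSocketAddress, Socket}
import scala.util.Try

object PortCheck {
  /** Returns true if a TCP connection to host:port succeeds within timeoutMs. */
  def isReachable(host: String, port: Int, timeoutMs: Int = 2000): Boolean = {
    val socket = new Socket()
    try {
      // Any failure (refused, timed out, unknown host) is reported as "not reachable".
      Try(socket.connect(new InetSocketAddress(host, port), timeoutMs)).isSuccess
    } finally {
      socket.close()
    }
  }

  def main(args: Array[String]): Unit = {
    // Assumption: spark1:666 is the Avro source of the receiving agent.
    val ok = isReachable("spark1", 666)
    println(s"spark1:666 reachable: $ok")
  }
}
```

A `false` result while the receiving agent is running usually points at a firewall rule or hostname resolution problem rather than at Flume itself.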

Flume version: apache-flume-1.5.0-cdh6.4.9. This version already integrates Kafka support well.

2. Log collection server (aggregation side)

Configure Flume to collect a specific log file as it grows. The contents of collect.conf are as follows:

    # Name the components on this agent
    a1.sources = tailsource-1
    a1.sinks = remotesink
    a1.channels = memoryChnanel-1

    # Describe/configure the source
    a1.sources.tailsource-1.type = exec
    a1.sources.tailsource-1.command = tail -F /opt/modules/tmpdata/logs/1.log
    a1.sources.tailsource-1.channels = memoryChnanel-1

    # Use a channel which buffers events in memory
    a1.channels.memoryChnanel-1.type = memory
    a1.channels.memoryChnanel-1.keep-alive = 10
    a1.channels.memoryChnanel-1.capacity = 100000
    a1.channels.memoryChnanel-1.transactionCapacity = 100000

    # Describe the sink and bind it to the channel
    a1.sinks.remotesink.type = avro
    a1.sinks.remotesink.hostname = spark1
    a1.sinks.remotesink.port = 666
    a1.sinks.remotesink.channel = memoryChnanel-1

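Flume tails the log file in real time and sends each new event over the network, using the Avro sink, to port 666 on the spark1 server.

Start the agent on the log collection side: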

bin/flume-ng agent --conf conf --conf-file conf/collect.conf --name a1 -Dflume.root.logger=INFO,console

3. Log receiving server

Configure Flume to receive the logs in real time. The contents of receive.conf are as follows:

    # agent section
    producer.sources = s
    producer.channels = c
    producer.sinks = r

    # source section
    producer.sources.s.type = avro
    producer.sources.s.bind = spark1
    producer.sources.s.port = 666
    producer.sources.s.channels = c

    # Each sink's type must be defined
    producer.sinks.r.type = org.apache.flume.sink.kafka.KafkaSink
    producer.sinks.r.topic = mytopic
    producer.sinks.r.brokerList = spark1:9092,spark2:9092,spark3:9092
    producer.sinks.r.requiredAcks = 1
    producer.sinks.r.batchSize = 20

    # Specify the channel the sink should use
    producer.sinks.r.channel = c

    # Each channel's type is defined.
    producer.channels.c.type = org.apache.flume.channel.kafka.KafkaChannel
    producer.channels.c.capacity = 10000
    producer.channels.c.transactionCapacity = 1000
    producer.channels.c.brokerList = spark1:9092,spark2:9092,spark3:9092
    producer.channels.c.topic = channel1
    producer.channels.c.zookeeperConnect = spark1:2181,spark2:2181,spark3:2181

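The key points are to set the source to receive the data arriving on network port 666 and to write it to the Kafka cluster; the topic and the ZooKeeper addresses must be configured correctly.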
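Since both agents depend on sources and sinks being bound to a declared channel, a quick consistency check on the configuration text can catch wiring mistakes before an agent is started. The sketch below is only an illustration and is not part of Flume: `FlumeWiringCheck` and `problems` are hypothetical names, and the check covers just three cases (a sink bound to an undeclared channel, a source bound to an undeclared channel, and properties set for a sink that is not listed under `<agent>.sinks`).

```scala
import java.io.StringReader
import java.util.Properties

object FlumeWiringCheck {
  // Load the properties text into an immutable Scala map.
  private def load(config: String): Map[String, String] = {
    val props = new Properties()
    props.load(new StringReader(config))
    val it = props.stringPropertyNames().iterator()
    val builder = Map.newBuilder[String, String]
    while (it.hasNext) {
      val key = it.next()
      builder += (key -> props.getProperty(key).trim)
    }
    builder.result()
  }

  // Component lists such as "a1.sinks = k1 k2" are space-separated.
  private def names(entries: Map[String, String], key: String): Seq[String] =
    entries.get(key).toSeq.flatMap(_.split("\\s+").toSeq).filter(_.nonEmpty)

  /** Returns one message per wiring problem found for the given agent. */
  def problems(agent: String, config: String): Seq[String] = {
    val entries = load(config)
    val channels = names(entries, s"$agent.channels").toSet
    val sinks = names(entries, s"$agent.sinks")

    val sinkProblems = sinks.flatMap { sink =>
      entries.get(s"$agent.sinks.$sink.channel") match {
        case Some(ch) if channels(ch) => None
        case Some(ch) => Some(s"sink $sink uses undeclared channel $ch")
        case None => Some(s"sink $sink has no channel")
      }
    }
    val sourceProblems = names(entries, s"$agent.sources").flatMap { src =>
      names(entries, s"$agent.sources.$src.channels")
        .filterNot(channels)
        .map(ch => s"source $src uses undeclared channel $ch")
    }
    // Properties such as "a1.sinks.k1.type" where k1 is not listed in "a1.sinks".
    val strayProblems = entries.keys.toSeq.sorted
      .filter(_.startsWith(s"$agent.sinks."))
      .map(_.stripPrefix(s"$agent.sinks.").takeWhile(_ != '.'))
      .distinct
      .filterNot(sinks.toSet)
      .map(name => s"properties set for undeclared sink $name")

    sinkProblems ++ sourceProblems ++ strayProblems
  }
}
```

Calling `FlumeWiringCheck.problems("producer", text)` with the contents of the receiving configuration would return an empty sequence when every binding resolves. Note that `java.util.Properties` keeps only the last value of a repeated key, so this sketch cannot detect duplicate assignments.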

Start the agent on the receiving side:

bin/flume-ng agent --conf conf --conf-file conf/receive.conf --name producer -Dflume.root.logger=INFO,console

4. Processing the received data on the Spark cluster

    import kafka.serializer.StringDecoder
    import org.apache.log4j.{Level, Logger}
    import org.apache.spark.{SparkConf, SparkContext}
    import org.apache.spark.streaming.{Seconds, StreamingContext}
    import org.apache.spark.streaming.kafka.KafkaUtils

    /**
     * Windowed word count over log lines read from Kafka.
     *
     * @author Administrator
     */
    object KafkaDataTest {
      def main(args: Array[String]): Unit = {
        // Reduce log noise from Spark and Jetty
        Logger.getLogger("org.apache.spark").setLevel(Level.WARN)
        Logger.getLogger("org.eclipse.jetty.server").setLevel(Level.ERROR)

        // local[2] runs the job locally for this test
        val conf = new SparkConf().setAppName("stocker").setMaster("local[2]")
        val sc = new SparkContext(conf)
        val ssc = new StreamingContext(sc, Seconds(1)) // 1-second batches

        // Kafka configurations
        val topics = Set("mytopic")
        val brokers = "spark1:9092,spark2:9092,spark3:9092"
        // The direct stream only needs the broker list
        val kafkaParams = Map[String, String]("metadata.broker.list" -> brokers)

        // Create a direct stream
        val kafkaStream = KafkaUtils.createDirectStream[String, String, StringDecoder, StringDecoder](ssc, kafkaParams, topics)

        // Split each message value into words and pair every word with 1
        val urlClickLogPairsDStream = kafkaStream.flatMap(_._2.split(" ")).map((_, 1))

        // Sum the counts over a 60-second window, recomputed every 5 seconds
        val urlClickCountDaysDStream = urlClickLogPairsDStream.reduceByKeyAndWindow(
          (v1: Int, v2: Int) => v1 + v2,
          Seconds(60),
          Seconds(5))

        urlClickCountDaysDStream.print()

        ssc.start()
        ssc.awaitTermination()
      }
    }

Spark Streaming reads the data from the Kafka cluster and, every 5 seconds, computes the word count over the most recent 60 seconds.
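To make the window semantics concrete without a running cluster, the computation can be approximated in plain Scala. This is a simplified sketch, not Spark's implementation: `SlidingWindowSketch` and `windowedWordCount` are hypothetical names, the timestamps and lines are made-up sample data, and events are filtered by timestamp directly rather than grouped into 1-second batches as Spark does.

```scala
object SlidingWindowSketch {
  /** Word counts over the lines whose timestamp falls in (nowSec - windowSec, nowSec]. */
  def windowedWordCount(
      events: Seq[(Long, String)],
      nowSec: Long,
      windowSec: Long = 60): Map[String, Int] =
    events
      .filter { case (ts, _) => ts > nowSec - windowSec && ts <= nowSec }
      .flatMap { case (_, line) => line.split(" ").toSeq } // same split as the streaming job
      .filter(_.nonEmpty)
      .map((_, 1))
      .groupBy(_._1)
      .map { case (word, pairs) => word -> pairs.map(_._2).sum }

  def main(args: Array[String]): Unit = {
    // Hypothetical (arrival time in seconds, log line) pairs.
    val events = Seq((2L, "spark hadoop"), (12L, "spark hive"))
    // Evaluate every 5 seconds, mirroring the slide interval of the job.
    for (now <- 5L to 75L by 5L)
      println(s"t=$now ${windowedWordCount(events, now)}")
  }
}
```

In this sketch a line contributes to every evaluation for 60 seconds after it arrives and then drops out of the window, which is why counts first accumulate across successive outputs and later shrink.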

5. Test results

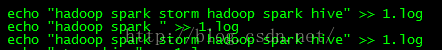

Append log entries to the log file three times in succession.
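One way to script these appends is sketched below. It is an assumption-laden example rather than the procedure used in the original test: `LogAppender` and `appendLine` are hypothetical names, the 10-second pause is arbitrary, and the sample lines are invented (the real input appears only in the screenshot), chosen so that their cumulative word counts agree with the results reported in this section. The file path is the one tailed by the exec source in collect.conf.

```scala
import java.nio.charset.StandardCharsets
import java.nio.file.{Files, Path, Paths, StandardOpenOption}

object LogAppender {
  /** Appends one line plus a trailing newline, creating the file if it does not exist. */
  def appendLine(path: Path, line: String): Unit =
    Files.write(
      path,
      (line + "\n").getBytes(StandardCharsets.UTF_8),
      StandardOpenOption.CREATE,
      StandardOpenOption.APPEND)

  def main(args: Array[String]): Unit = {
    val log = Paths.get("/opt/modules/tmpdata/logs/1.log")
    // Hypothetical sample lines, not the article's actual input.
    val samples = Seq(
      "hive spark hadoop storm spark hadoop",
      "spark hadoop",
      "hive spark hadoop storm spark hadoop")
    samples.foreach { line =>
      appendLine(log, line)
      Thread.sleep(10000) // keep all three appends inside the 60-second window
    }
  }
}
```

Because `tail -F` follows the file as it grows, each appended line should reach the receiving agent shortly after it is written.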

The Spark Streaming output is as follows:

    (hive,1)
    (spark,2)
    (hadoop,2)
    (storm,1)
    ---------------------------------------
    (hive,1)
    (spark,3)
    (hadoop,3)
    (storm,1)
    ---------------------------------------
    (hive,2)
    (spark,5)
    (hadoop,5)
    (storm,2)

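The results match expectations: because all three appends fall within the same 60-second window, the counts accumulate from one output to the next, which demonstrates how the Spark Streaming sliding window behaves.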

That concludes this walkthrough of real-time stream processing with Flume, Kafka, and Spark Streaming.
