This article walks through installing and deploying Hadoop 2.6 with JDK 8 from the JAR (tarball) distribution. It is shared as a reference for readers who have not done this before.

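Hadoop installation and deployment methods fall into three categories:

I. Automated installation and deployment

Ambari: http://ambari.apache.org/, open-sourced by Hortonworks.

Minos: https://github.com/XiaoMi/minos, open-sourced by the Chinese company Xiaomi (presumably to turn everyone's phones into a distributed cluster, haha).

Cloudera Manager (commercial, but free when the number of nodes is very small. A smart strategy, and it is very easy to use).

II. Installation from RPM packages

Apache Hadoop does not provide them.

HDP and CDH do.

III. Installation from JAR packages

Available for every version.

This is the most flexible approach, because you can swap in any version you need. The drawback is that it takes a lot of manual work and is not very automated.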

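Hadoop 2.0 installation and deployment workflow

Step 1: Prepare the hardware (a Linux operating system; my machine runs Fedora 21 Workstation, and the steps also apply to CentOS)

Step 2: Prepare the software packages and install the base software (mainly the JDK; I used JDK 8, the latest at the time of writing)

Step 3: Distribute the Hadoop package to the same directory on every node and extract it

Step 4: Edit the configuration files (critical!)

Step 5: Start the services (critical!)

Step 6: Verify that startup succeeded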
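Step 3 lends itself to a small script on a multi-node cluster. The following is only a sketch under assumptions that are not part of this article: passwordless SSH to every node already works, and the host names `node1` and `node2`, the target directory `/opt/servers`, the helper names `run` and `distribute_hadoop`, and the `DRY_RUN` switch are all made up for illustration.

```shell
#!/bin/sh
# Sketch: copy the Hadoop tarball to the same directory on every node and unpack it.
# Assumptions (not from the article): passwordless SSH works and the host names
# you pass in are your own machines. Set DRY_RUN=1 to print commands only.

run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"          # show the command instead of executing it
    else
        "$@"
    fi
}

# usage: distribute_hadoop <tarball> <target_dir> <host>...
distribute_hadoop() {
    tarball=$1
    target=$2
    shift 2
    for host in "$@"; do
        run ssh "$host" "mkdir -p $target"
        run scp "$tarball" "$host:$target/"
        run ssh "$host" "tar -xzf $target/$(basename "$tarball") -C $target"
    done
}

# Example (prints the six commands without touching any host):
#   DRY_RUN=1 distribute_hadoop hadoop-2.6.0.tar.gz /opt/servers node1 node2
```

Running it once with `DRY_RUN=1` is a cheap way to review the commands before they touch any machine.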

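Hadoop distributions:

Apache Hadoop

The original version; all other distributions are built on it

0.23.x: unstable

2.x: stable

HDP

The Hortonworks distribution

CDH

Cloudera's Hadoop distribution

Comes in two versions, CDH4 and CDH5

CDH4: based on Apache Hadoop 0.23.0

CDH5: based on Apache Hadoop 2.2.0

Compatibility across distributions

Architecture, deployment, and usage are the same; the differences lie only in some internal implementation details.

CDH can be installed with the steps below; see the official site for details.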

Ideal for trying Cloudera enterprise data hub, the installer will download Cloudera Manager from Cloudera's website and guide you through the setup process.

Pre-requisites: multiple, Internet-connected Linux machines, with SSH access, and significant free space in /var and /opt.

    $ wget http://archive.cloudera.com/cm5/installer/latest/cloudera-manager-installer.bin
    $ chmod u+x cloudera-manager-installer.bin
    $ sudo ./cloudera-manager-installer.bin

Users setting up Cloudera enterprise data hub for production use are encouraged to follow the installation instructions in our documentation. These instructions suggest explicitly provisioning the databases used by Cloudera Manager and walk through explicitly which packages need installation.

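The rest of this article focuses on installing and configuring Apache Hadoop 2.6:

1. First, install the JDK. Note: choose the Oracle JDK. I used JDK 8.

Do not rely on the OpenJDK that comes preinstalled with Fedora or openSUSE. In my experience the default installation did not even include jps (on these distributions the JDK tools usually live in a separate -devel package).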
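Because later steps depend on `jps`, it helps to confirm up front that the JDK tools are reachable. This is a minimal sketch; the helper names `have_tool` and `check_jdk` are hypothetical, and the check only looks at `PATH`, not at which vendor's JDK is installed.

```shell
#!/bin/sh
# Sketch: confirm that the JDK tools used later in this walkthrough are on PATH.
# have_tool and check_jdk are illustrative helper names.

have_tool() {
    command -v "$1" >/dev/null 2>&1
}

check_jdk() {
    status=0
    for tool in java javac jps; do
        if have_tool "$tool"; then
            echo "found:   $tool -> $(command -v "$tool")"
        else
            echo "missing: $tool"
            status=1
        fi
    done
    return $status
}

# Example:
#   check_jdk || echo "Install a full JDK or fix PATH before continuing"
```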

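2. Download the latest Hadoop 2.6 from the Apache website, then extract it:

    [neil@neilhost Servers]$ tar zxvf hadoop-2.6.0.tar.gz
    hadoop-2.6.0/
    hadoop-2.6.0/etc/
    hadoop-2.6.0/etc/hadoop/
    hadoop-2.6.0/etc/hadoop/hdfs-site.xml
    hadoop-2.6.0/etc/hadoop/hadoop-metrics2.properties
    hadoop-2.6.0/etc/hadoop/container-executor.cfg
    ...
    ...
    tar: Unexpected EOF in archive
    tar: Unexpected EOF in archive
    tar: Error is not recoverable: exiting now

The extraction ended with an error. I have heard that others hit the same thing, and in my case it did not affect the later steps. Keep in mind, though, that "Unexpected EOF in archive" normally means the archive is truncated, so downloading the file again is the safer choice.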
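A truncated download can be caught before extraction. The sketch below assumes GNU `sha256sum` and `gzip` are installed; `archive_intact` and `verify_sha256` are hypothetical helper names, and the expected digest has to come from the download site, since the article does not give one.

```shell
#!/bin/sh
# Sketch: two checks to run on a downloaded .tar.gz before extracting it.

# usage: archive_intact <file.tar.gz>
# gzip -t reads the whole compressed stream and fails on a truncated file.
archive_intact() {
    gzip -t "$1" 2>/dev/null
}

# usage: verify_sha256 <file> <expected_hex_digest>
verify_sha256() {
    actual=$(sha256sum "$1" | awk '{print $1}')
    # Published digests are often upper case, so normalise before comparing.
    expected=$(printf '%s' "$2" | tr 'A-F' 'a-f')
    [ "$actual" = "$expected" ]
}

# Example:
#   archive_intact hadoop-2.6.0.tar.gz \
#       && verify_sha256 hadoop-2.6.0.tar.gz "<digest from the download page>" \
#       && tar -xzf hadoop-2.6.0.tar.gz
```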

3. Configure /etc/hosts

Add the line 127.0.1.1 YARN001. Using 127.0.0.1 instead also works.

    127.0.0.1   localhost.localdomain localhost
    ::1         localhost6.localdomain6 localhost6
    127.0.1.1   YARN001
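To confirm that the new name is really mapped, the hosts file can be checked from the shell. This is a sketch only; `hosts_maps` is a hypothetical helper that ignores full-line comments and looks for the name among the aliases of any entry.

```shell
#!/bin/sh
# Sketch: check whether a hosts file maps a given host name.

# usage: hosts_maps <hosts_file> <hostname>
hosts_maps() {
    awk -v name="$2" '
        /^[[:space:]]*#/ { next }        # skip comment lines
        { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit !found }              # exit status 0 only if the name was seen
    ' "$1"
}

# Example:
#   hosts_maps /etc/hosts YARN001 && echo "YARN001 is mapped"
#   getent hosts YARN001        # on glibc systems, shows what the resolver returns
```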

4. Edit the Hadoop configuration files:

4.1 etc/hadoop/hadoop-env.sh in the extracted directory

Set JAVA_HOME to the path of your JDK:

    # The java implementation to use.
    export JAVA_HOME=/usr/java/jdk1.8.0_40/
    #${JAVA_HOME}

4.2 Add etc/hadoop/mapred-site.xml in the extracted directory

How should this file be written? No need to worry: there is a template, etc/hadoop/mapred-site.xml.template, and copying it gives you the basic format.

Then add a property inside the configuration element of etc/hadoop/mapred-site.xml. The result looks like this:

    <?xml version="1.0"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at
        http://www.apache.org/licenses/LICENSE-2.0
      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <!-- Put site-specific property overrides in this file. -->
    <configuration>
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>
    </configuration>
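For repeatable setups, the same file can be written from a script instead of edited by hand. This is a sketch, not the article's method: `write_mapred_site` is a hypothetical helper, it omits the license comment for brevity, and it keeps a `.bak` copy if a file is already present.

```shell
#!/bin/sh
# Sketch: write a minimal mapred-site.xml that selects YARN as the framework.

# usage: write_mapred_site <conf_dir>
write_mapred_site() {
    if [ -f "$1/mapred-site.xml" ]; then
        # Keep the previous version so the change can be undone.
        cp "$1/mapred-site.xml" "$1/mapred-site.xml.bak" || return 1
    fi
    cat > "$1/mapred-site.xml" <<'EOF'
<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>
EOF
}

# Example, run from the extracted Hadoop directory:
#   write_mapred_site etc/hadoop
```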

4.3 etc/hadoop/core-site.xml in the extracted directory

Add the following content:

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at
        http://www.apache.org/licenses/LICENSE-2.0
      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <!-- Put site-specific property overrides in this file. -->
    <configuration>
      <property>
        <name>fs.default.name</name>
        <value>hdfs://YARN001:8020</value>
      </property>
    </configuration>

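Note: YARN001 in the value is the host name added to /etc/hosts earlier.

If you did not add it, you can write hdfs://localhost:8020 or hdfs://127.0.0.1:8020 here, or use the machine's own IP address.

The port can be any free port. I used 8020; another value such as 9001 works as well.

The key fs.default.name is the older name of this setting. Hadoop 2.x still accepts it but treats it as deprecated in favor of fs.defaultFS.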
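When several site files are involved, it is handy to read a value back and confirm what was actually written. The sketch below is a plain-text lookup, not an XML parser: `get_property` is a hypothetical helper and assumes the simple layout used in this article, where each `<value>` follows its `<name>` and at most one property sits on a line.

```shell
#!/bin/sh
# Sketch: print the value of one property from a Hadoop *-site.xml file.

# usage: get_property <site_xml> <property_name>
get_property() {
    awk -v want="$2" '
        {
            line = $0
            # Remember the most recent <name>...</name> seen so far.
            if (match(line, /<name>[^<]*<\/name>/)) {
                name = substr(line, RSTART + 6, RLENGTH - 13)
                line = substr(line, RSTART + RLENGTH)
            }
            # The first <value> after the wanted name is the answer.
            if (name == want && match(line, /<value>[^<]*<\/value>/)) {
                print substr(line, RSTART + 7, RLENGTH - 15)
                exit
            }
        }
    ' "$1"
}

# Example:
#   get_property etc/hadoop/core-site.xml fs.default.name
```

Hadoop also ships its own lookup, `bin/hdfs getconf -confKey <key>`, which reports the effective value after defaults and deprecated names are resolved.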

4.4 etc/hadoop/hdfs-site.xml in the extracted directory

The first setting is dfs.replication, the number of block replicas. It is set to 1 here because this is a single-node setup. The default is 3, which a single DataNode cannot satisfy; in my experience leaving it at 3 leads to errors.

The second and third settings are the storage directories of the NameNode and the DataNode. If you leave them unset, they default to locations under the system /tmp directory. If you simulate the environment in a virtual machine, /tmp is cleared on every reboot and the data is lost, so I recommend setting both. The directories do not have to exist beforehand; Hadoop creates them from this configuration when it runs.

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at
        http://www.apache.org/licenses/LICENSE-2.0
      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <!-- Put site-specific property overrides in this file. -->
    <configuration>
      <property>
        <name>dfs.replication</name>
        <value>1</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>/home/neil/Servers/hadoop-2.6.0/dfs/name</value>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>/home/neil/Servers/hadoop-2.6.0/dfs/data</value>
      </property>
    </configuration>
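The format log in step 5.0 warns that these paths "should be specified as a URI". As a sketch only, the helpers below turn an absolute path into a `file://` URI and optionally pre-create the two directories to catch permission problems early. `to_file_uri` and `prepare_dfs_dirs` are hypothetical names, and pre-creating the directories is optional because Hadoop creates them itself.

```shell
#!/bin/sh
# Sketch: produce file:// URIs for dfs.namenode.name.dir / dfs.datanode.data.dir.

# usage: to_file_uri <absolute_path>
to_file_uri() {
    case $1 in
        /*) printf 'file://%s\n' "$1" ;;
        *)  echo "expected an absolute path: $1" >&2
            return 1 ;;
    esac
}

# usage: prepare_dfs_dirs <base_dir>
# Creates <base_dir>/dfs/name and <base_dir>/dfs/data and prints their URIs.
prepare_dfs_dirs() {
    for sub in name data; do
        mkdir -p "$1/dfs/$sub" || return 1
        if [ ! -w "$1/dfs/$sub" ]; then
            echo "not writable: $1/dfs/$sub" >&2
            return 1
        fi
        to_file_uri "$1/dfs/$sub" || return 1
    done
}

# Example:
#   prepare_dfs_dirs /home/neil/Servers/hadoop-2.6.0
```

The printed URIs can be pasted into the two `<value>` elements in place of the bare paths.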

4.5 etc/hadoop/yarn-site.xml in the extracted directory

    <?xml version="1.0"?>
    <!--
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at
        http://www.apache.org/licenses/LICENSE-2.0
      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <configuration>
      <!-- Site specific YARN configuration properties -->
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
    </configuration>

4.6 One more file that you may or may not change: etc/hadoop/slaves in the extracted directory

You can change localhost to the YARN001 name set up earlier, or to 127.0.0.1.

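5. Now for the actual operation.

There are many ways to start Hadoop, and the sbin directory contains many scripts. One of them, start-all.sh, does everything in one go, but I do not recommend it. It is convenient and starts DFS, YARN, and so on automatically, but if some intermediate step fails, the services end up only partially started.

The other scripts in sbin, such as start-dfs.sh and start-yarn.sh, do not fully solve this problem either. For example, starting DFS means starting both the NameNode and the DataNodes, and it becomes troublesome if one of them fails to start. So I recommend starting the daemons one step at a time.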
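The step-by-step approach can be wrapped in a small helper that starts one daemon and then confirms it with `jps` before moving on. This is a sketch under assumptions: `jps_has` and `start_daemon` are hypothetical names, the `START_WAIT` delay of 3 seconds is an arbitrary guess, and the script paths are the sbin scripts used later in this article.

```shell
#!/bin/sh
# Sketch: start Hadoop daemons one at a time and stop at the first failure.

# usage: jps | jps_has <JvmName>
# Succeeds if the jps listing on stdin contains a process with exactly that name.
jps_has() {
    awk -v want="$1" '$2 == want { found = 1 } END { exit !found }'
}

# usage: start_daemon <script> <daemon> <ExpectedJvmName>
start_daemon() {
    "$1" start "$2" || return 1
    sleep "${START_WAIT:-3}"       # give the JVM a moment to come up
    if jps | jps_has "$3"; then
        echo "$3 is up"
    else
        echo "$3 did not start; check the logs directory" >&2
        return 1
    fi
}

# Example, run from the extracted Hadoop directory:
#   start_daemon sbin/hadoop-daemon.sh namenode NameNode &&
#   start_daemon sbin/hadoop-daemon.sh datanode DataNode &&
#   start_daemon sbin/yarn-daemon.sh resourcemanager ResourceManager &&
#   start_daemon sbin/yarn-daemon.sh nodemanager NodeManager
```

Matching on the exact second column means `SecondaryNameNode` is not mistaken for `NameNode`.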

5.0 Before using Hadoop for the first time, the NameNode has to be formatted.

    [neil@neilhost hadoop-2.6.0]$ ll bin
    total 440
    -rwxr-xr-x. 1 neil neil 159183 Nov 14 05:20 container-executor
    -rwxr-xr-x. 1 neil neil   5479 Nov 14 05:20 hadoop
    -rwxr-xr-x. 1 neil neil   8298 Nov 14 05:20 hadoop.cmd
    -rwxr-xr-x. 1 neil neil  11142 Nov 14 05:20 hdfs
    -rwxr-xr-x. 1 neil neil   6923 Nov 14 05:20 hdfs.cmd
    -rwxr-xr-x. 1 neil neil   5205 Nov 14 05:20 mapred
    -rwxr-xr-x. 1 neil neil   5949 Nov 14 05:20 mapred.cmd
    -rwxr-xr-x. 1 neil neil   1776 Nov 14 05:20 rcc
    -rwxr-xr-x. 1 neil neil 201659 Nov 14 05:20 test-container-executor
    -rwxr-xr-x. 1 neil neil  11380 Nov 14 05:20 yarn
    -rwxr-xr-x. 1 neil neil  10895 Nov 14 05:20 yarn.cmd
    [neil@neilhost hadoop-2.6.0]$ bin/hadoop namenode -format
    DEPRECATED: Use of this script to execute hdfs command is deprecated.
    Instead use the hdfs command for it.
    15/04/01 20:57:32 INFO namenode.NameNode: STARTUP_MSG:
    /************************************************************
    STARTUP_MSG: Starting NameNode
    STARTUP_MSG: host = neilhost.neildomain/192.168.1.101
    STARTUP_MSG: args = [-format]
    STARTUP_MSG: version = 2.6.0
    STARTUP_MSG: classpath = /home/neil/Servers/hadoop-2.6.0/etc/hadoop:/home/neil/Servers/hadoop-2.6.0/share/hadoop/common/lib/java-xmlbuilder-0.4.jar:[... remaining classpath entries omitted ...]:/contrib/capacity-scheduler/*.jar
    STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r e3496499ecb8d220fba99dc5ed4c99c8f9e33bb1; compiled by 'jenkins' on 2014-11-13T21:10Z
    STARTUP_MSG: java = 1.8.0_40
    ************************************************************/
    15/04/01 20:57:32 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]
    15/04/01 20:57:32 INFO namenode.NameNode: createNameNode [-format]
    15/04/01 20:57:32 WARN common.Util: Path /home/neil/Servers/hadoop-2.6.0/dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.
    15/04/01 20:57:32 WARN common.Util: Path /home/neil/Servers/hadoop-2.6.0/dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.
    Formatting using clusterid: CID-fb38ac3b-414f-4643-b62d-2e9897b5db27
    15/04/01 20:57:32 INFO namenode.FSNamesystem: No KeyProvider found.
    15/04/01 20:57:33 INFO namenode.FSNamesystem: fsLock is fair:true
    15/04/01 20:57:33 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000
    15/04/01 20:57:33 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: The block deletion will start around 2015 Apr 01 20:57:33
    15/04/01 20:57:33 INFO util.GSet: Computing capacity for map BlocksMap
    15/04/01 20:57:33 INFO util.GSet: VM type = 64-bit
    15/04/01 20:57:33 INFO util.GSet: 2.0% max memory 889 MB = 17.8 MB
    15/04/01 20:57:33 INFO util.GSet: capacity = 2^21 = 2097152 entries
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: defaultReplication = 1
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: maxReplication = 512
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: minReplication = 1
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: maxReplicationStreams = 2
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: shouldCheckForEnoughRacks = false
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: encryptDataTransfer = false
    15/04/01 20:57:33 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000
    15/04/01 20:57:33 INFO namenode.FSNamesystem: fsOwner = neil (auth:SIMPLE)
    15/04/01 20:57:33 INFO namenode.FSNamesystem: supergroup = supergroup
    15/04/01 20:57:33 INFO namenode.FSNamesystem: isPermissionEnabled = true
    15/04/01 20:57:33 INFO namenode.FSNamesystem: HA Enabled: false
    15/04/01 20:57:33 INFO namenode.FSNamesystem: Append Enabled: true
    15/04/01 20:57:33 INFO util.GSet: Computing capacity for map INodeMap
    15/04/01 20:57:33 INFO util.GSet: VM type = 64-bit
    15/04/01 20:57:33 INFO util.GSet: 1.0% max memory 889 MB = 8.9 MB
    15/04/01 20:57:33 INFO util.GSet: capacity = 2^20 = 1048576 entries
    15/04/01 20:57:33 INFO namenode.NameNode: Caching file names occuring more than 10 times
    15/04/01 20:57:33 INFO util.GSet: Computing capacity for map cachedBlocks
    15/04/01 20:57:33 INFO util.GSet: VM type = 64-bit
    15/04/01 20:57:33 INFO util.GSet: 0.25% max memory 889 MB = 2.2 MB
    15/04/01 20:57:33 INFO util.GSet: capacity = 2^18 = 262144 entries
    15/04/01 20:57:33 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033
    15/04/01 20:57:33 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0
    15/04/01 20:57:33 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000
    15/04/01 20:57:33 INFO namenode.FSNamesystem: Retry cache on namenode is enabled
    15/04/01 20:57:33 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis
    15/04/01 20:57:33 INFO util.GSet: Computing capacity for map NameNodeRetryCache
    15/04/01 20:57:33 INFO util.GSet: VM type = 64-bit
    15/04/01 20:57:33 INFO util.GSet: 0.029999999329447746% max memory 889 MB = 273.1 KB
    15/04/01 20:57:33 INFO util.GSet: capacity = 2^15 = 32768 entries
    15/04/01 20:57:33 INFO namenode.NNConf: ACLs enabled? false
    15/04/01 20:57:33 INFO namenode.NNConf: XAttrs enabled? true
    15/04/01 20:57:33 INFO namenode.NNConf: Maximum size of an xattr: 16384
    15/04/01 20:57:33 INFO namenode.FSImage: Allocated new BlockPoolId: BP-546681589-192.168.1.101-1427893053846
    15/04/01 20:57:34 INFO common.Storage: Storage directory /home/neil/Servers/hadoop-2.6.0/dfs/name has been successfully formatted.
    15/04/01 20:57:34 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0
    15/04/01 20:57:34 INFO util.ExitUtil: Exiting with status 0
    15/04/01 20:57:34 INFO namenode.NameNode: SHUTDOWN_MSG:
    /************************************************************
    SHUTDOWN_MSG: Shutting down NameNode at neilhost.neildomain/192.168.1.101
    ************************************************************/
    [neil@neilhost hadoop-2.6.0]$

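Be extremely careful here: this step is only for the first deployment of a new cluster, because it wipes all data on DFS. If you run it carelessly in a production environment, you will have to live with the consequences!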
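One way to lower that risk is to refuse to format when the NameNode directory already holds metadata. The sketch below relies on the fact that a formatted NameNode directory contains `current/VERSION`; `is_formatted`, `safe_format`, and the `HDFS_CMD` override are hypothetical names, and the wrapper calls `bin/hdfs namenode -format`, the form that the deprecation notice in the log above points to.

```shell
#!/bin/sh
# Sketch: only format the NameNode when its storage directory is still empty.

# usage: is_formatted <name_dir>
is_formatted() {
    [ -f "$1/current/VERSION" ]
}

# usage: safe_format <name_dir>
# <name_dir> must be the directory configured as dfs.namenode.name.dir.
safe_format() {
    if is_formatted "$1"; then
        echo "refusing to format: $1 already holds NameNode metadata" >&2
        return 1
    fi
    "${HDFS_CMD:-bin/hdfs}" namenode -format
}

# Example, run from the extracted Hadoop directory:
#   safe_format /home/neil/Servers/hadoop-2.6.0/dfs/name
```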

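At this point a new dfs directory has appeared under the Hadoop directory, with a name directory inside it. This is the location configured earlier in etc/hadoop/hdfs-site.xml.

    [neil@neilhost hadoop-2.6.0]$ ll dfs
    total 4
    drwxrwxr-x. 3 neil neil 4096 Apr  1 20:57 name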

5.1 Start the NameNode

Use hadoop-daemon.sh under sbin to start the NameNode.

Once it is started, the JDK's jps command lists the running JVM processes. Note: I use the Fedora/CentOS family, where installing the JDK from an RPM sets everything up. If you are on Ubuntu, or installed the JDK from a tarball, either type the full path to the command or configure the JDK environment variables.

    [neil@neilhost hadoop-2.6.0]$ ^C
    [neil@neilhost hadoop-2.6.0]$ sbin/hadoop-daemon.sh start namenode
    starting namenode, logging to /home/neil/Servers/hadoop-2.6.0/logs/hadoop-neil-namenode-neilhost.neildomain.out
    [neil@neilhost hadoop-2.6.0]$ jps
    4192 Jps
    4117 NameNode

The NameNode has started successfully.

Note: if it does not start, check the NameNode .log file in the logs directory.

    [neil@neilhost hadoop-2.6.0]$ ll logs
    total 36
    -rw-rw-r--. 1 neil neil 31591 Apr  1 21:19 hadoop-neil-namenode-neilhost.neildomain.log
    -rw-rw-r--. 1 neil neil   715 Apr  1 21:13 hadoop-neil-namenode-neilhost.neildomain.out
    -rw-rw-r--. 1 neil neil     0 Apr  1 21:13 SecurityAuth-neil.audit
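When a daemon fails to start, the relevant lines are usually the ERROR or FATAL entries near the end of the .log file. A minimal sketch for pulling them out follows; `log_problems` is a hypothetical helper, and it assumes the log level appears as a separate word on each line, as in Hadoop's default log layout.

```shell
#!/bin/sh
# Sketch: show the most recent ERROR/FATAL lines of a Hadoop daemon log.

# usage: log_problems <logfile> [max_lines]
log_problems() {
    grep -E ' (ERROR|FATAL) ' "$1" | tail -n "${2:-20}"
}

# Example:
#   log_problems logs/hadoop-neil-namenode-neilhost.neildomain.log
```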

5.2 Start the DataNode.

    [neil@neilhost hadoop-2.6.0]$ sbin/hadoop-daemon.sh start datanode
    starting datanode, logging to /home/neil/Servers/hadoop-2.6.0/logs/hadoop-neil-datanode-neilhost.neildomain.out
    [neil@neilhost hadoop-2.6.0]$ jps
    4276 DataNode
    4117 NameNode
    4351 Jps

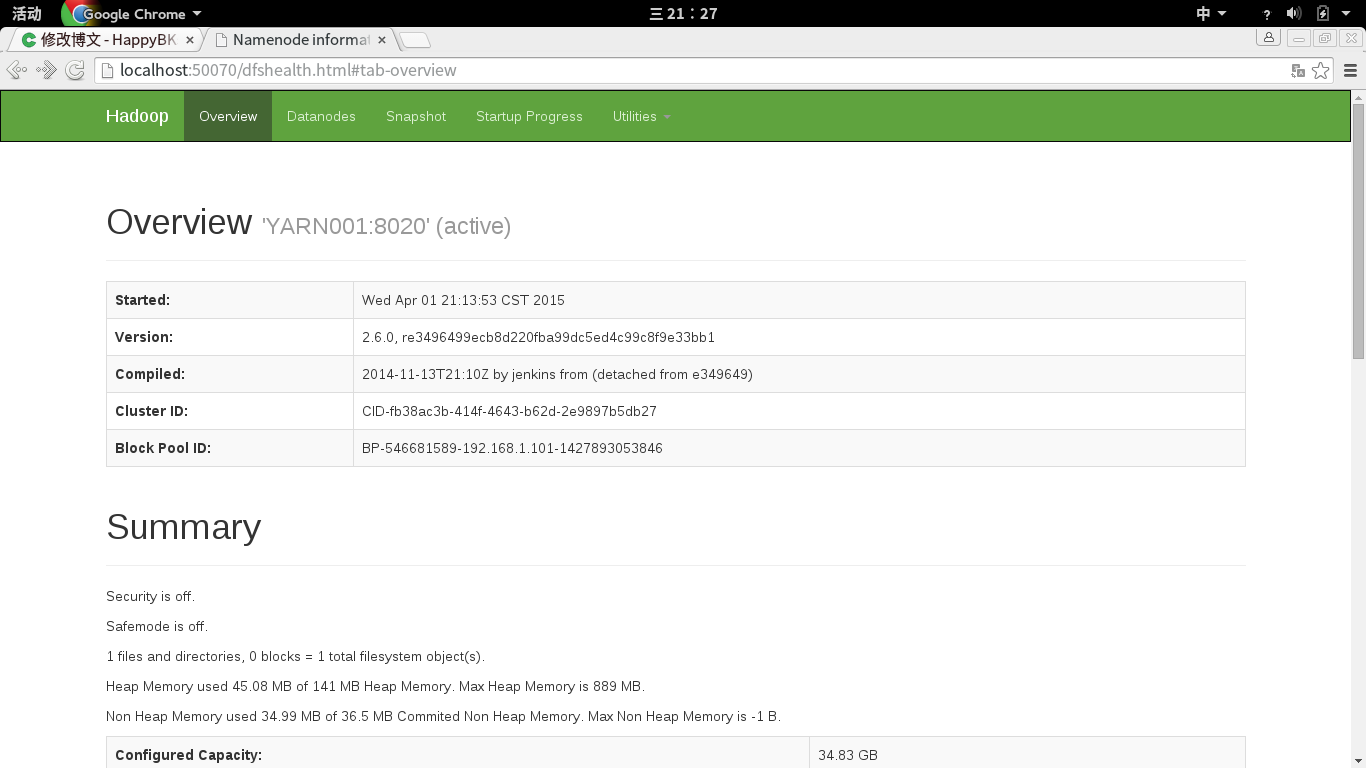

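At this point DFS can also be reached over HTTP in a browser. The default port of the NameNode web UI is 50070.

Any of the following addresses works:

http://yarn001:50070/

http://127.0.0.1:50070/

http://127.0.1.1:50070/

http://localhost:50070/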

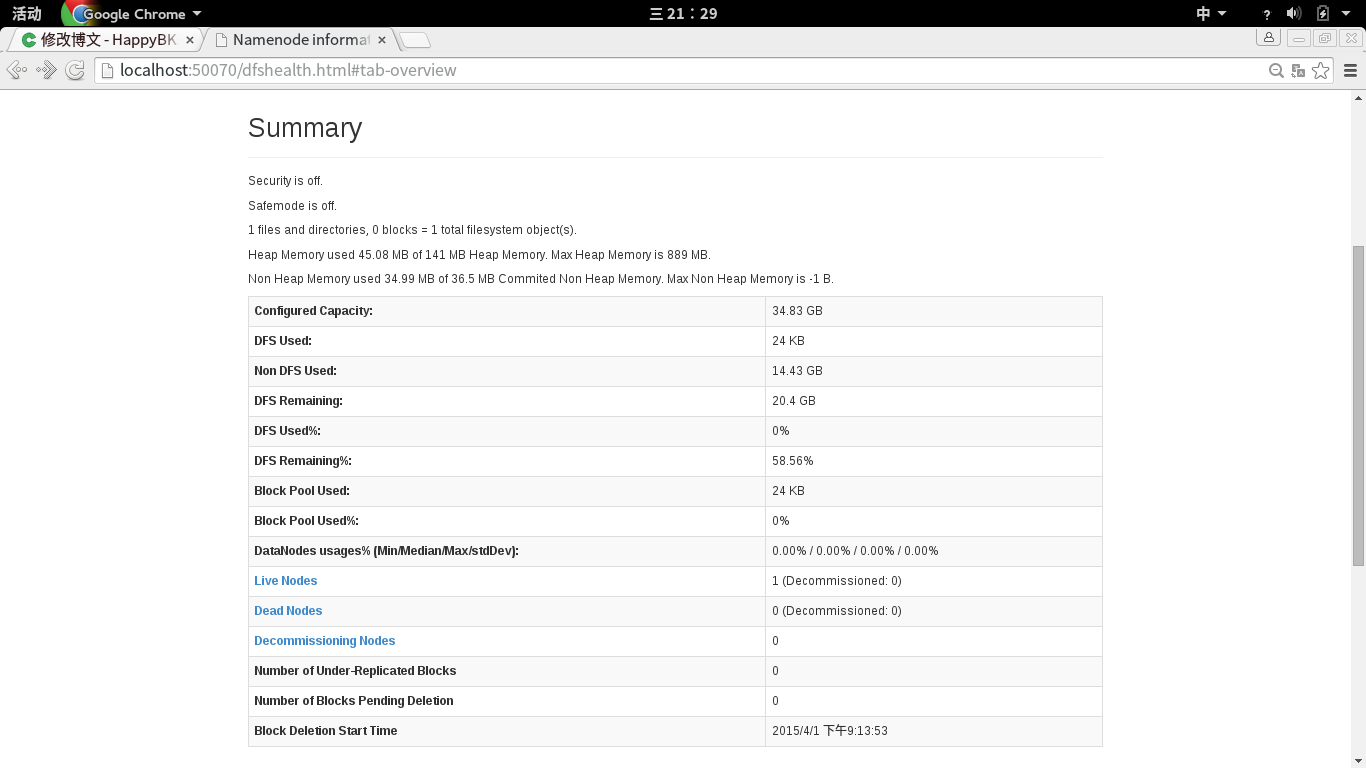

The page gives a summary of the current state.

Here you can see that Live Nodes is 1.

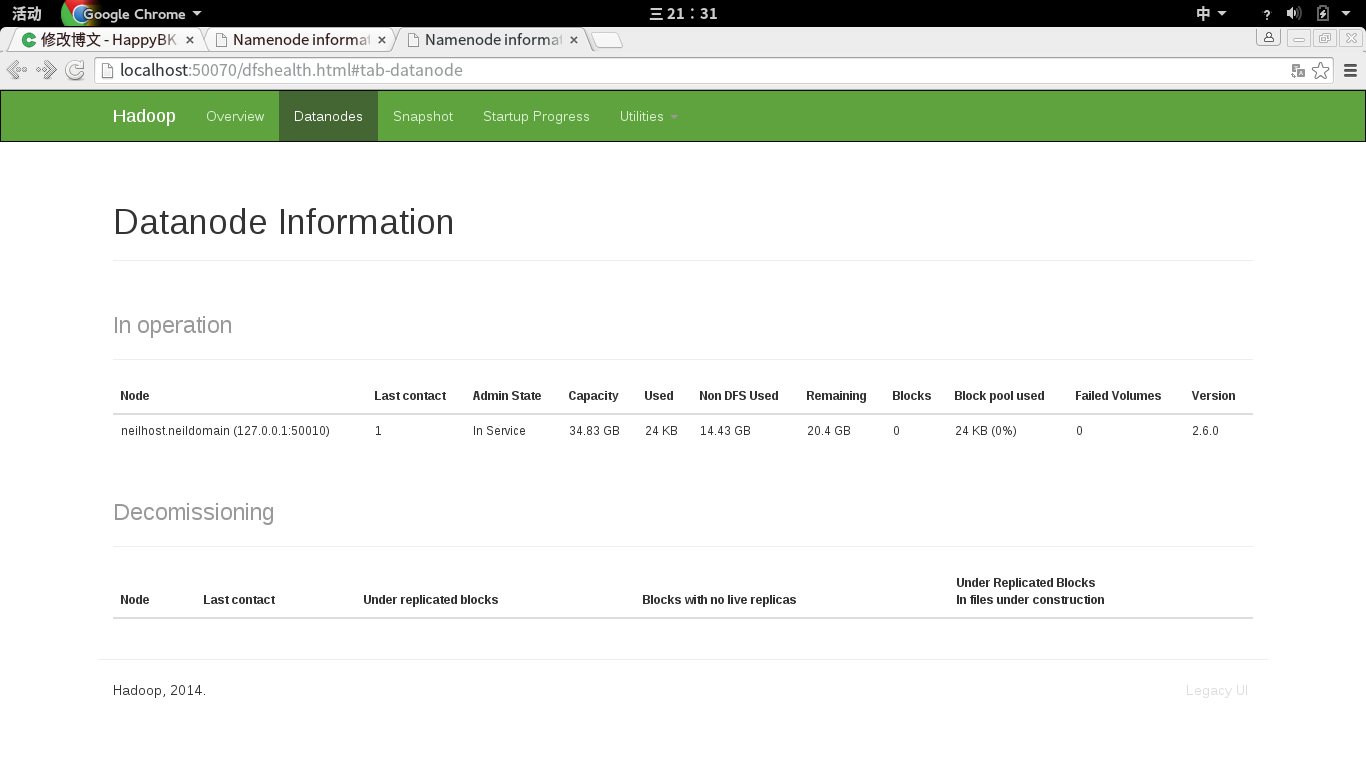

Live Nodes is a link; click it to see the details:

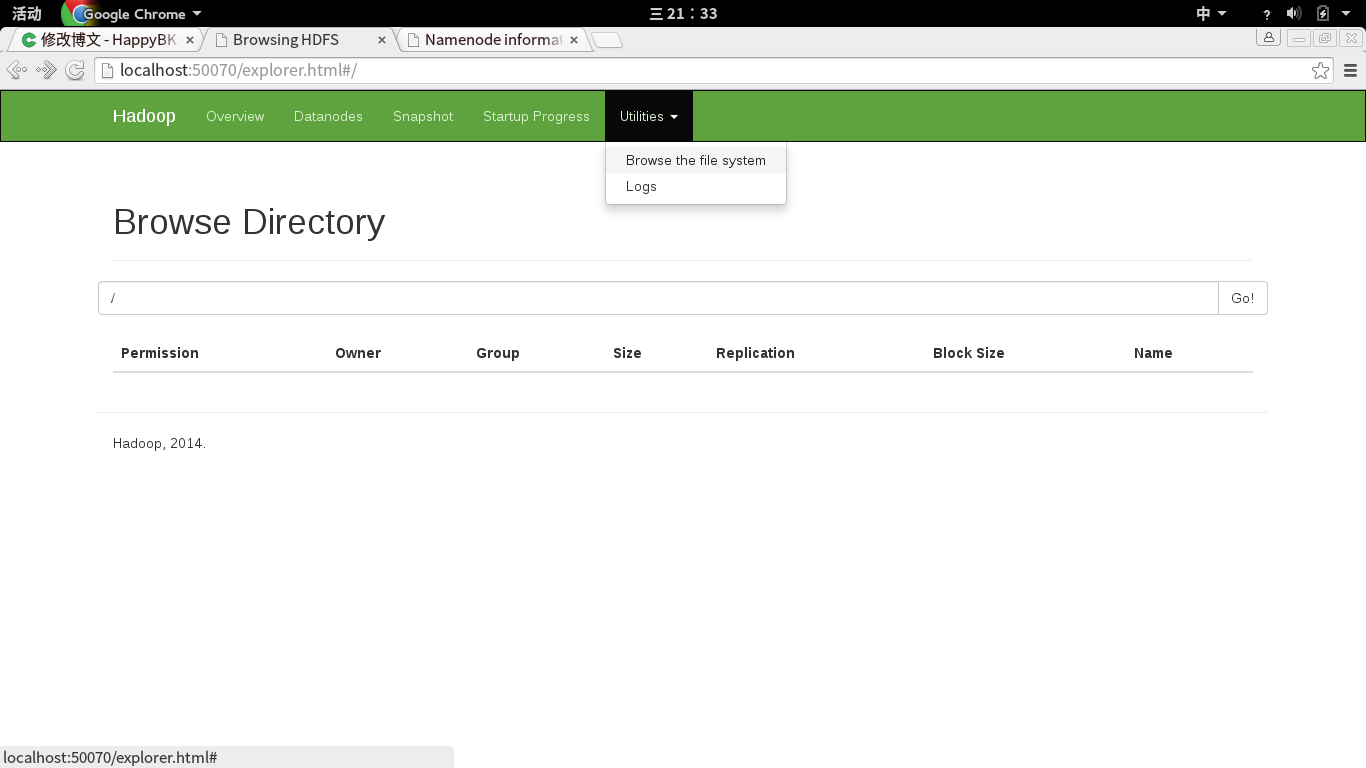

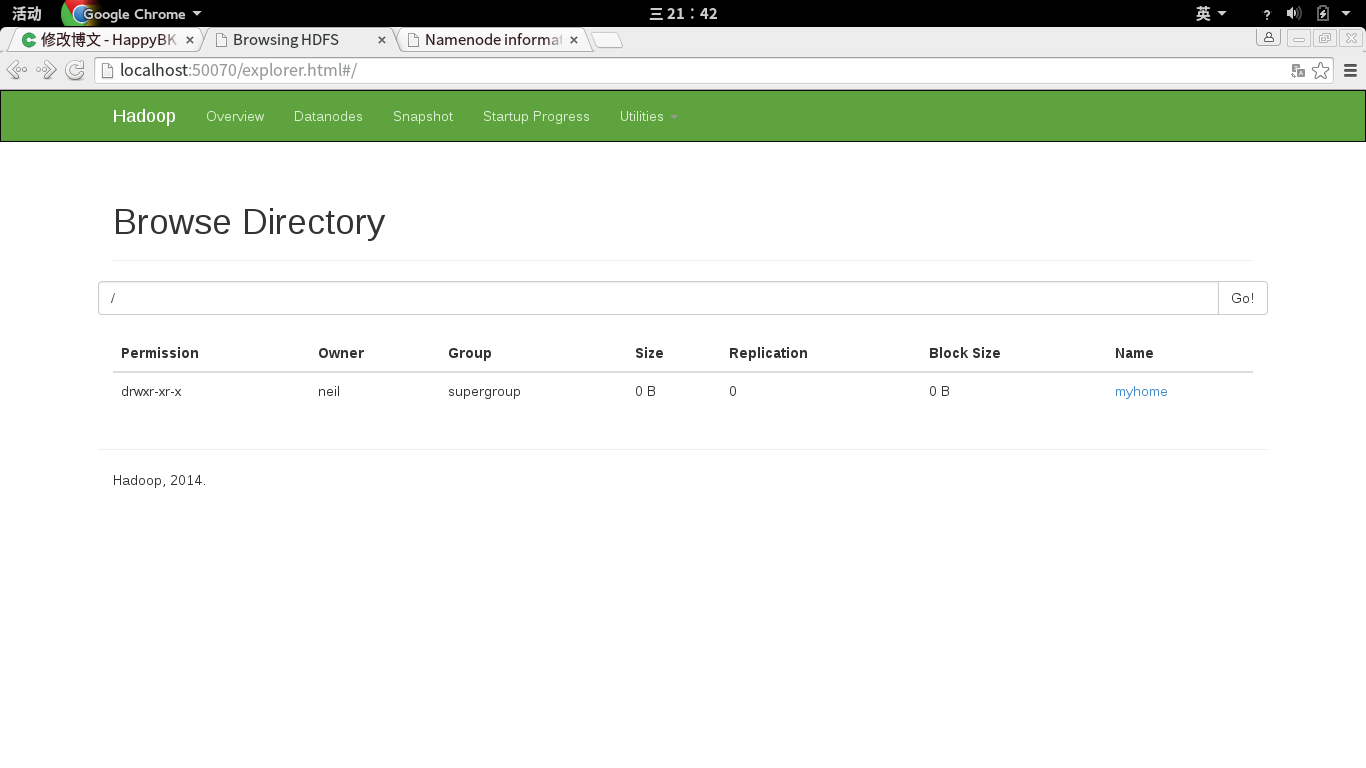

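You can also see which files are on HDFS:

Click Browse the file system in the Utilities menu at the top.

(This article comes from the blog of OSChina user HappyBKs. If you repost it, please credit the source prominently: http://my.oschina.net/u/1156339/blog/396128)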

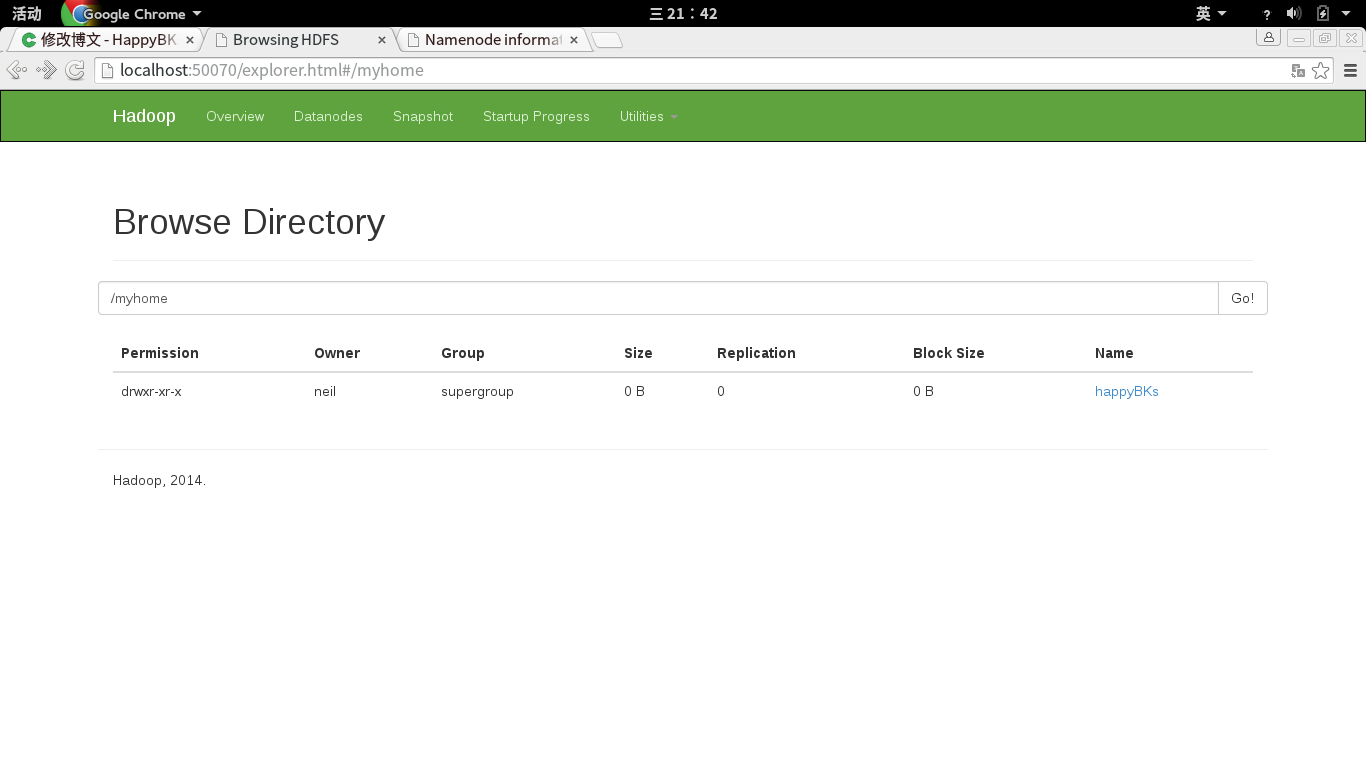

5.4 Now let us try adding directories and files to HDFS.

    [neil@neilhost hadoop-2.6.0]$ bin/hadoop fs -mkdir /myhome
    [neil@neilhost hadoop-2.6.0]$ bin/hadoop fs -mkdir /myhome/happyBKs
    [neil@neilhost hadoop-2.6.0]$

Look at Browse the file system under Utilities again.

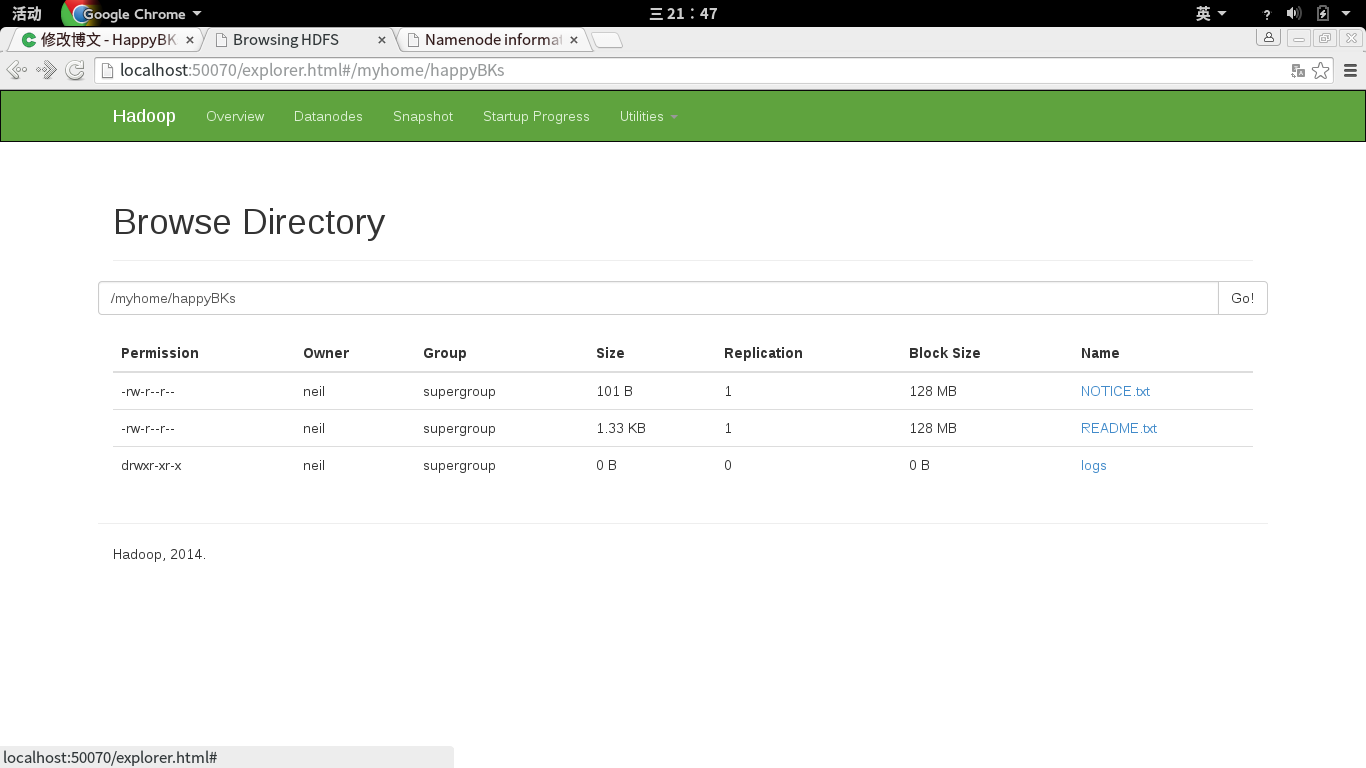

Next, let us add some files.

Here I add two files and an entire directory in one command.

    [neil@neilhost hadoop-2.6.0]$ bin/hadoop fs -put README.txt NOTICE.txt logs/ /myhome/happyBKs

Then check HDFS.
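The upload can also be checked from the command line rather than the web UI. The sketch below strings together three standard `hadoop fs` subcommands (`-mkdir -p`, `-put`, `-ls -R`); the wrapper name `put_and_list` and the `HADOOP_CMD` override are hypothetical and exist only so the sequence can be reused or tried out with a stand-in command.

```shell
#!/bin/sh
# Sketch: create an HDFS directory, upload into it, and list the result.

HADOOP_CMD=${HADOOP_CMD:-bin/hadoop}

# usage: put_and_list <hdfs_dir> <local_path>...
put_and_list() {
    dest=$1
    shift
    "$HADOOP_CMD" fs -mkdir -p "$dest" &&
    "$HADOOP_CMD" fs -put "$@" "$dest" &&
    "$HADOOP_CMD" fs -ls -R "$dest"
}

# Example, run from the extracted Hadoop directory:
#   put_and_list /myhome/happyBKs README.txt NOTICE.txt logs/
```

Note that `-put` fails if a destination file already exists, so rerunning the same upload needs a fresh target directory.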

6. Section 5 started DFS. Now we start YARN. Unlike DFS, YARN does not directly affect stored data, so it can be started in one go, or its daemons can be started separately with yarn-daemon.sh start resourcemanager and yarn-daemon.sh start nodemanager under sbin.

Here I start everything at once with start-yarn.sh.

    [neil@neilhost hadoop-2.6.0]$ sbin/start-yarn.sh
    starting yarn daemons
    starting resourcemanager, logging to /home/neil/Servers/hadoop-2.6.0/logs/yarn-neil-resourcemanager-neilhost.neildomain.out
    localhost: ssh: connect to host localhost port 22: Connection refused
    [neil@neilhost hadoop-2.6.0]$ sudo sbin/start-yarn.sh
    [sudo] password for neil:
    starting yarn daemons
    starting resourcemanager, logging to /home/neil/Servers/hadoop-2.6.0/logs/yarn-root-resourcemanager-neilhost.neildomain.out
    localhost: ssh: connect to host localhost port 22: Connection refused
    [neil@neilhost hadoop-2.6.0]$

As you can see, the startup did not complete because the SSH connection to localhost was refused. Running the script with sudo made no difference.

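The SSH server has to be installed first:

    sudo yum install openssh-server

I found several fixes online that were written for Ubuntu.

http://asyty.iteye.com/blog/1440141 http://blog.sina.com.cn/s/blog_573a052b0102dwxn.html

None of them worked when I tried them on this system:

    /etc/init.d/ssh -start
    bash: /etc/init.d/ssh: No such file or directory

    net start sshd
    Invalid command: net start

The fix that finally worked: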

    [neil@neilhost hadoop-2.6.0]$ service sshd start
    Redirecting to /bin/systemctl start sshd.service
    [neil@neilhost hadoop-2.6.0]$ pstree -p | grep ssh
               |-sshd(7937)
    [neil@neilhost hadoop-2.6.0]$ ssh locahost
    ssh: Could not resolve hostname locahost: Name or service not known
    [neil@neilhost hadoop-2.6.0]$ ssh localhost
    The authenticity of host 'localhost (127.0.0.1)' can't be established.
    ECDSA key fingerprint is 88:17:a4:f2:dd:87:6f:ce:b4:04:07:d5:6c:ca:6c:b1.
    Are you sure you want to continue connecting (yes/no)? y
    Please type 'yes' or 'no': yes
    Warning: Permanently added 'localhost' (ECDSA) to the list of known hosts.
    neil@localhost's password:
    [neil@neilhost ~]$
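The password prompt in this transcript comes back every time the start scripts connect over SSH. A common remedy, which the article itself does not cover, is key-based login to localhost. The sketch below is offered under that assumption: `authorize_key` is a hypothetical helper that appends a public key to an authorized_keys file only if it is not already there and tightens the permissions that sshd normally expects.

```shell
#!/bin/sh
# Sketch: idempotently add a public key to an authorized_keys file.

# usage: authorize_key <public_key_file> <authorized_keys_file>
authorize_key() {
    auth_dir=$(dirname "$2")
    mkdir -p "$auth_dir" || return 1
    chmod 700 "$auth_dir" || return 1
    touch "$2" || return 1
    chmod 600 "$2" || return 1
    # -x: whole-line match, -F: fixed string, -f: take the pattern from the key file.
    if ! grep -qxF -f "$1" "$2"; then
        cat "$1" >> "$2"
    fi
}

# Example:
#   [ -f ~/.ssh/id_rsa ] || ssh-keygen -t rsa -N '' -f ~/.ssh/id_rsa
#   authorize_key ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys
#   ssh localhost true     # should now succeed without a password prompt
```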

After that, we try starting YARN again.

    [neil@neilhost hadoop-2.6.0]$ sbin/start-yarn.sh
    starting yarn daemons
    resourcemanager running as process 5115. Stop it first.
    neil@localhost's password:
    localhost: starting nodemanager, logging to /home/neil/Servers/hadoop-2.6.0/logs/yarn-neil-nodemanager-neilhost.neildomain.out
    [neil@neilhost hadoop-2.6.0]$ jps
    [neil@neilhost hadoop-2.6.0]$ jps
    10113 Jps
    7875 NameNode
    9974 NodeManager
    8936 ResourceManager
    8136 DataNode
    8430 SecondaryNameNode

The ResourceManager is now properly running. (I did not write this article in a single day, so the PIDs differ between sections.)

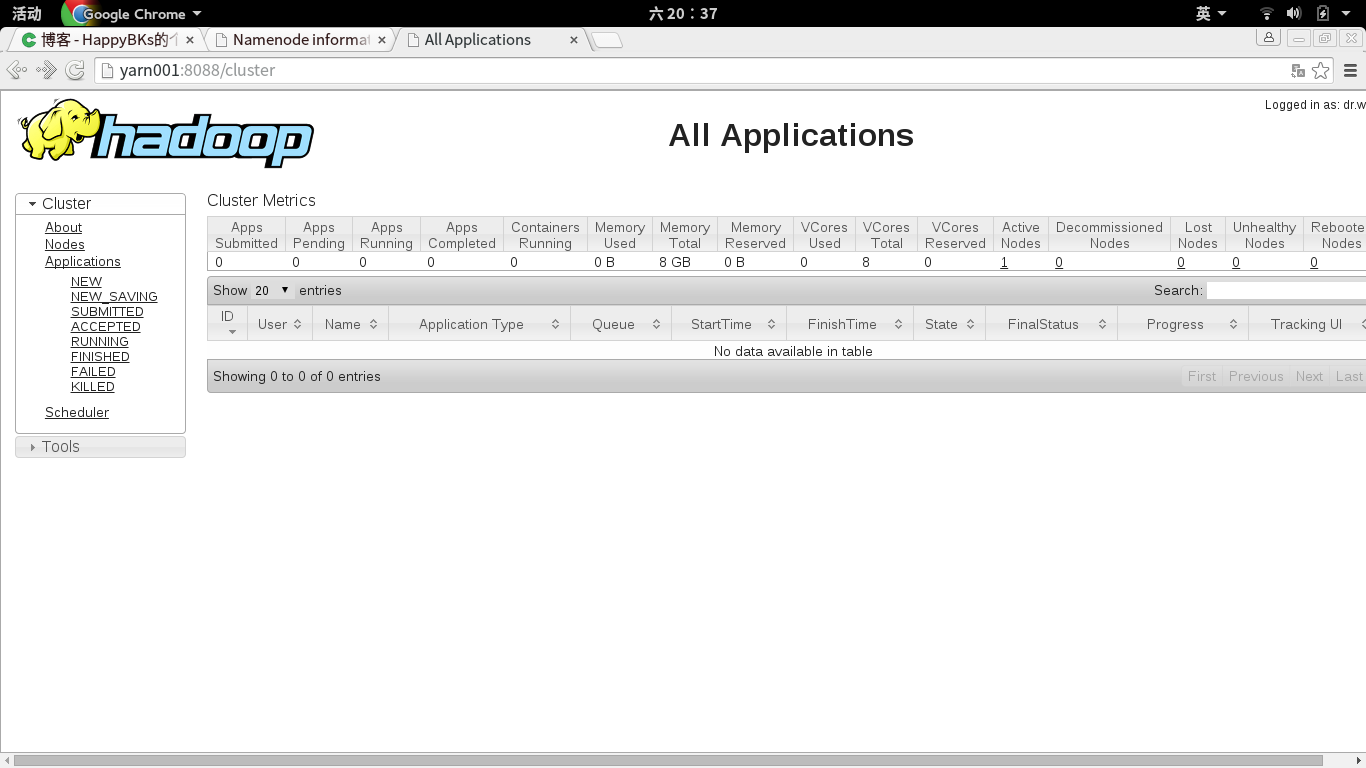

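Now open yarn001:8088 in a browser to reach the ResourceManager web UI. Right after startup, Active Nodes may show 0; wait a while and refresh, and it becomes 1.

Note: if it stays at 0, use jps to check whether the NodeManager is running. If it is not, try starting it with sbin/yarn-daemon.sh start nodemanager.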
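Instead of refreshing the page by hand, the wait can be scripted with a small retry loop. This is a generic sketch: `wait_for` is a hypothetical helper that reruns any command until it succeeds or the attempts run out, and the attempt count and delay in the example are arbitrary.

```shell
#!/bin/sh
# Sketch: retry a check a fixed number of times with a pause in between.

# usage: wait_for <attempts> <delay_seconds> <command> [args...]
wait_for() {
    attempts=$1
    delay=$2
    shift 2
    i=0
    while [ "$i" -lt "$attempts" ]; do
        "$@" && return 0       # stop as soon as the check passes
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}

# Example: wait up to about 30 seconds for the NodeManager JVM to appear.
#   wait_for 10 3 sh -c 'jps | grep -q NodeManager' \
#       || sbin/yarn-daemon.sh start nodemanager
```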
