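This article shows how to use Apache Flume to collect logs from a specified network port and deliver them to the console and to HDFS.

Requirement 1:

Collect logs from a specified network port and output them to the console and HDFS

Load-distribution algorithms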

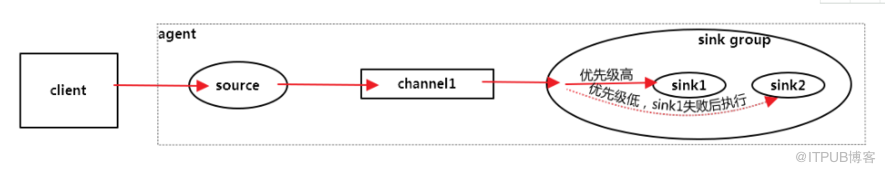

Failover: each sink can be assigned a priority; the larger the number, the higher the priority.

a1.sinkgroups.g1.processor.type = failover

    a1.sinkgroups = g1
    a1.sinkgroups.g1.sinks = k1 k2
    a1.sinkgroups.g1.processor.type = failover
    a1.sinkgroups.g1.processor.priority.k1 = 5
    a1.sinkgroups.g1.processor.priority.k2 = 10
    a1.sinkgroups.g1.processor.maxpenalty = 10000
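The priority rule can be illustrated with a small Python sketch. It is a conceptual model only, not Flume's implementation: the function name `pick_failover_sink` and the `failed` set are hypothetical, and the back-off behaviour controlled by `maxpenalty` is deliberately left out.

```python
# Conceptual model of priority-based failover; not Flume's actual code.
def pick_failover_sink(priorities, failed):
    """Return the live sink with the highest priority, or None if all have failed."""
    live = {name: prio for name, prio in priorities.items() if name not in failed}
    if not live:
        return None
    return max(live, key=live.get)


# Priorities taken from the failover configuration: k1 = 5, k2 = 10.
priorities = {"k1": 5, "k2": 10}

print(pick_failover_sink(priorities, failed=set()))          # k2
print(pick_failover_sink(priorities, failed={"k2"}))         # k1
print(pick_failover_sink(priorities, failed={"k1", "k2"}))   # None
```

With these values, k2 handles all events while it is healthy, and k1 only takes over when k2 fails.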

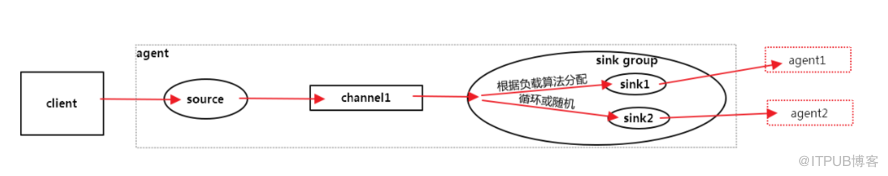

Load balancing: round-robin across all sinks

a1.sinkgroups.g1.processor.type = load_balance
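As a rough illustration of round-robin selection, the Python sketch below alternates between the two sinks of the group. It is a simplified model under the assumption that both sinks stay healthy; the batch names are made up for the example, and Flume's own selector logic is not reproduced here.

```python
from itertools import cycle

# Simplified model of round-robin sink selection; not Flume's actual code.
def assign_round_robin(batches, sinks):
    """Pair each batch with the next sink in a repeating cycle."""
    selector = cycle(sinks)
    return [(batch, next(selector)) for batch in batches]


# Hypothetical batch names, used only for illustration.
for batch, sink in assign_round_robin(["b1", "b2", "b3", "b4"], ["k1", "k2"]):
    print(batch, "->", sink)
```

One consequence worth keeping in mind: under load balancing each event is handed to one sink of the group, so an event ends up either on the console or in HDFS, not in both.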


    # Collect logs from a specified network port and output them to the console and HDFS
    a1.sources = r1
    a1.sinks = k1 k2
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = netcat
    a1.sources.r1.bind = 0.0.0.0
    a1.sources.r1.port = 44444

    # Describe the sink
    a1.sinkgroups = g1
    a1.sinkgroups.g1.sinks = k1 k2
    a1.sinkgroups.g1.processor.type = load_balance
    a1.sinks.k1.type = logger
    a1.sinks.k2.type = hdfs
    a1.sinks.k2.hdfs.path = hdfs://192.168.0.129:9000/user/hadoop/flume
    a1.sinks.k2.hdfs.batchSize = 10
    a1.sinks.k2.hdfs.fileType = DataStream
    a1.sinks.k2.hdfs.writeFormat = Text

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1
    a1.sinks.k2.channel = c1
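To exercise this configuration, something has to write text lines to port 44444. The article does not say which client was used, so the following Python helper is only one possible way to do it; the name `send_lines` and the default host are assumptions. It relies on the netcat source treating each newline-terminated line as one event.

```python
import socket

def send_lines(lines, host="localhost", port=44444):
    """Send each line, newline-terminated, over a single TCP connection.

    Returns the number of bytes sent.
    """
    payload = "".join(line + "\n" for line in lines).encode("utf-8")
    with socket.create_connection((host, port), timeout=5) as conn:
        conn.sendall(payload)
    return len(payload)


# Example call (requires the agent to be running and listening on the port):
# send_lines(["zourc ok", "asdf"])
```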

Check the logger output:

2018-08-10 18:58:39,659 (lifecycleSupervisor-1-3) [INFO - org.apache.flume.source.NetcatSource.start(NetcatSource.java:169)] Created serverSocket:sun.nio.ch.ServerSocketChannelImpl[/0:0:0:0:0:0:0:0:44444]

2018-08-10 18:59:17,723 (SinkRunner-PollingRunner-LoadBalancingSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:94)] Event: { headers:{} body: 7A 6F 75 72 63 20 6F 6B 0D zourc ok. }

2018-08-10 19:00:35,744 (SinkRunner-PollingRunner-LoadBalancingSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:94)] Event: { headers:{} body: 61 73 64 66 0D asdf. }

2018-08-10 19:00:35,774 (SinkRunner-PollingRunner-LoadBalancingSinkProcessor) [INFO - org.apache.flume.sink.hdfs.HDFSDataStream.configure(HDFSDataStream.java:58)] Serializer = TEXT, UseRawLocalFileSystem = false

2018-08-10 19:00:36,086 (SinkRunner-PollingRunner-LoadBalancingSinkProcessor) [INFO - org.apache.flume.sink.hdfs.BucketWriter.open(BucketWriter.java:234)] Creating hdfs://192.168.0.129:9000/user/hadoop/flume/FlumeData.1533942035775.tmp

Check the HDFS output:

    [hadoop@hadoop001 flume]$ hdfs dfs -text hdfs://192.168.0.129:9000/user/hadoop/flume/*
    18/08/10 19:14:23 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
    zourc1 2 3 4 5 6 7 8 9 10
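The logger events shown earlier print each body as a hex dump followed by a printable rendering. A short Python sketch can decode the hex part to confirm what was received; the helper name `decode_body` is hypothetical, and UTF-8 is assumed as the encoding.

```python
def decode_body(hex_dump):
    """Decode a space-separated hex dump, as printed by the logger sink, into text."""
    return bytes.fromhex(hex_dump).decode("utf-8")


# Hex bodies copied from the two logger events in the output above.
print(repr(decode_body("7A 6F 75 72 63 20 6F 6B 0D")))  # 'zourc ok\r'
print(repr(decode_body("61 73 64 66 0D")))              # 'asdf\r'
```

The trailing `0D` byte is a carriage return, which the logger renders as `.`; it was most likely sent by a client that uses CRLF line endings.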

That covers how to collect logs from a specified network port and output them to the console and HDFS.
