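For well-known reasons, Google's services cannot be accessed directly from mainland China. Binary packages are easy to download and flexible to customize, which makes them popular with Kubernetes users and one of the more common ways for enterprises to deploy production environments. Kubernetes v1.13.2 is the latest version at the time of writing. Installation and deployment can be complex and tedious, so the steps are scripted wherever possible. I have tested the scripts in this article myself.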

OS (minimal install):

cat /etc/centos-release

CentOS Linux release 7.6.1810 (Core)

Docker Engine:

docker version

Client:

Version: 18.06.0-ce

API version: 1.38

Go version: go1.10.3

Git commit: 0ffa825

Built: Wed Jul 18 19:08:18 2018

OS/Arch: linux/amd64

Experimental: false

Server:

Engine:

Version: 18.06.0-ce

API version: 1.38 (minimum version 1.12)

Go version: go1.10.3

Git commit: 0ffa825

Built: Wed Jul 18 19:10:42 2018

OS/Arch: linux/amd64

Experimental: false

Kubernetes:

kubectl version

Client Version: version.Info{Major:"1", Minor:"13", GitVersion:"v1.13.2", GitCommit:"cff46ab41ff0bb44d8584413b598ad8360ec1def", GitTreeState:"clean", BuildDate:"2019-01-10T23:35:51Z", GoVersion:"go1.11.4", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"13", GitVersion:"v1.13.2", GitCommit:"cff46ab41ff0bb44d8584413b598ad8360ec1def", GitTreeState:"clean", BuildDate:"2019-01-10T23:28:14Z", GoVersion:"go1.11.4", Compiler:"gc", Platform:"linux/amd64"}

ETCD:

etcd --version

etcd Version: 3.3.11

Git SHA: 2cf9e51d2

Go Version: go1.10.7

Go OS/Arch: linux/amd64

Flannel:

flanneld -version

v0.11.0

| IP | Hostname | Role | Components |
|---|---|---|---|
| 172.31.2.11 | gysl-master | Master&Node | kube-apiserver,kube-controller-manager,kube-scheduler,etcd,(kubectl),kubelet,kube-proxy,docker,flannel |
| 172.31.2.12 | gysl-node1 | Node | kubelet,kube-proxy,docker,flannel,etcd |
| 172.31.2.13 | gysl-node2 | Node | kubelet,kube-proxy,docker,flannel,etcd |

Note: kube-apiserver, kube-controller-manager and kube-scheduler are the components that must be installed on the Master node. etcd can be deployed on the Master or on other nodes, and kubectl is the command-line tool for managing Kubernetes. The remaining components are required on every Node.

Master節點:

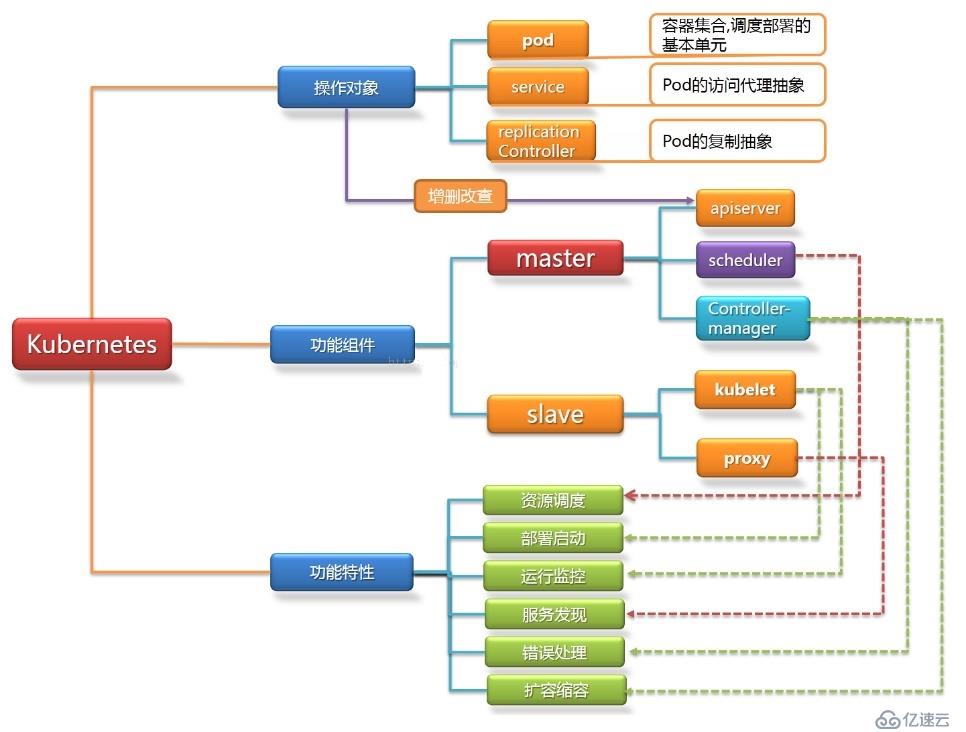

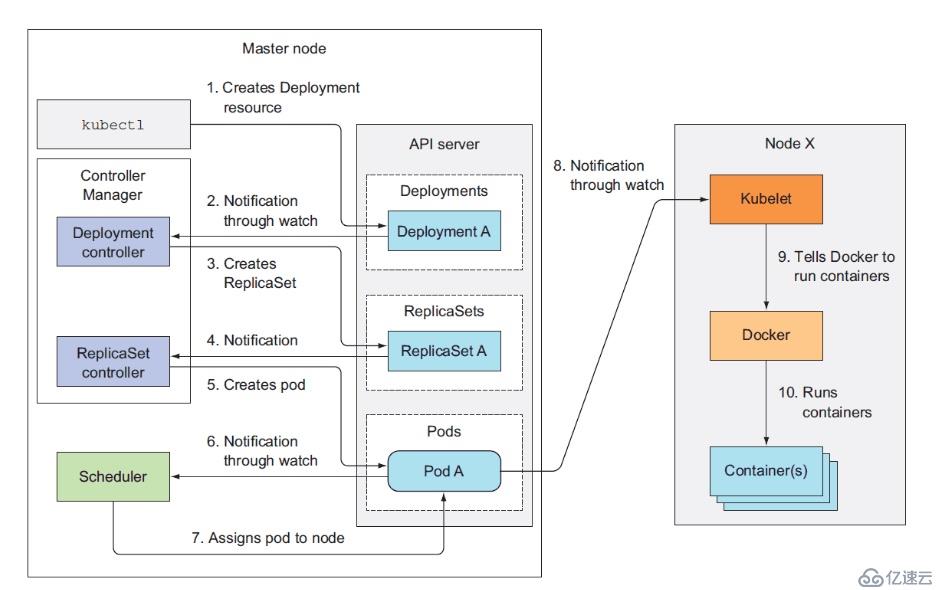

Master節點上面主要由四個模塊組成,apiserver,schedule,controller-manager,etcd。

apiserver: 負責對外提供RESTful的kubernetes API 的服務,它是系統管理指令的統一接口,任何對資源的增刪該查都要交給apiserver處理后再交給etcd。kubectl(kubernetes提供的客戶端工具,該工具內部是對kubernetes API的調用)是直接和apiserver交互的。

schedule: 負責調度Pod到合適的Node上,如果把scheduler看成一個黑匣子,那么它的輸入是pod和由多個Node組成的列表,輸出是Pod和一個Node的綁定。kubernetes目前提供了調度算法,同樣也保留了接口。用戶根據自己的需求定義自己的調度算法。

controller-manager: 如果apiserver做的是前臺的工作的話,那么controller-manager就是負責后臺的。每一個資源都對應一個控制器。而control manager就是負責管理這些控制器的,比如我們通過APIServer創建了一個Pod,當這個Pod創建成功后,apiserver的任務就算完成了。

etcd:etcd是一個高可用的鍵值存儲系統,kubernetes使用它來存儲各個資源的狀態,從而實現了Restful的API。

Node節點:

每個Node節點主要由二個模塊組成:kublet, kube-proxy。

kube-proxy: 該模塊實現了kubernetes中的服務發現和反向代理功能。kube-proxy支持TCP和UDP連接轉發,默認基Round Robin算法將客戶端流量轉發到與service對應的一組后端pod。服務發現方面,kube-proxy使用etcd的watch機制監控集群中service和endpoint對象數據的動態變化,并且維護一個service到endpoint的映射關系,從而保證了后端pod的IP變化不會對訪問者造成影響,另外,kube-proxy還支持session affinity。

kublet:kublet是Master在每個Node節點上面的agent,是Node節點上面最重要的模塊,它負責維護和管理該Node上的所有容器,但是如果容器不是通過kubernetes創建的,它并不會管理。本質上,它負責使Pod的運行狀態與期望的狀態一致。

Run the script KubernetesInstall-01.sh on every host. The Master node is used as the example here.

[root@gysl-master ~]# sh KubernetesInstall-01.sh

The script is as follows:

#!/bin/bash

# Initialize the machine. This needs to be executed on every machine.

# Add host domain name.

cat>>/etc/hosts<<EOF

172.31.2.11 gysl-master

172.31.2.12 gysl-node1

172.31.2.13 gysl-node2

EOF

# Modify related kernel parameters.

cat>/etc/sysctl.d/kubernetes.conf<<EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl -p /etc/sysctl.d/kubernetes.conf>&/dev/null

# Turn off and disable the firewalld.

systemctl stop firewalld

systemctl disable firewalld

# Disable the SELinux.

sed -i.bak 's/=enforcing/=disabled/' /etc/selinux/config

# Disable the swap .

sed -i.bak 's/^.*swap/#&/g' /etc/fstab

# Reboot the machine.

reboot

Run the script KubernetesInstall-02.sh on every host, again using the Master node as the example.

[root@gysl-master ~]# sh KubernetesInstall-02.sh

The script is as follows:

#!/bin/bash

# Install the Docker engine. This needs to be executed on every machine.

curl http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -o /etc/yum.repos.d/docker-ce.repo>&/dev/null

if [ $? -eq 0 ] ;

then

# -y keeps yum from waiting for a confirmation that is hidden by the redirect.
yum -y remove docker \
docker-client \
docker-client-latest \
docker-common \
docker-latest \
docker-latest-logrotate \
docker-logrotate \
docker-selinux \
docker-engine-selinux \
docker-engine>&/dev/null

yum list docker-ce --showduplicates|grep "^doc"|sort -r

yum -y install docker-ce-18.06.0.ce-3.el7

rm -f /etc/yum.repos.d/docker-ce.repo

systemctl enable docker && systemctl start docker && systemctl status docker

else

echo "Install failed! Please try again! ";

exit 110

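fi

Note: the steps above must be performed on every node. If swap is enabled it must be disabled (KubernetesInstall-01.sh already handles this); the free command shows the current state. Also make sure the clocks on all nodes are synchronized.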
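A small pre-flight check can confirm that no swap entry is still active in the fstab before moving on. The sketch below is an illustration, not part of the original scripts: the function name `active_swap_entries` is made up for this example, and it only inspects the file it is given (defaulting to /etc/fstab).

```shell
#!/bin/bash
# Hypothetical helper (not part of the original scripts): print the device
# of every fstab entry that still mounts swap, i.e. uncommented lines whose
# third field is "swap". Defaults to /etc/fstab.
active_swap_entries() {
    awk '$1 !~ /^#/ && $3 == "swap" { print $1 }' "${1:-/etc/fstab}"
}

# Example: warn if the swap line has not been commented out yet.
# [ -z "$(active_swap_entries)" ] || echo "swap is still enabled in /etc/fstab" >&2
```

An empty result only says that swap will not come back after a reboot; the free command remains the way to see whether swap is in use right now.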

Run the script KubernetesInstall-03.sh on the Master to download the packages.

[root@gysl-master ~]# sh KubernetesInstall-03.sh

The script is as follows:

#!/bin/bash

# Download relevant softwares. Please verify sha512 yourself.

while true;

do

echo "Downloading, please wait a moment." && \
curl -L -C - -O https://dl.k8s.io/v1.13.2/kubernetes-server-linux-amd64.tar.gz && \
curl -L -C - -O https://github.com/etcd-io/etcd/releases/download/v3.3.11/etcd-v3.3.11-linux-amd64.tar.gz && \
curl -L -C - -O https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 && \
curl -L -C - -O https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 && \
curl -L -C - -O https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 && \
curl -L -C - -O https://github.com/coreos/flannel/releases/download/v0.11.0/flannel-v0.11.0-linux-amd64.tar.gz

if [ $? -eq 0 ];

then

echo "Congratulations! All software packages have been downloaded."

break

fi

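done

kubernetes-server-linux-amd64.tar.gz contains the main Kubernetes components, so no other Kubernetes package needs to be downloaded. The etcd tarball is the package needed to deploy etcd, the flannel tarball provides flanneld, and the remaining files are the cfssl tools, which are not examined further here. Because of network conditions the downloads are scripted with resume and retry, and the process may take a while.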
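The comment in the download script asks the reader to verify the sha512 digests. One way to do that is sketched below, assuming `sha512sum` from coreutils is available; the helper name `verify_sha512` is hypothetical, and the expected digest has to come from the publisher because none is given in this article.

```shell
#!/bin/bash
# Hypothetical helper: succeed only if the SHA-512 digest of file $1
# equals the expected hex digest $2.
verify_sha512() {
    local actual
    actual=$(sha512sum "$1" | awk '{ print $1 }')
    [ "$actual" = "$2" ]
}

# Example (the digest is a placeholder to be filled in from the publisher):
# verify_sha512 kubernetes-server-linux-amd64.tar.gz "<expected sha512>" || exit 1
```

Checking the digest is especially useful here because the script resumes interrupted downloads with `-C -`, and a resumed file that does not match should simply be deleted and fetched again.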

Run the script KubernetesInstall-04.sh on the Master.

[root@gysl-master ~]# sh KubernetesInstall-04.sh

2019/01/28 16:29:47 [INFO] generating a new CA key and certificate from CSR

2019/01/28 16:29:47 [INFO] generate received request

2019/01/28 16:29:47 [INFO] received CSR

2019/01/28 16:29:47 [INFO] generating key: rsa-2048

2019/01/28 16:29:47 [INFO] encoded CSR

2019/01/28 16:29:47 [INFO] signed certificate with serial number 368034386524991671795323408390048460617296625670

2019/01/28 16:29:47 [INFO] generate received request

2019/01/28 16:29:47 [INFO] received CSR

2019/01/28 16:29:47 [INFO] generating key: rsa-2048

2019/01/28 16:29:48 [INFO] encoded CSR

2019/01/28 16:29:48 [INFO] signed certificate with serial number 714486490152688826461700674622674548864494534798

2019/01/28 16:29:48 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

/etc/etcd/ssl/ca-key.pem /etc/etcd/ssl/ca.pem /etc/etcd/ssl/server-key.pem /etc/etcd/ssl/server.pem

The script is as follows:

#!/bin/bash

mv cfssl* /usr/local/bin/

chmod +x /usr/local/bin/cfssl*

ETCD_SSL=/etc/etcd/ssl

mkdir -p $ETCD_SSL

# Create some CA certificates for etcd cluster.

cat<<EOF>$ETCD_SSL/ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat<<EOF>$ETCD_SSL/ca-csr.json

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

cat<<EOF>$ETCD_SSL/server-csr.json

{

"CN": "etcd",

"hosts": [

"172.31.2.11",

"172.31.2.12",

"172.31.2.13"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

cd $ETCD_SSL

cfssl_linux-amd64 gencert -initca ca-csr.json | cfssljson_linux-amd64 -bare ca -

cfssl_linux-amd64 gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson_linux-amd64 -bare server

cd ~

# ca-key.pem ca.pem server-key.pem server.pem

ls $ETCD_SSL/*.pem

Run the script KubernetesInstall-05.sh on the Master.

[root@gysl-master ~]# sh KubernetesInstall-05.sh

The script is as follows:

#!/bin/bash

# Deploy and configure the etcd service on the master node.

ETCD_CONF=/etc/etcd/etcd.conf

ETCD_SSL=/etc/etcd/ssl

ETCD_SERVICE=/usr/lib/systemd/system/etcd.service

tar -xzf etcd-v3.3.11-linux-amd64.tar.gz

cp -p etcd-v3.3.11-linux-amd64/etc* /usr/local/bin/

# The etcd configuration file.

cat>$ETCD_CONF<<EOF

#[Member]

ETCD_NAME="etcd-01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://172.31.2.11:2380"

ETCD_LISTEN_CLIENT_URLS="https://172.31.2.11:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://172.31.2.11:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://172.31.2.11:2379"

ETCD_INITIAL_CLUSTER="etcd-01=https://172.31.2.11:2380,etcd-02=https://172.31.2.12:2380,etcd-03=https://172.31.2.13:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

# The etcd service configuration file.

cat>$ETCD_SERVICE<<EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=$ETCD_CONF

ExecStart=/usr/local/bin/etcd \

--name=\${ETCD_NAME} \

--data-dir=\${ETCD_DATA_DIR} \

--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=\${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=/etc/etcd/ssl/server.pem \

--key-file=/etc/etcd/ssl/server-key.pem \

--peer-cert-file=/etc/etcd/ssl/server.pem \

--peer-key-file=/etc/etcd/ssl/server-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable etcd.service --now

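systemctl status etcd

Until the other members are started, etcd on the Master cannot form a quorum, so the start command may block and report a timeout at this point. This is expected to clear once the remaining members are up.

Run the script KubernetesInstall-06.sh on Node1.

[root@gysl-node1 ~]# sh KubernetesInstall-06.sh

The script is as follows: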

#!/bin/bash

# Deploy etcd on the node1.

ETCD_SSL=/etc/etcd/ssl

mkdir -p $ETCD_SSL

scp gysl-master:~/etcd-v3.3.11-linux-amd64.tar.gz .

scp gysl-master:$ETCD_SSL/{ca*pem,server*pem} $ETCD_SSL/

scp gysl-master:/etc/etcd/etcd.conf /etc/etcd/

scp gysl-master:/usr/lib/systemd/system/etcd.service /usr/lib/systemd/system/

tar -xvzf etcd-v3.3.11-linux-amd64.tar.gz

mv ~/etcd-v3.3.11-linux-amd64/etcd* /usr/local/bin/

sed -i '/ETCD_NAME/{s/etcd-01/etcd-02/g}' /etc/etcd/etcd.conf

sed -i '/ETCD_LISTEN_PEER_URLS/{s/2.11/2.12/g}' /etc/etcd/etcd.conf

sed -i '/ETCD_LISTEN_CLIENT_URLS/{s/2.11/2.12/g}' /etc/etcd/etcd.conf

sed -i '/ETCD_INITIAL_ADVERTISE_PEER_URLS/{s/2.11/2.12/g}' /etc/etcd/etcd.conf

sed -i '/ETCD_ADVERTISE_CLIENT_URLS/{s/2.11/2.12/g}' /etc/etcd/etcd.conf

rm -rf ~/etcd-v3.3.11-linux-amd64*

systemctl daemon-reload

systemctl enable etcd.service --now

systemctl status etcd

Run the script KubernetesInstall-07.sh on Node2.

[root@gysl-node2 ~]# sh KubernetesInstall-07.sh

The script is as follows:

#!/bin/bash

# Deploy etcd on the node2.

ETCD_SSL=/etc/etcd/ssl

mkdir -p $ETCD_SSL

scp gysl-master:~/etcd-v3.3.11-linux-amd64.tar.gz .

scp gysl-master:$ETCD_SSL/{ca*pem,server*pem} $ETCD_SSL/

scp gysl-master:/etc/etcd/etcd.conf /etc/etcd/

scp gysl-master:/usr/lib/systemd/system/etcd.service /usr/lib/systemd/system/

tar -xvzf etcd-v3.3.11-linux-amd64.tar.gz

mv ~/etcd-v3.3.11-linux-amd64/etcd* /usr/local/bin/

sed -i '/ETCD_NAME/{s/etcd-01/etcd-03/g}' /etc/etcd/etcd.conf

sed -i '/ETCD_LISTEN_PEER_URLS/{s/2.11/2.13/g}' /etc/etcd/etcd.conf

sed -i '/ETCD_LISTEN_CLIENT_URLS/{s/2.11/2.13/g}' /etc/etcd/etcd.conf

sed -i '/ETCD_INITIAL_ADVERTISE_PEER_URLS/{s/2.11/2.13/g}' /etc/etcd/etcd.conf

sed -i '/ETCD_ADVERTISE_CLIENT_URLS/{s/2.11/2.13/g}' /etc/etcd/etcd.conf

rm -rf ~/etcd-v3.3.11-linux-amd64*

systemctl daemon-reload

systemctl enable etcd.service --now

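systemctl status etcd

The installation is much the same on every node. The only difference is that the server IP in the etcd configuration file must be the IP of the node it is running on.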
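Scripts 06 and 07 differ only in the member name and the node IP, so the edit of the copied etcd.conf could be driven by two parameters instead of a block of hand-edited sed lines. The following is a minimal sketch under that assumption; `localize_etcd_conf` is a hypothetical name, and the function relies on GNU sed's `-i` as the original scripts do.

```shell
#!/bin/bash
# Hypothetical helper: adapt an etcd.conf copied from the master.
# $1 = config file, $2 = member name (e.g. etcd-02), $3 = this node's IP.
# ETCD_INITIAL_CLUSTER is left untouched because it lists every member.
localize_etcd_conf() {
    local conf=$1 name=$2 ip=$3 key
    sed -i "s|^ETCD_NAME=.*|ETCD_NAME=\"$name\"|" "$conf"
    for key in ETCD_LISTEN_PEER_URLS ETCD_LISTEN_CLIENT_URLS \
               ETCD_INITIAL_ADVERTISE_PEER_URLS ETCD_ADVERTISE_CLIENT_URLS; do
        # Replace only the host part of the URL on the matching line.
        sed -i "/^$key=/s|//[0-9.]*:|//$ip:|" "$conf"
    done
}

# Example for Node1:
# localize_etcd_conf /etc/etcd/etcd.conf etcd-02 172.31.2.12
```

Anchoring each substitution to an exact key name avoids touching ETCD_INITIAL_CLUSTER, which the original per-key sed lines also take care to leave alone.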

Run the following command to check the cluster:

[root@gysl-master ~]# etcdctl \

--ca-file=/etc/etcd/ssl/ca.pem \

--cert-file=/etc/etcd/ssl/server.pem \

--key-file=/etc/etcd/ssl/server-key.pem \

--endpoints="https://172.31.2.11:2379,https://172.31.2.12:2379,https://172.31.2.13:2379" cluster-health

member 82184ce461853bed is healthy: got healthy result from https://172.31.2.12:2379

member d85d48cef1ccfeaf is healthy: got healthy result from https://172.31.2.13:2379

member fe6e7c664377ad3b is healthy: got healthy result from https://172.31.2.11:2379

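cluster is healthy

"cluster is healthy" means the etcd cluster was deployed successfully. If something is wrong, check the logs first (/var/log/messages or journalctl -u etcd), find the problems and fix them one by one. The prompt makes the command above awkward to copy, so the following version can be pasted directly to run the check: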

etcdctl \

--ca-file=/etc/etcd/ssl/ca.pem \

--cert-file=/etc/etcd/ssl/server.pem \

--key-file=/etc/etcd/ssl/server-key.pem \

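--endpoints="https://172.31.2.11:2379,https://172.31.2.12:2379,https://172.31.2.13:2379" cluster-health

Flannel stores its subnet information in etcd, so it must be able to connect to etcd and write the predefined network range. The Pod network ${CLUSTER_CIDR} written there must be a /16 range and must match the value of the --cluster-cidr parameter of kube-controller-manager. In general this has to be configured on every Node by running the script KubernetesInstall-08.sh.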

[root@gysl-master ~]# sh KubernetesInstall-08.sh

The script is as follows:

#!/bin/bash

KUBE_CONF=/etc/kubernetes

FLANNEL_CONF=$KUBE_CONF/flannel.conf

mkdir $KUBE_CONF

tar -xvzf flannel-v0.11.0-linux-amd64.tar.gz

mv {flanneld,mk-docker-opts.sh} /usr/local/bin/

# Check whether etcd cluster is healthy.

etcdctl \

--ca-file=/etc/etcd/ssl/ca.pem \

--cert-file=/etc/etcd/ssl/server.pem \

--key-file=/etc/etcd/ssl/server-key.pem \

--endpoints="https://172.31.2.11:2379,\

https://172.31.2.12:2379,\

https://172.31.2.13:2379" cluster-health

# Writing into a predetermined subnetwork.

cd /etc/etcd/ssl

etcdctl \

--ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \

--endpoints="https://172.31.2.11:2379,https://172.31.2.12:2379,https://172.31.2.13:2379" \

set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

cd ~

# Configure the flannel service.

cat>$FLANNEL_CONF<<EOF

FLANNEL_OPTIONS="--etcd-endpoints=https://172.31.2.11:2379,https://172.31.2.12:2379,https://172.31.2.13:2379 -etcd-cafile=/etc/etcd/ssl/ca.pem -etcd-certfile=/etc/etcd/ssl/server.pem -etcd-keyfile=/etc/etcd/ssl/server-key.pem"

EOF

cat>/usr/lib/systemd/system/flanneld.service<<EOF

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=$FLANNEL_CONF

ExecStart=/usr/local/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/usr/local/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

# Modify the docker service.

sed -i.bak -e '/ExecStart/i EnvironmentFile=\/run\/flannel\/subnet.env' -e 's/ExecStart=\/usr\/bin\/dockerd/ExecStart=\/usr\/bin\/dockerd $DOCKER_NETWORK_OPTIONS/g' /usr/lib/systemd/system/docker.service

# Start or restart related services.

systemctl daemon-reload

systemctl enable flanneld --now

systemctl restart docker

systemctl status flanneld

systemctl status docker

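ip address show

Before running the script, copy the Flannel package to the user's HOME directory. After the script finishes, check the status of each service and make sure docker0 and flannel.1 are in the same network range.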
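The "same network range" check can be made explicit by testing whether an address falls inside a CIDR block, for instance the docker0 address against the subnet flannel leased to the node (flannel records it as FLANNEL_SUBNET in /run/flannel/subnet.env). The sketch below is only an illustration; `ip_to_int` and `ip_in_cidr` are hypothetical helper names and work on plain strings, so the addresses still have to be read from `ip address show` or the env file by hand.

```shell
#!/bin/bash
# Hypothetical helper: convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
    local a b c d
    a=${1%%.*}; d=${1##*.}
    b=${1#*.}; b=${b%%.*}
    c=${1%.*}; c=${c##*.}
    echo $(( (a << 24) + (b << 16) + (c << 8) + d ))
}

# Hypothetical helper: succeed if address $1 lies inside CIDR block $2.
ip_in_cidr() {
    local ip net prefix mask
    ip=$(ip_to_int "$1")
    net=$(ip_to_int "${2%/*}")
    prefix=${2#*/}
    # Build the netmask as a 32-bit value (4294967295 is 0xFFFFFFFF).
    mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
    [ $(( ip & mask )) -eq $(( net & mask )) ]
}

# Example with made-up addresses:
# ip_in_cidr 172.17.89.1 172.17.89.0/24 && echo "docker0 is inside the flannel subnet"
```

The same function also covers the coarser check that a node's subnet sits inside the 172.17.0.0/16 network written to etcd by the script.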

This step creates the CA and the certificates for kube-apiserver and kube-proxy. Run the script KubernetesInstall-09.sh on the Master node.

[root@gysl-master ~]# sh KubernetesInstall-09.sh

The script is as follows:

#!/bin/bash

# Deploy the master node.

KUBE_SSL=/etc/kubernetes/ssl

mkdir $KUBE_SSL

# Create CA.

cat>$KUBE_SSL/ca-config.json<<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat>$KUBE_SSL/ca-csr.json<<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cat>$KUBE_SSL/server-csr.json<<EOF

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"172.31.2.11",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cd $KUBE_SSL

cfssl_linux-amd64 gencert -initca ca-csr.json | cfssljson_linux-amd64 -bare ca -

cfssl_linux-amd64 gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson_linux-amd64 -bare server

# Create kube-proxy CA.

cat>$KUBE_SSL/kube-proxy-csr.json<<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl_linux-amd64 gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson_linux-amd64 -bare kube-proxy

ls *.pem

cd ~

After the script finishes, the following files should be present:

/etc/kubernetes/ssl/ca-key.pem /etc/kubernetes/ssl/kube-proxy-key.pem /etc/kubernetes/ssl/server-key.pem

/etc/kubernetes/ssl/ca.pem /etc/kubernetes/ssl/kube-proxy.pem /etc/kubernetes/ssl/server.pem
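Rather than comparing the listing by eye, the presence of the six files could be checked in a loop. This is a minimal sketch; `missing_files` is a hypothetical helper that prints the names it cannot find in a directory and prints nothing when everything is there.

```shell
#!/bin/bash
# Hypothetical helper: print each file name that is missing from
# directory $1. The remaining arguments are the expected file names.
missing_files() {
    local dir=$1 f
    shift
    for f in "$@"; do
        [ -f "$dir/$f" ] || echo "$f"
    done
}

# Example for the certificates created above:
# missing_files /etc/kubernetes/ssl ca.pem ca-key.pem server.pem \
#     server-key.pem kube-proxy.pem kube-proxy-key.pem
```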

Unpack the downloaded package, move the binaries into place and write the configuration by running the script KubernetesInstall-10.sh.

[root@gysl-master ~]# sh KubernetesInstall-10.sh

The script is as follows:

#!/bin/bash

KUBE_ETC=/etc/kubernetes

KUBE_API_CONF=/etc/kubernetes/apiserver.conf

tar -xvzf kubernetes-server-linux-amd64.tar.gz

mv kubernetes/server/bin/{kube-apiserver,kube-scheduler,kube-controller-manager} /usr/local/bin/

# Create a token file.

cat>$KUBE_ETC/token.csv<<EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

# Create a kube-apiserver configuration file.

cat >$KUBE_API_CONF<<EOF

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--etcd-servers=https://172.31.2.11:2379,https://172.31.2.12:2379,https://172.31.2.13:2379 \

--bind-address=172.31.2.11 \

--secure-port=6443 \

--advertise-address=172.31.2.11 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth \

--token-auth-file=$KUBE_ETC/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=$KUBE_ETC/ssl/server.pem \

--tls-private-key-file=$KUBE_ETC/ssl/server-key.pem \

--client-ca-file=$KUBE_ETC/ssl/ca.pem \

--service-account-key-file=$KUBE_ETC/ssl/ca-key.pem \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/server.pem \

--etcd-keyfile=/etc/etcd/ssl/server-key.pem"

EOF

# Create the kube-apiserver service.

cat>/usr/lib/systemd/system/kube-apiserver.service<<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-$KUBE_API_CONF

ExecStart=/usr/local/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-apiserver.service --now

systemctl status kube-apiserver.service

The kube-scheduler binary was already moved into place earlier, so run the script KubernetesInstall-11.sh directly.

[root@gysl-master ~]# sh KubernetesInstall-11.sh

The script is as follows:

#!/bin/bash

# Deploy the scheduler service.

KUBE_ETC=/etc/kubernetes

KUBE_SCHEDULER_CONF=$KUBE_ETC/kube-scheduler.conf

cat>$KUBE_SCHEDULER_CONF<<EOF

KUBE_SCHEDULER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect"

EOF

cat>/usr/lib/systemd/system/kube-scheduler.service<<EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-$KUBE_SCHEDULER_CONF

ExecStart=/usr/local/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-scheduler.service --now

sleep 20

systemctl status kube-scheduler.service

The kube-controller-manager binary was already moved into place earlier, so run the script KubernetesInstall-12.sh directly.

[root@gysl-master ~]# sh KubernetesInstall-12.sh

The script is as follows:

#!/bin/bash

# Deploy the controller-manager service.

KUBE_CONTROLLER_CONF=/etc/kubernetes/kube-controller-manager.conf

cat>$KUBE_CONTROLLER_CONF<<EOF

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect=true \

--address=127.0.0.1 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--root-ca-file=/etc/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem"

EOF

cat>/usr/lib/systemd/system/kube-controller-manager.service<<EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-$KUBE_CONTROLLER_CONF

ExecStart=/usr/local/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-controller-manager.service --now

sleep 20

systemctl status kube-controller-manager.service

Run the script KubernetesInstall-13.sh directly.

[root@gysl-master ~]# sh KubernetesInstall-13.sh

The script is as follows:

#!/bin/bash

# Check the service.

mv kubernetes/server/bin/kubectl /usr/local/bin/

kubectl get cs

If the deployment succeeded, the result looks like this:

[root@gysl-master ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

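etcd-1 Healthy {"health":"true"}

Once TLS authentication is enabled on the Master's apiserver, the kubelet on each Node must use a valid certificate signed by the CA to communicate with the apiserver before it can join the cluster. Signing certificates by hand becomes tedious when there are many Nodes, which is why the TLS Bootstrapping mechanism exists: the kubelet, acting as a low-privilege user, automatically requests a certificate from the apiserver, and the kubelet's certificate is issued dynamically through the apiserver. The token file created earlier comes into play here. Run the script KubernetesInstall-14.sh on the Master node to create bootstrap.kubeconfig and kube-proxy.kubeconfig.

[root@gysl-master ~]# sh KubernetesInstall-14.sh

The script is as follows: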

#!/bin/bash

BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

KUBE_SSL=/etc/kubernetes/ssl/

KUBE_APISERVER="https://172.31.2.11:6443"

cd $KUBE_SSL

# Set cluster parameters.

kubectl config set-cluster kubernetes \

--certificate-authority=./ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# Set client parameters.

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=bootstrap.kubeconfig

# Set context parameters.

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Set context.

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

# Create kube-proxy kubeconfig file.

kubectl config set-cluster kubernetes \

--certificate-authority=./ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=./kube-proxy.pem \

--client-key=./kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

cd ~

# Bind kubelet-bootstrap user to system cluster roles.

kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

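--user=kubelet-bootstrap

Because kubernetes-server-linux-amd64.tar.gz has already been unpacked in the HOME directory on the Master node, the script KubernetesInstall-15.sh can now be run on each Node.

[root@gysl-node1 ~]# sh KubernetesInstall-15.sh

The script is as follows: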

#!/bin/bash

KUBE_CONF=/etc/kubernetes

KUBE_SSL=$KUBE_CONF/ssl

# Set this to the IP of the node the script runs on (gysl-node1 here).
IP=172.31.2.12

mkdir $KUBE_SSL

scp gysl-master:~/kubernetes/server/bin/{kube-proxy,kubelet} /usr/local/bin/

scp gysl-master:$KUBE_CONF/ssl/{bootstrap.kubeconfig,kube-proxy.kubeconfig} $KUBE_CONF

cat>$KUBE_CONF/kube-proxy.conf<<EOF

KUBE_PROXY_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=$IP \

--cluster-cidr=10.0.0.0/24 \

--kubeconfig=$KUBE_CONF/kube-proxy.kubeconfig"

EOF

cat>/usr/lib/systemd/system/kube-proxy.service<<EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-$KUBE_CONF/kube-proxy.conf

ExecStart=/usr/local/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-proxy.service --now

sleep 20

systemctl status kube-proxy.service -l

cat>$KUBE_CONF/kubelet.yaml<<EOF

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: $IP

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["10.0.0.2"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:

anonymous:

enabled: true

EOF

cat>$KUBE_CONF/kubelet.conf<<EOF

KUBELET_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=$IP \

--kubeconfig=$KUBE_CONF/kubelet.kubeconfig \

--bootstrap-kubeconfig=$KUBE_CONF/bootstrap.kubeconfig \

--config=$KUBE_CONF/kubelet.yaml \

--cert-dir=$KUBE_SSL \

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

EOF

cat>/usr/lib/systemd/system/kubelet.service<<EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=$KUBE_CONF/kubelet.conf

ExecStart=/usr/local/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kubelet.service --now

sleep 20

systemctl status kubelet.service -l

Run the script above once on each Node. Only the value of IP needs to be changed for each run.
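Since only the IP changes between runs, the script could take it as an argument and reject obvious typos before any file is written. The sketch below assumes that approach; `is_ipv4` is a hypothetical helper, and passing the IP as `$1` is a suggested variation, not how the original script works.

```shell
#!/bin/bash
# Hypothetical helper: succeed only if $1 is a dotted-quad IPv4 address
# with every octet in the range 0-255.
is_ipv4() {
    local octet='(25[0-5]|2[0-4][0-9]|1[0-9][0-9]|[1-9]?[0-9])'
    printf '%s\n' "$1" | grep -Eq "^($octet\.){3}$octet\$"
}

# Possible use at the top of KubernetesInstall-15.sh (assumed variation):
# IP=${1:?usage: sh KubernetesInstall-15.sh <node-ip>}
# is_ipv4 "$IP" || { echo "Not an IPv4 address: $IP" >&2; exit 1; }
```

With that change the same file can be copied to every Node unchanged and invoked as, for example, `sh KubernetesInstall-15.sh 172.31.2.13`.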

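CSR requests can be approved manually or automatically. The automatic approach is recommended, because since v1.8 the certificates generated after a CSR is approved can be rotated automatically. Before approval the requests look like this: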

[root@gysl-master ~]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-FpTP2sCI0SiYDCxaIHa1SRukS_5u9BQN10BsTd6RU1Y 20m kubelet-bootstrap Pending

node-csr-YYfnPwAws2LxJzV-OgYjJ22zy_z9XQM8PT0MnqZN910 24m kubelet-bootstrap Pending

Run the script KubernetesInstall-16.sh on the Master node.

[root@gysl-master ~]# sh KubernetesInstall-16.sh

certificatesigningrequest.certificates.k8s.io/node-csr-FpTP2sCI0SiYDCxaIHa1SRukS_5u9BQN10BsTd6RU1Y approved

certificatesigningrequest.certificates.k8s.io/node-csr-YYfnPwAws2LxJzV-OgYjJ22zy_z9XQM8PT0MnqZN910 approved

The script is as follows:

#!/bin/bash

CSRS=$(kubectl get csr | awk '{if(NR>1) print $1}')

for csr in $CSRS;

do

kubectl certificate approve $csr;

done

[root@gysl-master ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

[root@gysl-master ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

172.31.2.12 Ready <none> 11m v1.13.2

172.31.2.13 Ready <none> 11m v1.13.2

[root@gysl-master ~]# kubectl run nginx --image=nginx --replicas=3

kubectl run --generator=deployment/apps.v1 is DEPRECATED and will be removed in a future version. Use kubectl run --generator=run-pod/v1 or kubectl create instead.

deployment.apps/nginx created

[root@gysl-master ~]# kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

service/nginx exposed

[root@gysl-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-7cdbd8cdc9-7h946 0/1 ContainerCreating 0 33s

nginx-7cdbd8cdc9-vtkqf 0/1 ContainerCreating 0 33s

nginx-7cdbd8cdc9-wdjtj 0/1 ContainerCreating 0 33s

[root@gysl-master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.0.0.1 <none> 443/TCP 8h

nginx NodePort 10.0.0.2 <none> 88:46705/TCP 28s

[root@gysl-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-7cdbd8cdc9-7h946 1/1 Running 0 2m4s

nginx-7cdbd8cdc9-vtkqf 1/1 Running 0 2m4s

nginx-7cdbd8cdc9-wdjtj 1/1 Running 0 2m4s

[root@gysl-node1 ~]# curl http://10.0.0.2:88

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

}

</style>

</head>

<body>

<h2>Welcome to nginx!</h2>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a >nginx.org</a>.<br/>

Commercial support is available at

<a >nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

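</html>

If you now open <http://10.0.0.2:88> in a browser on a machine that can reach the cluster network, the default nginx page appears. 10.0.0.2 is a cluster IP, so from outside the cluster use a node address together with the NodePort shown above instead, for example http://172.31.2.12:46705.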
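The PORT(S) column printed by kubectl get svc has the form port:nodePort/protocol for a NodePort service, so the externally reachable port can be cut out of it with plain parameter expansion. The helper below is a hypothetical sketch that works on that string only; it does not call kubectl itself.

```shell
#!/bin/bash
# Hypothetical helper: print the node port from a PORT(S) value such as
# "88:46705/TCP". Fails for values without a node port, e.g. "443/TCP".
node_port() {
    case $1 in
        *:*/*) ;;
        *) return 1 ;;
    esac
    local spec=${1#*:}      # drop the "port:" prefix
    echo "${spec%%/*}"      # drop the "/protocol" suffix
}

# Example with the value from the output above:
# curl "http://172.31.2.12:$(node_port 88:46705/TCP)"
```

kubectl can also print the value directly through its jsonpath output format, which avoids parsing the table at all.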

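When resources are plentiful, the Master node serves only as the API endpoint, scheduler and controller, and the components that must be deployed on it are kube-apiserver, kube-controller-manager, kube-scheduler, kubectl and etcd. Beyond that, HA and related deployments are usually needed as well. If the Master node itself has resources to spare, or an experiment requires at least three running nodes, the Master can also be set up to act as an ordinary Node. To do so, run the script KubernetesInstall-17.sh directly.

[root@gysl-master ~]# sh KubernetesInstall-17.sh

The script is as follows: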

#!/bin/bash

KUBE_CONF=/etc/kubernetes

KUBE_SSL=$KUBE_CONF/ssl

IP=172.31.2.11

# The directory already exists on the Master, so -p avoids an error.
mkdir -p $KUBE_SSL

cp ~/kubernetes/server/bin/{kube-proxy,kubelet} /usr/local/bin/

cp $KUBE_CONF/ssl/{bootstrap.kubeconfig,kube-proxy.kubeconfig} $KUBE_CONF

cat>$KUBE_CONF/kube-proxy.conf<<EOF

KUBE_PROXY_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=$IP \

--cluster-cidr=10.0.0.0/24 \

--kubeconfig=$KUBE_CONF/kube-proxy.kubeconfig"

EOF

cat>/usr/lib/systemd/system/kube-proxy.service<<EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-$KUBE_CONF/kube-proxy.conf

ExecStart=/usr/local/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-proxy.service --now

sleep 20

systemctl status kube-proxy.service -l

cat>$KUBE_CONF/kubelet.yaml<<EOF

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: $IP

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["10.0.0.2"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:

anonymous:

enabled: true

EOF

cat>$KUBE_CONF/kubelet.conf<<EOF

KUBELET_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=$IP \

--kubeconfig=$KUBE_CONF/kubelet.kubeconfig \

--bootstrap-kubeconfig=$KUBE_CONF/bootstrap.kubeconfig \

--config=$KUBE_CONF/kubelet.yaml \

--cert-dir=$KUBE_SSL \

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

EOF

cat>/usr/lib/systemd/system/kubelet.service<<EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=$KUBE_CONF/kubelet.conf

ExecStart=/usr/local/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kubelet.service --now

sleep 20

systemctl status kubelet.service -l

kubectl certificate approve $(kubectl get csr | awk '{if(NR>1) print $1}')

kubectl get csr

kubectl label node 172.31.2.11 node-role.kubernetes.io/master='master'

kubectl label node 172.31.2.12 node-role.kubernetes.io/node='node'

kubectl label node 172.31.2.13 node-role.kubernetes.io/node='node'

kubectl get nodes

After a successful deployment, the following output appears:

NAME STATUS ROLES AGE VERSION

172.31.2.11 Ready master 22m v1.13.2

172.31.2.12 Ready node 11h v1.13.2

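172.31.2.13 Ready node 11h v1.13.2

4.1 Installing Kubernetes from binaries is a fairly complex process with many steps. It requires a clear understanding of how the nodes and components relate to each other. Proceed step by step, move on only after the current step has succeeded, and do not rush.

4.2 Logs and help output are very important during installation and deployment. The journalctl command is used frequently, and --help often points the way out of a dead end.

4.3 Scripting the steps keeps the process clear and effective, a habit worth keeping in later work and study.

4.4 Because time was short, many custom settings were left unconfigured and will be refined later based on actual use. For example, the logs of each service or component are not yet stored separately.

4.5 Other shortcomings are being worked on.

4.6 The two images in this article come from the Internet. If they infringe any rights, please get in touch to have them removed.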

